AMD Confirms That SmartShift Tech Only Shipping in One Laptop For 2020

by Ryan Smith on June 5, 2020 5:00 PM EST

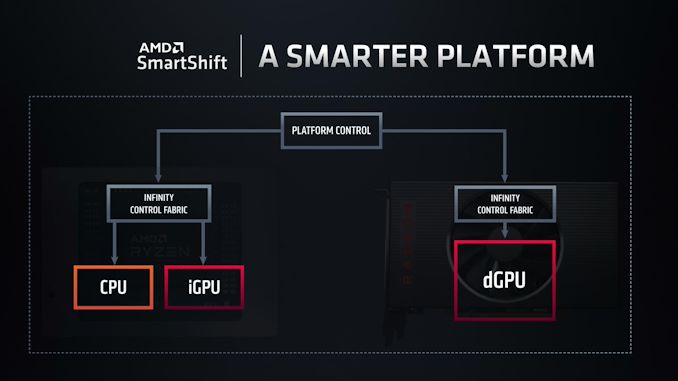

Launched earlier this year, AMD’s Ryzen 4000 “Renoir” APUs brought several new features and technologies to the table for AMD. Along with numerous changes to improve the APU’s power efficiency and reduce overall idle power usage, AMD also added an interesting TDP management feature that they call SmartShift. Designed for use in systems containing both an AMD APU and an AMD discrete GPU, SmartShift allows for the TDP budgets of the two processors to be shared and dynamically reallocated, depending on the needs of the workload.
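To make the idea of a shared, dynamically reallocated budget concrete, here is a minimal sketch. It is not AMD's algorithm, which this article does not describe. The function name, the per-processor floor values, and the proportional scaling rule are all assumptions chosen purely for illustration.

```python
def split_power_budget(total_w, cpu_demand_w, gpu_demand_w,
                       cpu_floor_w=5.0, gpu_floor_w=5.0):
    """Hypothetical split of one shared power budget between a CPU and a GPU.

    Each processor is guaranteed an assumed minimum (its "floor"). Whatever
    budget remains is handed out in proportion to how much each processor
    wants beyond its floor. None of these numbers come from AMD.
    """
    if total_w < cpu_floor_w + gpu_floor_w:
        raise ValueError("total budget cannot cover both floors")

    spare = total_w - cpu_floor_w - gpu_floor_w
    cpu_extra = max(cpu_demand_w - cpu_floor_w, 0.0)
    gpu_extra = max(gpu_demand_w - gpu_floor_w, 0.0)
    wanted = cpu_extra + gpu_extra

    if wanted <= spare:
        # Both requests fit inside the shared budget, so nothing is scaled.
        return cpu_floor_w + cpu_extra, gpu_floor_w + gpu_extra

    # Requests exceed the budget: scale both down by the same factor.
    scale = spare / wanted
    return cpu_floor_w + cpu_extra * scale, gpu_floor_w + gpu_extra * scale


# A GPU-heavy workload pulls most of the shared budget toward the GPU.
cpu_w, gpu_w = split_power_budget(100.0, cpu_demand_w=20.0, gpu_demand_w=120.0)
print(f"CPU {cpu_w:.1f} W, GPU {gpu_w:.1f} W")
```

In this toy model, rerunning the split whenever demand changes is what stands in for "dynamic reallocation"; a real implementation would also have to respect thermal and power-delivery limits.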

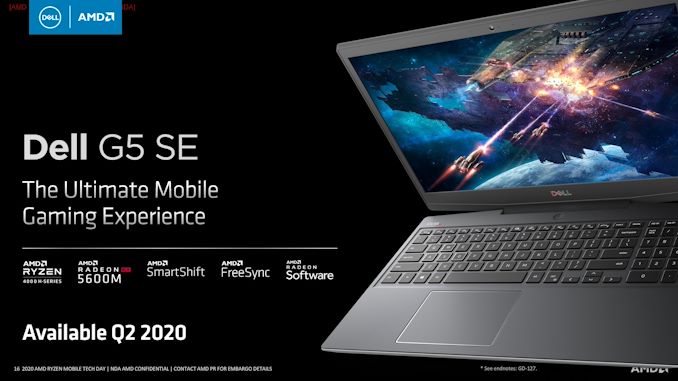

As SmartShift is a platform-level feature that relies upon several aspects of a system, from processor choice to the layout of the cooling system, it is a feature that OEMs have to specifically plan for and build into their designs. This means that even a laptop built entirely around AMD processors isn’t guaranteed to have the means to support SmartShift. As a result, only a single laptop has been released so far with SmartShift support, and that’s Dell’s G5 15 SE gaming laptop.

Now, as it turns out, Dell’s laptop will be the only laptop released this year with SmartShift support.

In a comment posted on Twitter and relating to an interview given to PCWorld’s The Full Nerd podcast, AMD’s Chief Architect of Gaming Solutions (and Dell alumnus) Frank Azor has confirmed that the G5 15 SE is the only laptop set to be released this year with SmartShift support. According to the gaming frontman, the roughly year-long development cycle for laptops combined with SmartShift’s technical requirements meant that vendors needed to plan for SmartShift support early on. And Dell, in turn, ended up being the first OEM to jump on the technology, leading to them being the first laptop vendor to release a SmartShift-enabled laptop.

It's a brand new technology and to @dell credit they jumped on it first. I explained reasons why during my interview with @pcworld @Gordonung @BradChacos No more SmartShift laptops are coming this year but the team is working hard on having more options ASAP for 2021.

— Frank Azor (@AzorFrank) June 4, 2020

Azor’s comment further goes on to confirm that AMD is working to get more SmartShift-enabled laptops on the market in 2021; there just won’t be any additional laptops this year. Which leaves us in an interesting situation where Dell, normally one of AMD's more elusive partners, has what's essentially a de facto exclusive on the tech for 2020.

Source: Twitter

54 Comments


Rookierookie - Saturday, June 6, 2020 - link

Now I actually wonder if AMD hiring Azor had something to do with Dell getting ahead in the SmartShift game.

zodiacfml - Sunday, June 7, 2020 - link

Still not clear how Smartshift works. Higher end laptops somehow manage this by having one or two heatpipes connecting the CPU and GPU, this way full system cooling is partly shared between the processors

Spunjji - Monday, June 8, 2020 - link

In the scenario you just described, SmartShift helps by tweaking the CPU and GPU to give you the maximum possible performance within the limits of the shared cooling and power delivery.

eastcoast_pete - Sunday, June 7, 2020 - link

@Ryan: Thanks for the information! Two questions, appreciate any information:

1. How much power does the integrated graphics of Renoir pull at maximum GPU load, and how badly is that eating into the thermal budget of a 4800h or 4800u?

2. Is Speedshift at risk of being mainly a way to "cheapen out" on overall cooling capability? I am a bit wary of Dell being the pioneer here, as some of my past (Intel + NVIDIA) Dell laptops looked great and had promising specs, but throttled fast and furious if I really challenged the dGPU. Fool me once and all that...

CiccioB - Sunday, June 7, 2020 - link

It is not surprising that this technology is not supported.

It is not because it doesn't work or it's not useful.

It is only because NO OEM want to install a AMD dGPU into their laptops: they are too much power hungry with respect to their performance. Any Pascal or Turing GPU do better than AMD ones, even if they are on 7nm.

See what Nvidia provides in their Studio labeled laptops with GPU using only 80-100W.

AMD is still way behind Nvidia in power efficiency and this is the main reason why there is only a humble number of laptops with AMD GPUs. And all cheap class. And it has been a long time, 10 years or more, this is so.

AMD should really create a completely new architecture that can compete in terms of power consumption and perf/transistor. Navi is not near that at all. Let's see what BigNavi is bringing with the promise of 50% better perf/watt, hoping it is not only an advantage brought by a new PP and only in some circumstances.

alufan - Sunday, June 7, 2020 - link

lets see what they both bring to the table later this year Lisa has a knack of doing what she says she will

CiccioB - Monday, June 8, 2020 - link

Up to now in the GPU market they failed to keep all promises they announced.

The actual scenery where no AMD dGPU are preferable over Nvidia ones in mobile market is quite self explanatory.

While in desktop market AMD can play with price over efficiency, even cutting prices before launching the product, in mobile market they cannot do anything for making their GPU preferable, even if they give them away for free.

Nvidia is already ahead in energy efficiency with 12nm (that's 16+nm), I can't see a reason while they can't be better than AMD at 7nm seen what AMD has done up to now with this PP, see Vega VII (what a fail) and Navi.

Spunjji - Monday, June 8, 2020 - link

RDNA kept all of its promises on release. No reason so far to assume RDNA2 will be different.

Vega VII was a specific product for a specific market and did okay in that regard. As a simple die shrink it got better performance and power than its 14nm predecessor. Not sure how you regard that as a failure.

CiccioB - Monday, June 8, 2020 - link

RDNA did not maintain anything because AMD simply did not say anything about what they were trying to obtain from GCN evolution. Probably previous generation failures taught something.

I remember the promised 2.5x better efficiency of Polaris versus GCN1.2 and we end with a worse efficient GPU that had to be overclocked to have the performances of a 20% smaller one. Going beyond the PCI specification of power consumption, showing the final product was not what was intended originally. And the thing got even worse with the further OC needed to show that that sh*t could be better than the smaller chip made by Nvidia. Consuming twice the power.

I can remember the hype and promises on what Vega should have been, better than the Titan X (yes, there are sentences made by AMD marketing about it) how that would have changed the market. And I can clearly remember what AMD had to do to sell it, that is cutting price before launching and giving away professional app accelerated drivers. That selling it in the consumer market at a loss.

Yes, that was changing the market. Making them lose money.

Should I remind you of Fiji?

You would describe a 7nm 300W power hungry board with the computation capacity of Vega VII a successful product?

At 300W and 7nm Nvidia simply rolls over that useless piece of silicon. Ampere just demonstrated it.

And RDNA is good because it is cheap. With the same number of transistor of the 1080Ti and the same power budget, it can't beat it. 7nm vs 16nm. 3 years later. With no new feature supported with respect to Pascal. It is just Pascal shrinked and made wrong.

So why you think OEM should prefer RDNA over Turing which is the right Pascal evolution made on a node that does not even allow transistor shrinking? What are you trying to defend?

OEM knows that at 7nm Nvidia is going to get rid of AMD dGPU on mobile market. They already do this at 12nm. At 7nm they will simply blow AMD away. They are simply avoiding to waste time and money on an already dead horse. In a market that is very competitive, never like before.

AMD dGPU are good on Apple devices that are for the Dandy and IT ignorant user that likes the design more than the actual performance of the device it has paid so much.

Keep it there, and be happy of that market share.

Yes, again, let's see next generation, the one that will make #poorvolta real... despite we are now approaching the second generation beyond Volta...

Spunjji - Monday, June 8, 2020 - link

Pascal does not have better power efficiency than RDNA. RDNA's absolute efficiency is, in fact, about the same as Turing when run at sensible clocks (see the vanilla 5700 on desktop). Turing is more efficient on an architectural level, but it's the end result (architecture + process) that matters for system design, and they're about even in that regard.

If power efficiency were the only reason for the lack of high-end AMD GPUs, it wouldn't make sense for integrators to use the low-end AMD GPUs either - they could use an equivalent Nvidia GPU and save weight and money on cooling at a really cost-critical end of the market. That's not the case, so clearly there's a little more to it.