Storage Matters: Why Xbox and PlayStation SSDs Usher In A New Era of Gaming

by Billy Tallis on June 12, 2020 9:30 AM EST

Posted in: SSDs, Storage, Microsoft, Sony, Consoles, NVMe, Xbox Series X, PlayStation 5

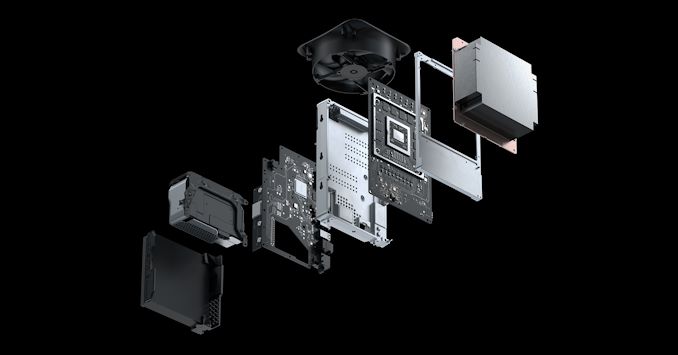

A new generation of gaming consoles is due to hit the market later this year, and the hype cycle for the Xbox Series X and PlayStation 5 has been underway for more than a year. Solid technical details (as opposed to mere rumors) have been slower to arrive, and we still know much less about the consoles than we typically know about PC platforms and components during the post-announcement, pre-availability phase. We have some top-line performance numbers and general architectural information from Microsoft and Sony, but not quite a full spec sheet.

The new generation of consoles will bring big increases in CPU and GPU capabilities, but we get that with every new generation and it's no surprise when console chips get the same microarchitecture updates as the AMD CPUs and GPUs they're derived from. What's more special with this generation is the change to storage: the consoles are following in the footsteps of the PC market by switching from mechanical hard drives to solid state storage, but also going a step beyond the PC market to get the most benefit out of solid state storage.

Solid State Drives were revolutionary for the PC market, providing immense improvements to overall system responsiveness. Games benefited mostly in the form of faster installation and level load times, but fast storage also helped reduce stalls and stuttering when a game needs to load data on the fly. In recent years, NVMe SSDs have provided speeds that are on paper several times faster than what is possible with SATA SSDs, but for gamers the benefits have been muted at best. Conventional wisdom holds that there are two main causes to suspect for this disappointment: First, almost all games and game engines are still designed to be playable off hard drives because current consoles and many low-end PCs lack SSDs. Game programmers cannot take full advantage of NVMe SSD performance without making their games unplayably slow on hard drives. Second, SATA SSDs are already fast enough to shift the bottleneck elsewhere in the system, often in the form of data decompression. Something aside from the SSD needs to be sped up before games can properly benefit from NVMe performance.

Microsoft and Sony are addressing both of those issues with their upcoming consoles. Game developers will soon be free to assume that users will have fast storage, both on consoles and on PCs. In addition, the new generation of consoles will add extra hardware features to address bottlenecks that would be present if they were merely mid-range gaming PCs equipped with cutting-edge SSDs. However, we're still dealing with powerful hype operations promoting these upcoming devices. Both companies are guilty of exaggerating or oversimplifying in their attempts to extol the new capabilities of their next consoles, especially with regard to the new SSDs. And since these consoles are still closed platforms that aren't even on the market yet, some of the most interesting technical details are still being kept secret.

The main source of official technical information about the PS5 (and especially its SSD) is lead designer Mark Cerny. In March, he gave an hour-long technical presentation about the PS5 and spent over a third of it focusing on storage. Less officially, Sony has filed several patents that apparently pertain to the PS5, including one that lines up well with what's been confirmed about the PS5's storage technology. That patent discloses numerous ideas Sony explored in the development of the PS5, and many of them are likely implemented in the final design.

Microsoft has taken the approach of more or less dribbling out technical details through sporadic blog posts and interviews, especially with Digital Foundry (who also have good coverage of the PS5). They've introduced brand names for many of their storage-related technologies (e.g. "Xbox Velocity Architecture"), but in too many cases we don't really know anything about a feature other than its name.

Aside from official sources, we also have leaks, comments and rumors of varying quality, from partners and other industry sources. These have definitely helped fuel the hype, but with regard to the console SSDs in particular, these non-official sources have produced very little in the way of real technical details. That leaves us with a lot of gaps that require analysis of what's possible and probable for the upcoming consoles to include.

What do we know about the console SSDs?

Microsoft and Sony are each using custom NVMe SSDs for their consoles, albeit with different definitions of "custom". Sony's solution aims for more than twice the performance of Microsoft's solution and is definitely more costly even though it will have the lower capacity. Broadly speaking, Sony's SSD will offer similar performance to the high-end PCIe 4.0 NVMe SSDs we expect to see on the retail market by the end of the year, while Microsoft's SSD is more comparable to entry-level NVMe drives. Both are a huge step forward from mechanical hard drives or even SATA SSDs.

| Console SSD Confirmed Specifications | Microsoft Xbox Series X | Sony PlayStation 5 |
|---|---|---|
| Capacity | 1 TB | 825 GB |
| Speed (Sequential Read) | 2.4 GB/s | 5.5 GB/s |
| Host Interface | NVMe | PCIe 4.0 x4 NVMe |
| NAND Channels | | 12 |
| Power | 3.8 W | |

The most important and impressive performance metric for the console SSDs is their sequential read speed. SSD write speed is almost completely irrelevant to video game performance, and even when games perform random reads it will usually be for larger chunks of data than the 4kB blocks that SSD random IO performance ratings are normally based upon. Microsoft's 2.4GB/s read speed is 10–20 times faster than what a mechanical hard drive can deliver, but falls well short of the current standards for high-end consumer SSDs which can saturate a PCIe 3.0 x4 interface with at least 3.5GB/s read speeds. Sony's 5.5GB/s read speed is slightly faster than currently-available PCIe 4.0 SSDs based on the Phison E16 controller, but everyone competing in the high-end consumer SSD market has more advanced solutions on the way. By the time it ships, the PS5 SSD's read performance will be unremarkable – matched by other high-end SSDs – except in the context of consoles and low-cost gaming PCs that usually don't have room in the budget for high-end storage.
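To put those sequential read figures in perspective, here is a rough back-of-the-envelope sketch in Python. The 10 GB asset size is a hypothetical value chosen purely for illustration, and the hard drive range is simply what the "10–20 times faster" comparison implies for Microsoft's 2.4GB/s figure, not a measured number. It also assumes purely sequential reads with no decompression or CPU bottleneck, which real games will not achieve.

```python
# Idealized time to stream a block of game data at each quoted read speed.
# ASSET_GB is a hypothetical size for illustration, not a figure from any game.
ASSET_GB = 10.0

XBOX_GB_S = 2.4   # Microsoft's quoted sequential read speed
PS5_GB_S = 5.5    # Sony's quoted sequential read speed

# Hard drive speeds implied by "10-20 times" slower than 2.4 GB/s.
HDD_FAST_GB_S = XBOX_GB_S / 10
HDD_SLOW_GB_S = XBOX_GB_S / 20


def read_seconds(size_gb: float, speed_gb_s: float) -> float:
    """Seconds to read size_gb at a sustained speed_gb_s."""
    return size_gb / speed_gb_s


xbox_s = read_seconds(ASSET_GB, XBOX_GB_S)
ps5_s = read_seconds(ASSET_GB, PS5_GB_S)
hdd_best_s = read_seconds(ASSET_GB, HDD_FAST_GB_S)
hdd_worst_s = read_seconds(ASSET_GB, HDD_SLOW_GB_S)

print(f"Hard drive:    {hdd_best_s:.1f} to {hdd_worst_s:.1f} s")
print(f"Xbox Series X: {xbox_s:.1f} s")
print(f"PlayStation 5: {ps5_s:.1f} s")
```

The absolute numbers matter less than the ratios: under these assumptions the gap between either console and a hard drive is far larger than the gap between the two consoles.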

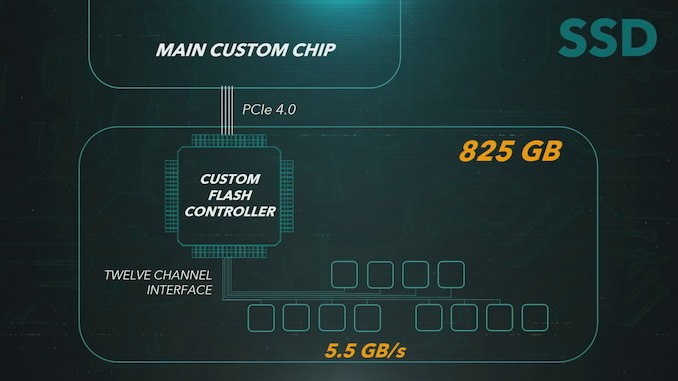

Sony has disclosed that their SSD uses a custom controller with a 12-channel interface to the NAND flash memory. This seems to be the most important way in which their design differs from typical consumer SSDs. High-end consumer SSDs generally use 8-channel controllers and low-end drives often use 4 channels. Higher channel counts are more common for server SSDs, especially those that need to support extreme capacities; 16-channel controllers are common and 12 or 18 channel designs are not unheard of. Sony's use of a higher channel count than any recent consumer SSD means their SSD controller will be uncommonly large and expensive, but on the other hand they don't need as much performance from each channel in order to reach their 5.5GB/s goal. They could use any 64-layer or newer TLC NAND and have adequate performance, while consumer SSDs hoping to offer this level of performance or more with 8-channel controllers need to be paired with newer, faster NAND flash.
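The trade-off between channel count and per-channel speed can be illustrated with a small Python sketch. It assumes the target bandwidth divides evenly across channels and ignores controller, ECC and protocol overhead, so the results are rough lower bounds on what each channel must sustain rather than real NAND interface ratings.

```python
# Per-channel read bandwidth needed to reach a target sequential read speed.
# Assumption: bandwidth splits evenly across channels with zero overhead.

def per_channel_mb_s(target_gb_s: float, channels: int) -> float:
    """MB/s each NAND channel must deliver (decimal units)."""
    return target_gb_s * 1000 / channels


TARGET_GB_S = 5.5  # Sony's stated read speed

ps5_12ch = per_channel_mb_s(TARGET_GB_S, 12)        # Sony's 12-channel design
consumer_8ch = per_channel_mb_s(TARGET_GB_S, 8)     # typical high-end consumer controller

print(f"12 channels: {ps5_12ch:.1f} MB/s per channel")
print(f" 8 channels: {consumer_8ch:.1f} MB/s per channel")
print(f"8-channel design needs {consumer_8ch / ps5_12ch:.2f}x the per-channel speed")
```

Under these simplifications an 8-channel drive needs 1.5 times the per-channel throughput to match the same total, which is why it has to be paired with newer, faster NAND.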

The 12-channel controller also leads to unusual total capacities. A console SSD doesn't need any more overprovisioning than typical consumer SSDs, so 50% more channels should translate to about 50% more usable capacity. The PS5 will ship with "825 GB" of SSD space, which means we should see each of the 12 channels equipped with 64GiB of raw NAND, organized as either one 512Gbit (64GiB) die or two 256Gbit (32GiB) dies per channel. That means the nominal raw capacity of the NAND is 768GiB or about 824.6 (decimal) GB. The usable capacity after accounting for the requisite spare area reserved by the drive is probably going to be more in line with what would be branded as 750 GB by a drive manufacturer, so Sony's 825GB is overstating things by about 10% more than normal for the storage industry. It's something that may make a few lawyers salivate.
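The capacity arithmetic is easy to check with a few lines of Python. The channel count and per-channel NAND size come from the reasoning above; the 750 GB figure is the article's estimate of conventional branding, not a confirmed specification.

```python
# Binary (GiB) vs decimal (GB) accounting for the PS5's raw NAND capacity.
GIB = 2**30   # bytes in one gibibyte
GB = 10**9    # bytes in one decimal gigabyte

CHANNELS = 12
GIB_PER_CHANNEL = 64          # one 512Gbit die or two 256Gbit dies

raw_bytes = CHANNELS * GIB_PER_CHANNEL * GIB
raw_gib = raw_bytes / GIB     # nominal raw capacity in GiB
raw_gb = raw_bytes / GB       # the same capacity in decimal GB

ADVERTISED_GB = 825           # Sony's stated capacity
TYPICAL_BRANDING_GB = 750     # estimate of what a drive vendor would advertise

overstatement = ADVERTISED_GB / TYPICAL_BRANDING_GB - 1

print(f"Raw NAND: {raw_gib:.0f} GiB = {raw_gb:.1f} GB")
print(f"Advertised vs typical branding: {overstatement:.0%} higher")
```

The raw figure lands almost exactly on Sony's advertised number, which suggests the "825 GB" label counts all of the NAND rather than the usable space left after spare area is set aside.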

It's probably worth mentioning here that it is unrealistic for Sony to have designed their own high-performance NVMe SSD controller, just like they can't do a CPU or GPU design on their own. Sony had to partner with an existing SSD controller vendor and commission a custom controller, probably assembled largely from pre-existing and proven IP, but we don't know who that partner is.

Microsoft's SSD won't be pushing performance at all beyond normal new PC levels now that OEMs have moved beyond SATA SSDs, but a full 1TB in a PC priced similarly to consoles would still be a big win for consumers. Multiple sources indicate that Microsoft is using an off-the-shelf SSD controller from one of the usual suspects (probably the Phison E19T controller), and the drive itself is built by a major SSD OEM. However, they can still lay claim to using a custom form factor and probably custom firmware.

Neither console vendor has shared official information about the internals of their SSD aside from Sony's 12-channel specification, but the capacities and performance numbers give us a clue about what to expect. Sony's pretty much committed to using TLC NAND, but Microsoft's lower performance target is down in the territory where QLC NAND is an option: 2.4GB/s is a bit more than we see from current 4-channel QLC drives like the Intel 665p (about 2GB/s) but much less than 8-channel QLC drives like the Sabrent Rocket Q (rated 3.2GB/s for the 1TB model). The best fit for Microsoft's expected performance among current SSD designs would be a 4-channel drive with TLC NAND, but newer 4-channel controllers like the Phison E19T should be able to hit those speeds with the right QLC NAND. Either console could conceivably get a double-capacity version in the future that uses QLC NAND to reach the same read performance as the original models.

DRAMless, but that's OK?

Without performance specs for writes or random reads, we cannot rule out the possibility of either console SSD using a DRAMless controller. Including a full-sized DRAM cache for the flash translation layer (FTL) tables on an SSD primarily helps performance in two ways: better sustained write speeds when the drive's full enough to require a lot of background work shuffling data around, and better random access speed when reading data across the full range of the drive. Neither of those really fits the console use case: very heavily read-oriented, and only accessing one game's dataset at a time. Even if game install sizes end up being in the 100–200GB range, at any given moment the amount of data used by a game won't be more than low tens of GB, and that is easily handled by DRAMless SSDs with a decent amount of SRAM on the controller itself. Going DRAMless seems very likely for Microsoft's SSD, and while it would be very strange in any other context to see a 12-channel DRAMless controller, that option does seem to be viable for Sony (and would offset the cost of the high channel count).

The Sony patent mentioned earlier goes in depth on how to make a DRAMless controller even more suitable for console use cases. Rather than caching a portion of the FTL's logical-to-physical address mapping table in on-controller SRAM, Sony proposes making the table itself small enough to fit in a small SRAM buffer. Mainstream SSDs have a ratio of 1 GB of DRAM for each 1 TB of flash memory. That ratio is a direct consequence of the FTL managing flash in 4kB chunks. Having the FTL manage flash in larger chunks directly reduces the memory requirements for the mapping table. The downside is that small writes will cause much more write amplification and be much slower. Western Digital sells a specialized enterprise SSD that uses 32kB chunks for its FTL rather than 4kB, and as a result it only needs an eighth the amount of DRAM. That drive's random write performance is poor, but the read performance is still competitive. Sony's patent proposes going way beyond 32kB chunks to using 128MB chunks for the FTL, shrinking the mapping table to mere kilobytes. That requires the host system to be very careful about when and where it writes data, but the read performance that gaming relies upon is not compromised.
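The relationship between FTL chunk size and mapping table size can be sketched in a few lines of Python. This assumes a flat table with one 4-byte entry per chunk, which is an illustrative assumption consistent with the 1 GB per 1 TB rule of thumb rather than a detail taken from Sony's patent or any specific controller. Binary units are used throughout for round numbers.

```python
# Size of a flat logical-to-physical mapping table for a given FTL chunk size.
# ENTRY_BYTES = 4 is an assumed entry width, chosen because it reproduces the
# ~1 GB of DRAM per 1 TB of flash ratio at 4kB granularity.
KIB = 2**10
MIB = 2**20
GIB = 2**30
TIB = 2**40

ENTRY_BYTES = 4


def ftl_table_bytes(flash_bytes: int, chunk_bytes: int,
                    entry_bytes: int = ENTRY_BYTES) -> int:
    """Bytes needed to map every chunk of flash with one entry each."""
    entries = flash_bytes // chunk_bytes
    return entries * entry_bytes


table_4k = ftl_table_bytes(TIB, 4 * KIB)       # mainstream consumer SSD
table_32k = ftl_table_bytes(TIB, 32 * KIB)     # the enterprise drive example
table_128m = ftl_table_bytes(TIB, 128 * MIB)   # the granularity in Sony's patent

print(f"4kB chunks:   {table_4k / GIB:.0f} GiB")
print(f"32kB chunks:  {table_32k / MIB:.0f} MiB")
print(f"128MB chunks: {table_128m / KIB:.0f} KiB")
```

Each eightfold increase in chunk size cuts the table to an eighth, so moving from 4kB to 128MB chunks shrinks it by a factor of 32,768. That is what lets the entire table fit in on-controller SRAM instead of external DRAM.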

In short, while the Sony SSD should be very fast for its intended purpose, I'm going to wager that you really wouldn't want it in your Windows PC. The same is probably true to some extent of Microsoft's SSD, depending on their firmware tuning decisions.

Expandability

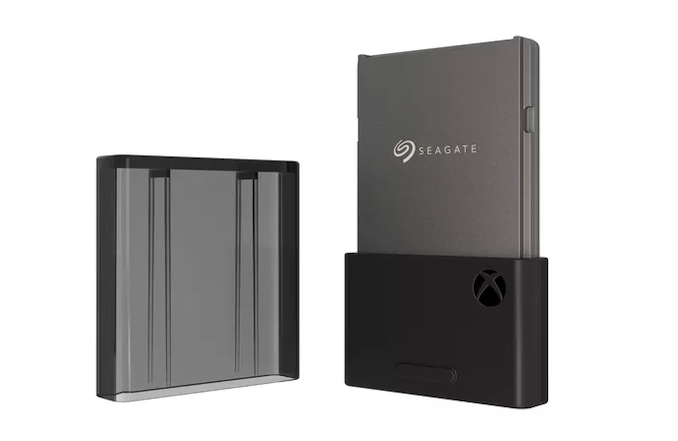

Both Microsoft and Sony are providing expandability for the NVMe storage of their upcoming consoles. Microsoft's solution is to re-package their internal SSD into a custom removable form factor reminiscent of what consoles used back when memory cards were measured in MB instead of TB and before USB flash drives were ubiquitous. Since it uses all the same components, this expansion card will be functionally identical to the internal storage. The downside is that Microsoft will control the supply and probably pricing of the cards; currently Seagate is the only confirmed partner for selling these proprietary expansion cards.

Sony is taking the opposite approach, by giving users access to a standard M.2 PCIe 4.0 slot that can accept aftermarket upgrades. The requirements aren't entirely clear: Sony will be doing compatibility testing with third-party drives in order to publish a compatibility list, but they haven't said whether drives not on their approved list will be rejected by the PS5 console. To make it onto Sony's compatibility list, a drive will need to mechanically fit (i.e. no excessively large heatsink) and offer at least as much performance as Sony's custom internal SSD. The performance requirements mean no drive currently available at retail will qualify, but the situation will be very different next year.

200 Comments


eddman - Monday, June 15, 2020

Yes, I added the CPU in the paths simply because the data goes through the CPU complex, but not necessarily through the cores.

"Data coming in from the SSD can be forwarded .... to the GPU (P2P DMA)"

You mean the data does not go through system RAM? The CPU still has to process the I/O related operations, right?

It seems nvidia has tackled this issue with a proprietary solution for their workstation products:

https://developer.nvidia.com/gpudirect

https://devblogs.nvidia.com/gpudirect-storage/

They talk about the data path between GPU and storage.

"The standard path between GPU memory and NVMe drives uses a bounce buffer in system memory that hangs off of the CPU.

GPU DMA engines cannot target storage. Storage DMA engines cannot target GPU memory through the file system without GPUDirect Storage.

DMA engines, however, need to be programmed by a driver on the CPU."

Maybe MS' DirectStorage is similar to nvidia's solution.

Oxford Guy - Monday, June 15, 2020

"Consoles" are nothing more than artificial walled software gardens that exist because of consumer stupidity.

They offer absolutely nothing the PC platform can't offer, via Linux + Vulkan + OpenGL.

Period.

Oxford Guy - Monday, June 15, 2020

"but also going a step beyond the PC market to get the most benefit out of solid state storage."

In order to justify their existence. Too bad it doesn't justify it.

It's more console smoke and mirrors. People fall for it, though.

Oxford Guy - Monday, June 15, 2020

Consoles made sense when personal computer hardware was too expensive for just playing games, for most consumers.

Back in the day, when real consoles existed, even computer expansion modules didn't take off. Why? Cost. Those "consoles" were really personal computers. All they needed was a keyboard, writable storage, etc. But, people didn't upgrade ANY console to a computer in large numbers. Even the NES had an expansion port on the bottom that sat unused. Lots of companies had wishful thinking about turning a console into a PC and some of them used that in marketing and were sued over vaporware/inadequate-and-delayed-ware (Intellivision).

Just the cost of adding a real keyboard was more than consumers were willing to pay. Even inexpensive personal computers (PCs!) had chiclet keyboards, like the Atari 400. That thing cost a lot to build because of the stricter EMI emissions standards of its time but Atari used a chiclet keyboard anyway to save money. Sinclair also used them. Many inexpensive "home" computers that had full-travel keyboards were so mushy they were terrible to use. Early home PCs like the VideoBrain highlight just how much companies tried to cut corners just on the keyboard.

Then, there is the writable storage. Cassettes were too slow and were extremely unreliable. Floppy drives were way too expensive for most PC consumers until the Apple II (where Wozniak developed a software controller to reduce cost a great deal vs. a mechanical one). They remained too expensive for gaming boxes, with the small exception of the shoddy Famicom FDS in Japan.

All of these problems were solved a long time ago. Writable storage is dirt cheap. Keyboards are dirt cheap. Full-quality graphics display hardware is dirt cheap (as opposed to the true console days when a computer with more pixels/characters would cost a bundle and "consoles" would have much less resolution).

The only thing remaining is the question: "Is the PC software ecosystem good enough". The answer was a firm no when it was Windows + DirectX. Now that we have Vulkan, though, there is no need for DirectX. Developers can use either the low-latency lower-level Vulkan or the high-level OpenGL, depending upon their needs for specific titles. Consumers and companies don't have to pay the Microsoft tax because Linux is viable.

There literally is no credible justification for the existence of non-handheld "consoles" anymore. There hasn't been for some time now. The hardware is the same. In the old days a console would have much less RAM memory, due to cost. It would have much lower resolution, typically, due to cost. It wouldn't have high storage capacity, due to cost.

All of that is moot. There is NOT ONE IOTA of difference between today's "console" and a PC. The walled software garden can evaporate. All it takes is Dorothy to use her bucket of water instead of continuing to drink the Kool-Aid.

Oxford Guy - Monday, June 15, 2020

Back in the day:

A console had:

much lower-resolution graphics, designed for TV sets at low cost

much less RAM

no floppy drive

no keyboard

no hard disk

A quality personal computer had:

more RAM, plus expansion (except for Jobs perversities like the original Mac)

80 column character-based or, later, high-resolution bitmapped monitor graphics

(there were some home PCs that used televisions but had things like disk drives)

floppy drive support

hard disk support (except, again, for the first Mac, which was a bad joke)

a full-travel full-size non-mushy keyboard

expansion slots (typically — not the first Mac!)

an operating system and first-party software (both of which cost)

thick paperback manuals

typically, a more powerful CPU (although not always)

Today:

A console has:

Nothing a PC doesn’t have except for a stupid walled software garden.

A PC has:

Everything a console has except for the ludicrous walled software garden, a thing that offers no added value for consumers — quite the opposite.

Oxford Guy - Monday, June 15, 2020

The common claim that "consoles" of today offer more simplicity is a lie, too.

In the true console days, you'd stick a cartridge in, turn on the power, and press start.

Today, just as with the "PC" (really the same thing) — you have a complex operating system that needs to be patched relentlessly. You have games that have to be patched relentlessly. You have microtransactions. You have log-ins/accounts and software stores. Literally, you have games on disc that you can't even play until you patch the software to be compatible with the latest OS DRM. Developers also helpfully use that as an opportunity to drastically change gameplay (as with PS3 Topspin) and you have no choice in the matter. Remember, it's always an "upgrade".

The hardware is identical. Even the controllers, once one of the few advantages of consoles (except for some, like the Atari 5200, which were boneheaded), are the same. They use the same USB ports and such. There is no difference. Even if there were, the rise of Chinese manufacturing and the Internet means you could get a cheap and effective adapter with minimal fuss.

You want fast storage so badly? You can get it on the PC. You want software that is honed to be fast and efficient? Easily done. It's all x86 stuff.

Give me justified elaborate custom chips (not frivolous garbage like Apple's T2), truly novel form factors that are needed for special gameplay, and things like that and then, maybe, you might be able to sell to people on the higher end of the Bell curve.

If I were writing an article on consoles I'd use a headline something like this: "Consoles of 2020: The SSD Speed Gimmick — Betting on the Bell Curve"

It would be bad enough if there were only one extra stupid walled garden (beyond Windows + DirectX). But to have three is even more irksome.

edzieba - Monday, June 15, 2020

"partially resident textures"

Megatexturing is back!

"The most interesting bit about DirectStorage is that Microsoft plans to bring it to Windows, so the new API cannot be relying on any custom hardware and it has to be something that would work on top of a regular NTFS filesystem. "

The latter does not imply the former. API support just means that the API calls will not fail. It doesn't mean they will be as fast as a system using dedicated hardware to handle those calls. Just like with DXR: you can easily support DXR calls on a GPU without dedicated BVH traversal hardware, they'll just be as slow as unaccelerated raytracing has always been.

Soft API support for DirectStorage makes sense to aid in Microsoft's quest for 'cross play' between PC and Xbox. If the same API calls can be used for both, developers are more likely to put work into implementing DirectStorage. As long as DirectStorage doesn't have too large a penalty when used on PC without dedicated hardware, the reduction in dev overhead is attractive.

eddman - Monday, June 15, 2020

"The latter does not imply the former. API support just means that the API calls will not fail. It doesn't mean they will be as fast as a system using dedicated hardware to handle those calls."

True, but apparently nvidia's GPUDirect Storage, which enables direct transfer between GPU and storage, is a software only solution and doesn't require specialized hardware.

If that's the case, then there's a good chance MS' DirectStorage is a software solution too.

As far as I can tell, the custom I/O chips in XSX and PS5 are used for compressing the assets to increase the bandwidth, not to enable direct GPU-to-storage access.

We'll know soon enough.

ichaya - Monday, June 15, 2020

You have to ask: What is causing low FPS for current gen games? I think loading textures is by far the largest culprit, and even in cases where it's only a few levels or a few sections of a few levels, it does affect the overall immersion and playability of games where all of this storage tech should help.

Oxford Guy - Monday, June 15, 2020

I love how people forget how there is fast storage available on the "PC" (in quotes because, except for the Switch, these Sony/MS "consoles" are PCs with smoke and mirrors trickery to disguise that fact, the fact that all they are is stupidity taxes).

Yes, stupidity taxes. That's exactly what "consoles" are, except for the Switch, which has a form factor that differs from PC.