AMD Zen 3 Ryzen Deep Dive Review: 5950X, 5900X, 5800X and 5600X Tested

by Dr. Ian Cutress on November 5, 2020 9:01 AM EST

Section by Andrei Frumusanu

The New Zen 3 Core: Front-End Updates

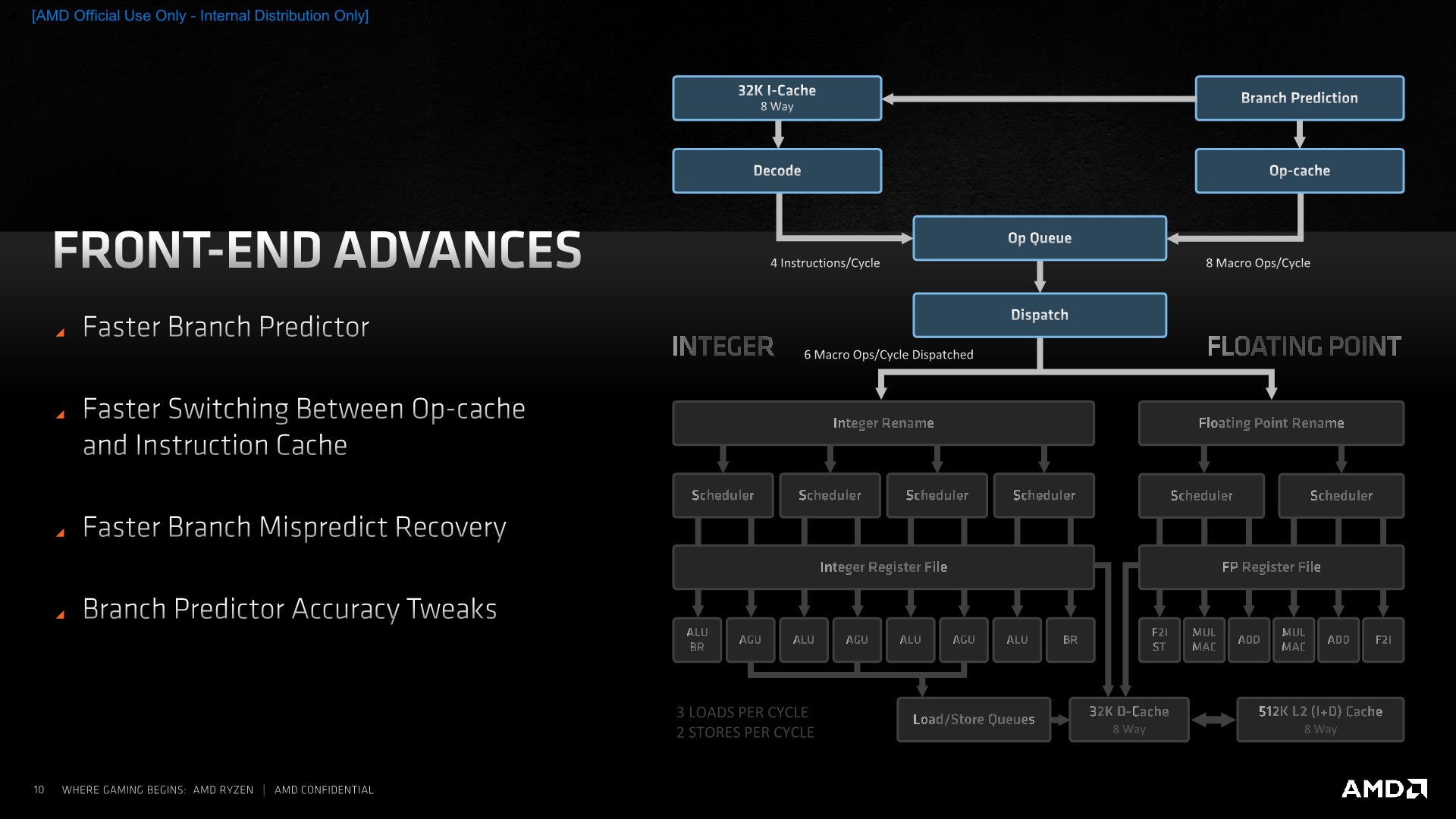

Moving on, let’s see what makes the Zen3 microarchitecture tick and detail how it actually improves on its predecessor design, starting with the front-end of the core, which includes branch prediction, decode, the OP-cache path and instruction cache, and the dispatch stage.

From a high-level overview, Zen3’s front-end looks the same as on Zen2, at least from a block-diagram perspective. The fundamental building blocks are the same, starting off with the branch-predictor unit, which AMD calls state-of-the-art. This feeds into a 32KB instruction cache, which forwards instructions into a 4-wide decode block. There are still two paths into the OP-queue: instructions that have already been decoded once are stored in the OP-cache, and when they are seen again they can be retrieved from there with greater bandwidth (8 Macro-Ops/cycle) and with less power consumption.

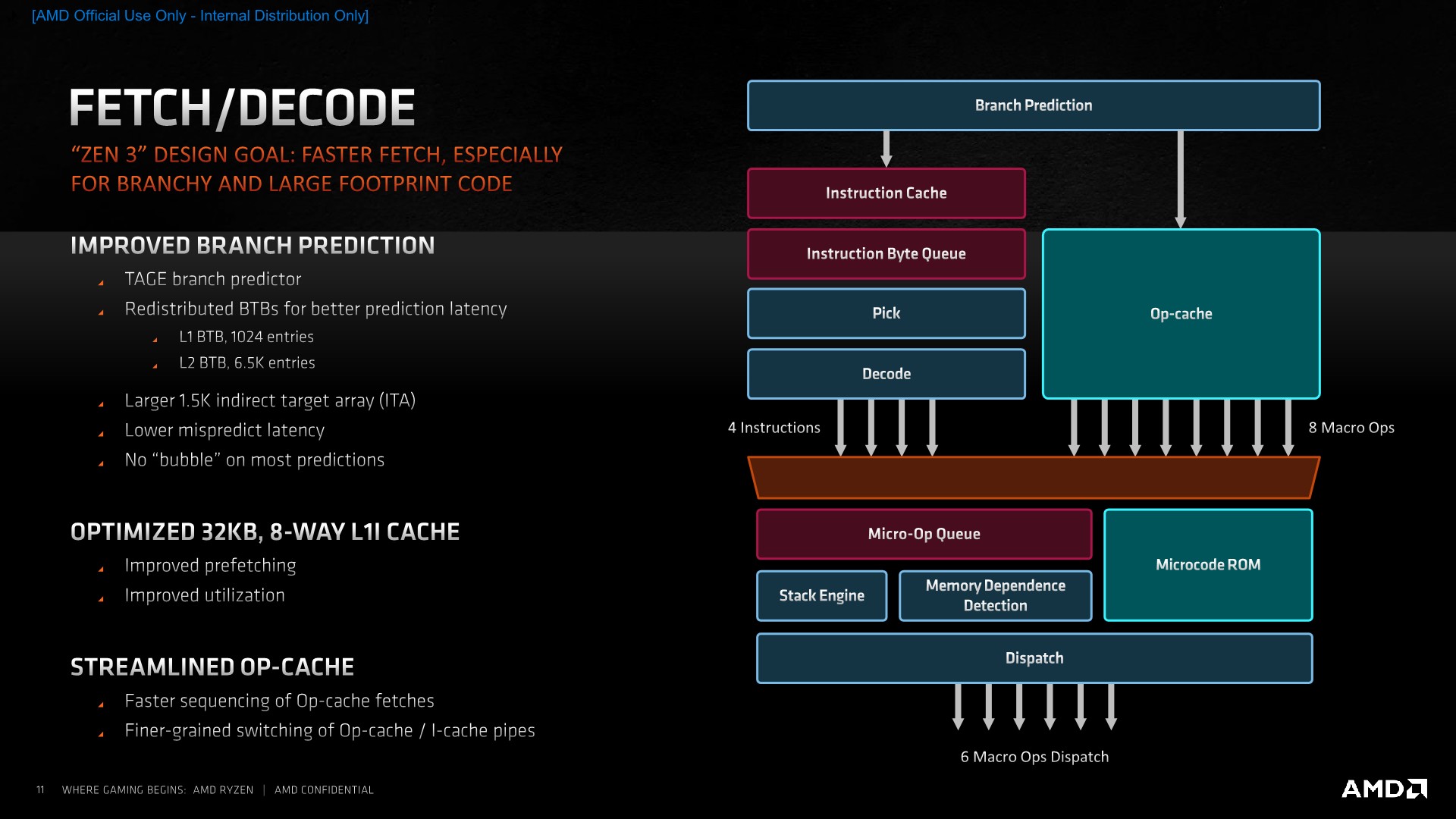

Improvements to the actual blocks in the Zen3 core include a faster branch predictor that is able to predict more branches per cycle. AMD wouldn’t detail exactly what this means, but we suspect it alludes to two branch predictions per cycle instead of just one. This is still a TAGE-based design, as introduced in Zen2, and AMD does say that it has been able to improve the accuracy of the predictor.
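As a rough illustration of why a TAGE-style predictor can beat a simple per-branch counter, the sketch below models the core idea: several tables indexed by the branch address plus geometrically longer slices of global history, with the longest matching table providing the prediction. This is a heavily simplified toy, not AMD’s implementation. The class name `ToyTage`, the history lengths, the counter range and the allocation policy are all assumptions for illustration, and real designs add partial tags, usefulness counters and fixed-size tables.

```python
class ToyTage:
    """Heavily simplified TAGE-style direction predictor (illustrative only)."""

    def __init__(self, history_lengths=(2, 4, 8, 16)):
        self.lengths = history_lengths            # geometric series of history lengths
        self.mask = (1 << max(history_lengths, default=0)) - 1
        self.history = 0                          # global outcome history, newest bit is the LSB
        self.base = {}                            # pc -> counter (plain bimodal fallback)
        self.tables = [{} for _ in history_lengths]  # (pc, history slice) -> counter

    def _key(self, pc, length):
        return (pc, self.history & ((1 << length) - 1))

    def predict(self, pc):
        """Return (predicted_taken, provider index); provider None means the base table."""
        for i in reversed(range(len(self.lengths))):
            counter = self.tables[i].get(self._key(pc, self.lengths[i]))
            if counter is not None:
                return counter >= 0, i            # longest matching history wins
        return self.base.get(pc, 0) >= 0, None

    def update(self, pc, taken):
        """Train on the real outcome; return True if the prediction was correct."""
        predicted, provider = self.predict(pc)
        step = 1 if taken else -1
        if provider is None:
            self.base[pc] = max(-2, min(1, self.base.get(pc, 0) + step))
        else:
            key = self._key(pc, self.lengths[provider])
            self.tables[provider][key] = max(-2, min(1, self.tables[provider][key] + step))
        # On a misprediction, allocate an entry in the next-longer history table.
        if predicted != taken:
            nxt = 0 if provider is None else provider + 1
            if nxt < len(self.lengths):
                self.tables[nxt][self._key(pc, self.lengths[nxt])] = 0 if taken else -1
        self.history = ((self.history << 1) | int(taken)) & self.mask
        return predicted == taken


# Loop-like branch: taken five times, then falls through, repeated.
pattern = [True] * 5 + [False]


def mispredictions(predictor, periods=100):
    wrong = 0
    for _ in range(periods):
        for taken in pattern:
            if not predictor.update(0x400, taken):   # 0x400 is an arbitrary branch address
                wrong += 1
    return wrong


print("history-based:", mispredictions(ToyTage()))
print("counter only: ", mispredictions(ToyTage(history_lengths=())))
```

On this pattern the counter-only predictor gets the loop exit wrong on every iteration of the outer loop, while the history-based tables stop mispredicting once a long enough history slice has been allocated.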

Amongst the branch unit structure changes, we’ve seen a rebalancing of the BTBs, with the L1 BTB now doubling in size from 512 to 1024 entries. The L2 BTB has seen a slight reduction from 7K to 6.5K entries, but this allowed the structure to be more efficient. The indirect target array (ITA) has also seen a more substantial increase from 1024 to 1536 entries.
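To give a feel for why first-level BTB capacity matters, here is a minimal sketch of a direct-mapped branch target buffer run against a synthetic working set of branches. AMD has not disclosed how the real structure is indexed or how associative it is, so the direct-mapped organisation, the `ToyBTB` name and the 768-branch working set are assumptions chosen purely for illustration.

```python
class ToyBTB:
    """Direct-mapped branch target buffer (illustrative, not AMD's organisation)."""

    def __init__(self, entries):
        self.entries = entries
        self.table = [None] * entries      # each slot holds (branch_pc, target)

    def lookup(self, pc):
        slot = self.table[pc % self.entries]
        if slot is not None and slot[0] == pc:
            return slot[1]
        return None

    def update(self, pc, target):
        self.table[pc % self.entries] = (pc, target)


def hit_rate(btb, branch_pcs, passes=4):
    """Replay the same set of branches several times and count BTB hits."""
    hits = lookups = 0
    for _ in range(passes):
        for pc in branch_pcs:
            lookups += 1
            if btb.lookup(pc) is not None:
                hits += 1
            else:
                btb.update(pc, pc + 64)    # arbitrary synthetic target
    return hits / lookups


# Hypothetical working set: 768 distinct branches, more than 512 but fewer than 1024.
branches = list(range(768))
small_rate = hit_rate(ToyBTB(512), branches)
large_rate = hit_rate(ToyBTB(1024), branches)
print(f"512 entries:  {small_rate:.2f}")
print(f"1024 entries: {large_rate:.2f}")
```

In this toy, the working set thrashes the 512-entry table but fits entirely in the 1024-entry one, so only the first pass misses in the larger structure.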

If there is a misprediction, the new design reduces the cycle latency required to get a new stream going. AMD wouldn’t detail the absolute misprediction penalty in cycles or how much faster it is in this generation, but if the misprediction penalty is indeed reduced, it would be one of the more significant performance boosts to the overall design.

AMD claims no bubbles on most predictions due to the increased branch predictor bandwidth. Here I can see parallels to what Arm introduced with the Cortex-A77, where a similarly doubled-up branch predictor bandwidth is able to run ahead of subsequent pipeline stages and thus fill bubble gaps before they hit the execution stages and potentially stall the core.

On the side of the instruction cache, we didn’t see a change in the size of the structure, as it’s still a 32KB 8-way block; however, AMD has improved its utilisation. Prefetchers are now said to be more efficient and aggressive in pulling data out of the L2 ahead of it being used in the L1. We don’t know exactly what kind of pattern AMD alludes to having improved here, but if the L1I behaves the same as the L1D, then adjacent cache lines would be pulled into the L1I here as well. AMD wasn’t willing to divulge details on how utilisation has been improved, but we suspect a new cache line replacement policy to be a key aspect of this improvement.

As this is an x86 core, one of the difficulties of the ISA is that instructions are of variable length, with encodings varying from 1 byte to 15 bytes. This is a legacy side-effect of the continuous extensions to the instruction set over the decades, and as modern CPU microarchitectures become wider in their execution throughput, it has become an issue for architects to design efficient wide decoders. For Zen3, AMD opted to remain with a 4-wide design, as going wider would have meant additional pipeline cycles, which would have reduced the performance of the whole design.
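The difficulty with variable-length decode can be sketched in a few lines: the start of each instruction is only known once the length of every instruction before it is known. The example below uses a made-up encoding in which the low four bits of the first byte give the instruction length; this is not real x86 encoding, and the function names are hypothetical. The second function shows the brute-force alternative of computing a candidate length at every byte offset, which can be done in parallel but costs one length decode per byte and still needs a dependent selection step.

```python
def toy_length(code, offset):
    """Hypothetical encoding: the low 4 bits of the first byte give the length (1-15)."""
    return (code[offset] & 0x0F) or 1


def serial_boundaries(code):
    """Find instruction starts one after another; each start depends on the previous length."""
    starts, offset = [], 0
    while offset < len(code):
        starts.append(offset)
        offset += toy_length(code, offset)
    return starts


def speculative_boundaries(code):
    """Compute a candidate length at every byte (parallelisable), then pick the real starts."""
    candidates = [toy_length(code, i) for i in range(len(code))]  # one length decode per byte
    starts, offset = [], 0
    while offset < len(code):          # the selection step is still a dependent chain
        starts.append(offset)
        offset += candidates[offset]
    return starts, len(candidates)


# Four toy instructions of lengths 3, 1, 5 and 2 packed into 11 bytes.
code = bytes([0x03, 0xAA, 0xBB, 0x01, 0x05, 0x00, 0x00, 0x00, 0x00, 0x02, 0x00])
print(serial_boundaries(code))
print(speculative_boundaries(code))
```

Both approaches find the same four instruction starts, but the speculative one performs eleven length decodes to do so, which hints at why every extra decoder slot is costly.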

Bypassing the decode stage through a structure such as the OP-cache is nowadays the preferred method to solve this issue, with the first-generation Zen microarchitecture being the first AMD design to implement such a block. However, such a design also brings problems, such as one set of instructions residing in the instruction cache while its target resides in the OP-cache, whose own target might in turn be found back in the instruction cache. AMD found this to be quite a large inefficiency in Zen2, and thus evolved the design to better handle instruction flows from both the I-cache and the OP-cache and to deliver them into the µOP-queue. AMD’s researchers seem to have published a more in-depth paper addressing the improvements.

On the dispatch side, Zen3 remains a 6-wide machine, emitting up to 6 Macro-Ops per cycle to the execution units, meaning that the maximum IPC of the core remains at 6. The OP-cache being able to deliver 8 Macro-Ops per cycle into the µOP-queue serves as a mechanism to further reduce pipeline bubbles in the front-end, as the full 8-wide width of that structure wouldn’t be hit at all times.
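The benefit of filling the queue faster than it drains can be shown with a toy cycle-by-cycle model: a front-end that delivers ops into a queue on most cycles but occasionally stalls, and a back-end that removes up to 6 per cycle. The bubble pattern (one empty cycle in every four), the queue capacity and the function name are assumptions for illustration only; none of them are disclosed figures.

```python
def dispatched_ops(fill_width, dispatch_width, bubble_every, cycles, queue_capacity=48):
    """Count ops dispatched when the front-end stalls on every `bubble_every`-th cycle."""
    queue = 0
    dispatched = 0
    for cycle in range(cycles):
        # The back-end drains whatever was queued in earlier cycles, up to its width.
        issued = min(queue, dispatch_width)
        queue -= issued
        dispatched += issued
        # The front-end refills the queue unless this cycle is a bubble.
        is_bubble = (cycle % bubble_every) == bubble_every - 1
        if not is_bubble:
            queue = min(queue_capacity, queue + fill_width)
    return dispatched


matched = dispatched_ops(fill_width=6, dispatch_width=6, bubble_every=4, cycles=40)
wider = dispatched_ops(fill_width=8, dispatch_width=6, bubble_every=4, cycles=40)
print("6-wide fill:", matched)
print("8-wide fill:", wider)
```

With a 6-wide fill, every front-end bubble turns into an idle dispatch cycle. With an 8-wide fill, the surplus accumulated on good cycles covers the bubble, so in this model dispatch stays at its full width after the first cycle.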

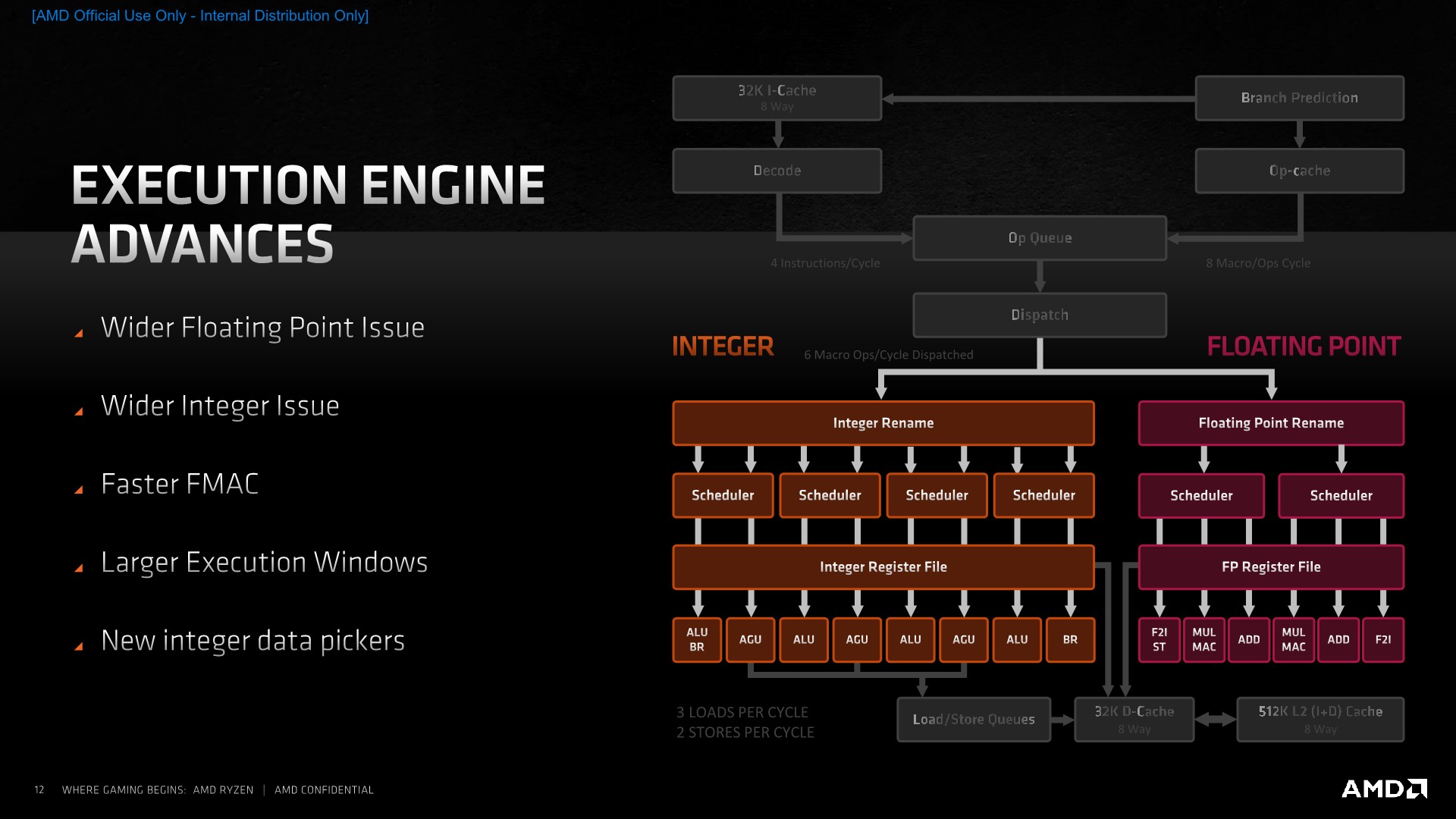

On the execution engine side of things, the design has had a larger overhaul: the Zen3 core widens both the integer and floating-point issue width, with larger execution windows and lower-latency execution units.

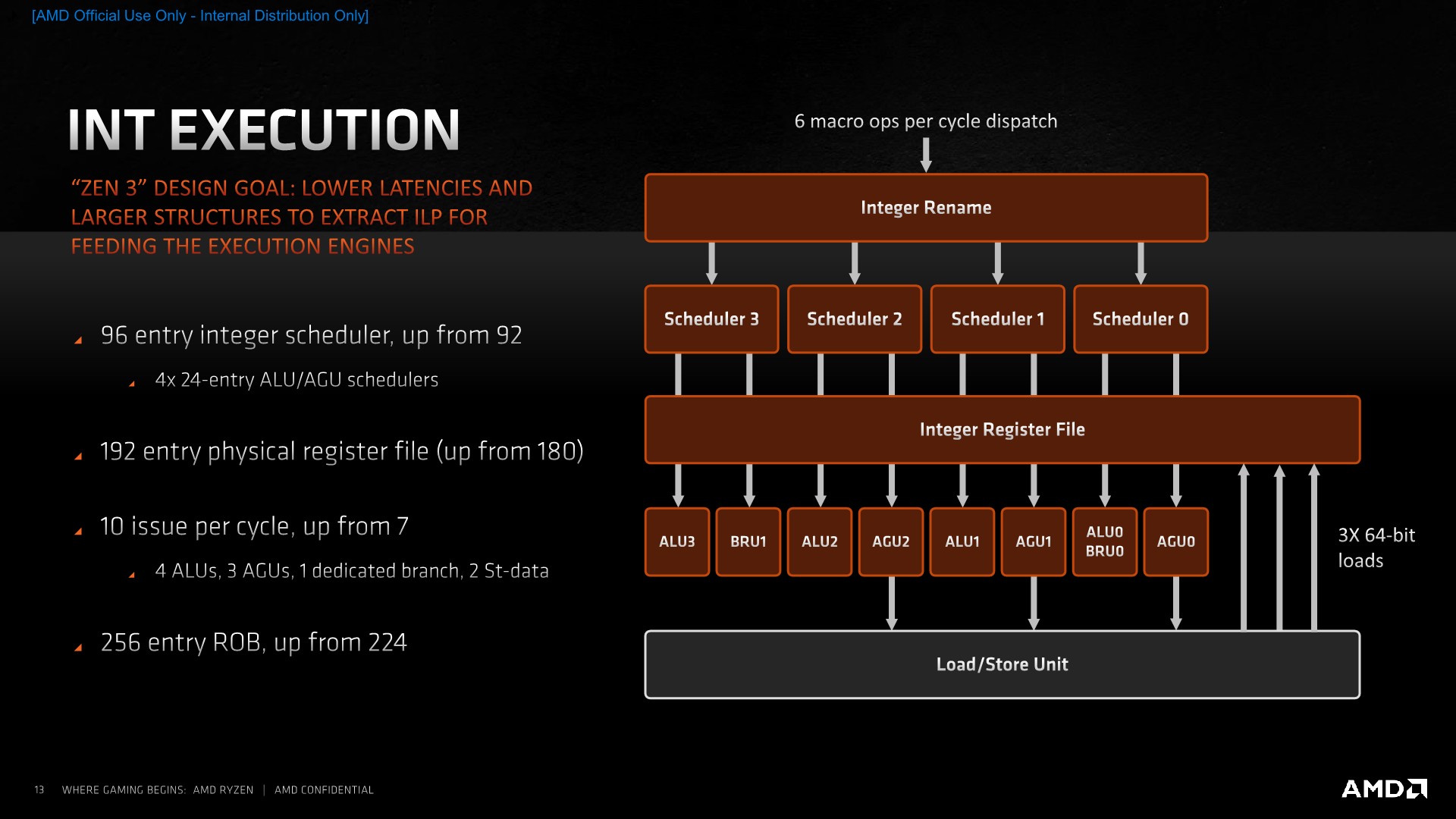

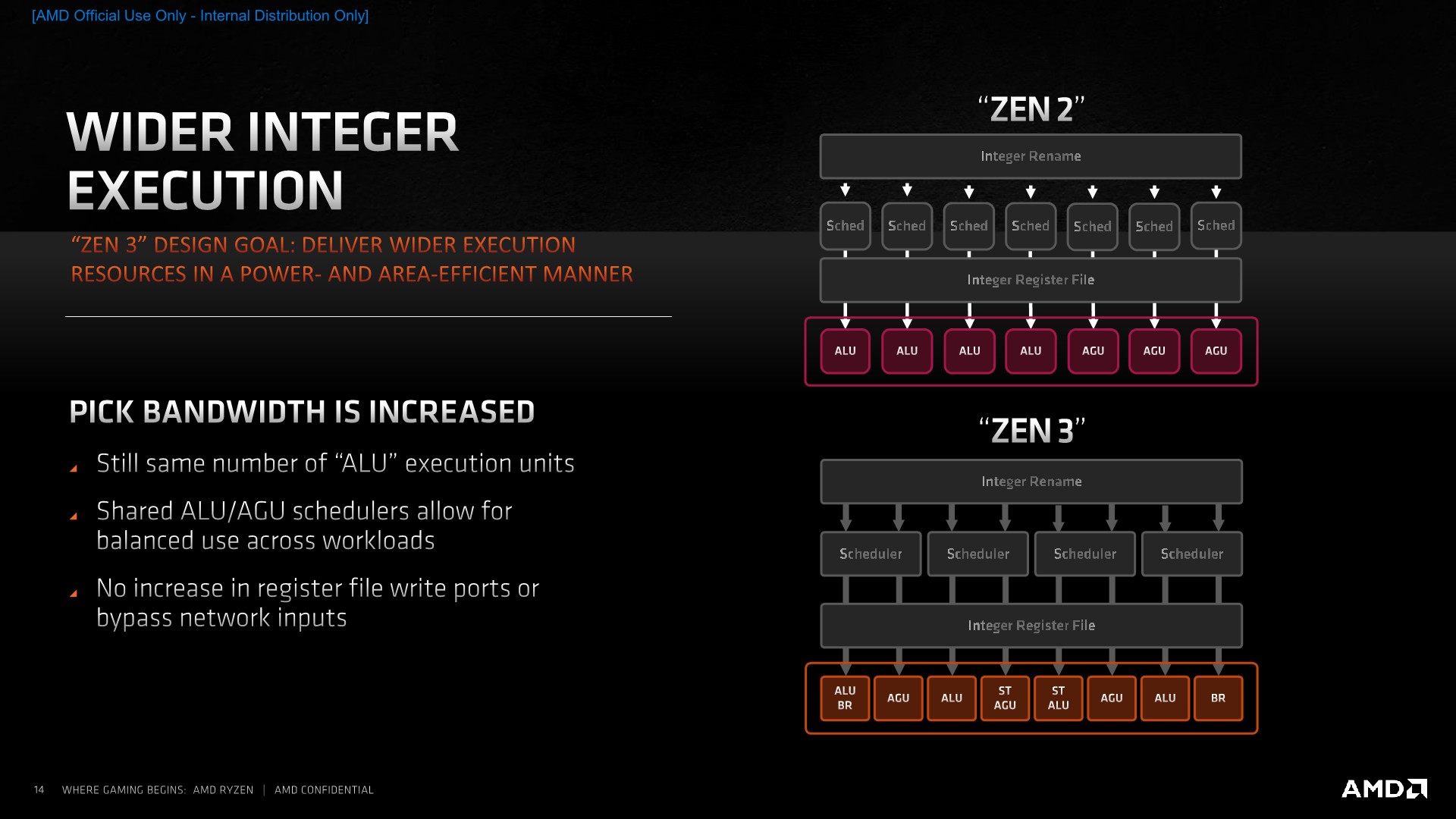

Starting off in more detail on the integer side, the one larger change in the design has been a move from individual schedulers for each of the execution units to a more consolidated design of four schedulers issuing into two execution units each. These new 24-entry schedulers should be more power efficient than having separate smaller schedulers, and the entry capacity also grows slightly from 92 to 96.

The physical register file has seen a slight increase from 180 entries to 192 entries, allowing for a slight increase in the integer OOO-window. The actual reorder-buffer of the core grows from 224 instructions to 256 instructions, which still seems relatively small in the context of competing microarchitectures such as Intel’s 352-entry ROB in Sunny Cove or Apple’s giant ROB.
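For a sense of scale, the structure sizes quoted above can be turned into relative growth figures with some simple arithmetic. The numbers are the ones given in the text; the dictionary layout and helper name are just for illustration.

```python
# (Zen 2, Zen 3) entry counts as quoted in the text.
structures = {
    "integer scheduler entries": (92, 96),
    "integer physical registers": (180, 192),
    "reorder buffer entries": (224, 256),
}


def growth_percent(old, new):
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100


for name, (old, new) in structures.items():
    print(f"{name}: {old} -> {new} ({growth_percent(old, new):.1f}% larger)")

# Four consolidated schedulers of 24 entries each give the new scheduler total.
zen3_scheduler_total = 4 * 24
print("scheduler total:", zen3_scheduler_total)

# Sunny Cove's 352-entry ROB relative to Zen 3's 256 entries.
sunny_cove_ratio = 352 / 256
print(f"Sunny Cove ROB is {sunny_cove_ratio:.3f}x the size")
```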

The overall integer execution unit issue width has grown from 7 to 10. The breakdown here is that while the core still has 4 ALUs, we’ve now seen one of the branch ports separate into its own dedicated unit, whilst the other unit still shares the same port as one of the ALUs, allowing for the unshared ALU to dedicate itself more to actual arithmetic instructions. Not depicted here is an additional store unit, as well as a third load unit, which is what brings us to 10 issue units in total on the integer side.

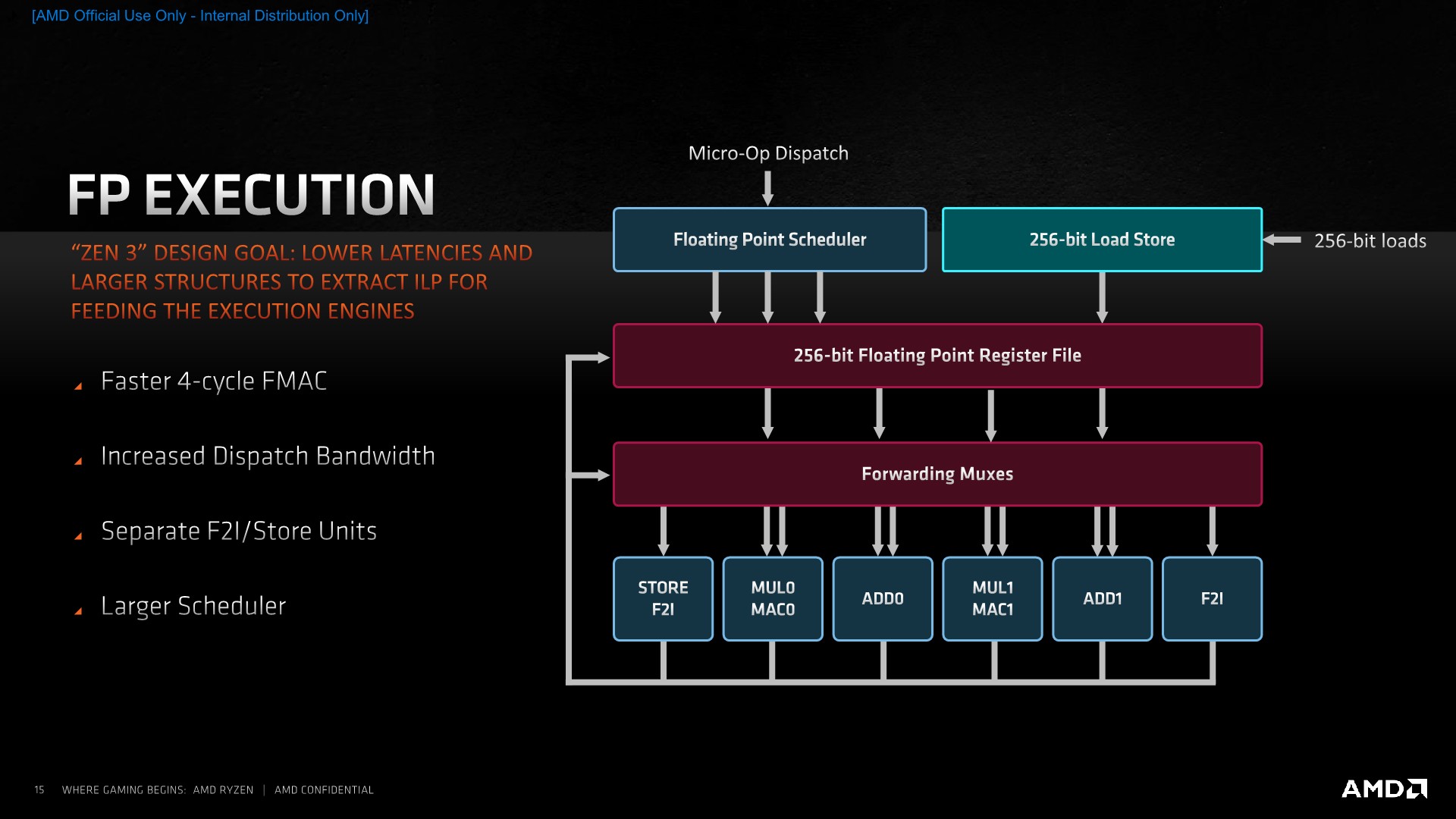

On the floating-point side, the dispatch width has been increased from 4 µOps to 6 µOps. Similar to the integer pipelines, AMD has opted to disaggregate some of the pipelines’ capabilities, such as moving the floating-point store and floating-point-to-integer conversion units into their own dedicated ports and units, so that the main execution pipelines are able to see higher utilisation with actual compute instructions.

One of the bigger improvements in the instruction latencies has been the shaving off of a cycle, from 5 to 4, for fused multiply-accumulate operations (FMAC). The scheduler on the FP side has also grown in order to handle more in-flight instructions while loads on the integer side are fetching the required operands, although AMD doesn’t disclose the exact increases here.
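Where the one-cycle FMAC latency reduction shows up depends on how the code is written. A first-order model is sketched below: a chain of dependent operations is bound by latency, while code with several independent accumulators is bound by throughput instead. The model ignores everything else in the core, and the `pipes=2` default and function name are assumptions for illustration rather than figures from AMD’s disclosure.

```python
import math


def chain_cycles(n_ops, latency, chains=1, pipes=2):
    """First-order cycle estimate for n_ops FMAs split across independent dependency chains."""
    latency_bound = math.ceil(n_ops / chains) * latency   # each chain waits on its previous result
    throughput_bound = math.ceil(n_ops / pipes)           # at most `pipes` FMAs start per cycle
    return max(latency_bound, throughput_bound)


# A single dependent chain, such as a naive running sum of products.
print(chain_cycles(1000, latency=5), "->", chain_cycles(1000, latency=4))

# The same work split over eight independent accumulators.
print(chain_cycles(1000, latency=5, chains=8), "->", chain_cycles(1000, latency=4, chains=8))
```

In this model the fully dependent chain speeds up in direct proportion to the latency change, while the eight-accumulator version moves from latency-bound to throughput-bound, so any further latency reduction would no longer help it.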

339 Comments


Orkiton - Thursday, November 5, 2020

Please Anandtech, up ryzen and up epic your servers, it's ages here to load a page...

PrionDX - Thursday, November 5, 2020

Mmm nice warm code bath, very relaxing

> Results for Cinebench R20 are not comparable to R15 or older, because both the scene being used is different, but also the updates in the code bath.

prophet001 - Thursday, November 5, 2020

How you gonna test FFXIV and not WoW.

-______________-

Mr Perfect - Thursday, November 5, 2020

Guys, could you please define acronyms the first time they are used in an article? Take page three for example, it touches on a BTB, TAGE and ITA, but only ITA is defined. I have no idea what a BTB or TAGE is. If they were defined on page two and I missed them, feel free to ignore me.

name99 - Saturday, November 7, 2020

BTB = Branch Target Buffer. Holds the addresses where a branch will go if it is taken.

TAGE = (tagged geometric something-or-other) name is not important; what matters is that it's currently the most accurate known branch predictor. Comes in a few variants, was 1st published around 2007 by Andre Seznec who has since gone on to show how it can be used for damn well everything! (Value prediction, indirect branch, prefetching, washing windows, you name it.)

Apple seems to have been first to implement, maybe as early as the A7, certainly very soon in the A series.

Now everybody uses it, but only in the last year or so has everyone really got on board. (Actually to be precise Seznec suggested that Intel is using TAGE based on their performance characteristics, but I don't think Intel have confirmed this. And ARM probably are but again unconfirmed. IBM is confirmed, and now AMD.)

Even if you know the basic algorithm for direction prediction is TAGE, that still doesn't make everyone equal. There are MANY extra aspects where everyone is different. The most obvious is how much storage is given to the branch predictor, but other less obvious aspects include

- how do you predict indirect branches? State of the art is ITTAGE, but that doesn't mean everyone is using it.

- how do you update your branch prediction storage (ie how fast do corrections get from the backend into the predicting mechanism at the front end)

- how do you implement your L2 storage and second-stage prediction?

- what extra "specialist" predictors do you have? (These are things like special-case predictors for loops.)

quantcon - Thursday, November 5, 2020

Yeah, it's actually kinda nuts, considering Intel convinced us years ago that we've hit the point of diminishing returns and there are hardly any IPC improvements to be had.

Spunjji - Sunday, November 8, 2020

Seems like they needed to believe that...

DanD85 - Thursday, November 5, 2020

This just goes on to prove yet again how crucial a healthy competition benefits everyone. Intel has been stagnating for more than a decade. Imagine where we would have been performance-wise if we had got this ~40% increase every 3 years. Intel only have themselves to blame. They are the chipzilla, the gatekeeper and the choker of the whole industry!

lmcd - Monday, November 9, 2020

40% is a bit disingenuous. Most of the gap in desktop is chiplet design. Notice how mobile, while AMD-favored, is still competitive? It's just a bad bet from Intel going with stacked packaging before same-package flat chiplet, and the packaging techniques for both are very new. There aren't 40% improvements on the table going forward, Bulldozer and Piledriver were both just awful and AMD didn't ever release full desktop Steamroller or Excavator (which were fine, not great). Zen 1 left a lot on the table for such a big increase as well.

GeoffreyA - Tuesday, November 10, 2020

If you place Zen at Haswell's level, it took AMD three years to reach Zen 3 (from the consumer's point of view). On Intel's side, it's taken six years to go from Haswell to Sunny Cove.

Even in the early tick-tock days, when more massive changes could be put in, it was usually two years apart for microarchitecture: Core (2006), Nehalem (2008), Sandy Bridge (2010/11), etc.

Whether there's a lot more juice in the tank for Zen remains to be seen. In my opinion I think there is: Z3's out-of-order structures are still quite conservative, compared to Sunny Cove, which it beats, so there's possibility of more widening there. I also think their split scheduler design, inherited from the Athlon, will allow them to scale more easily. Of course, I know the engineers in Haifa must be cooking up something potent too. Either way, exciting stuff.