AMD EPYC Milan Review Part 2: Testing 8 to 64 Cores in a Production Platform

by Andrei Frumusanu on June 25, 2021 9:30 AM EST

Test Bed and Setup - Compiler Options

For the rest of our performance testing, we’re disclosing the details of the various test setups:

AMD - Dual EPYC 7763 / 75F3 / 7443 / 7343 / 72F3

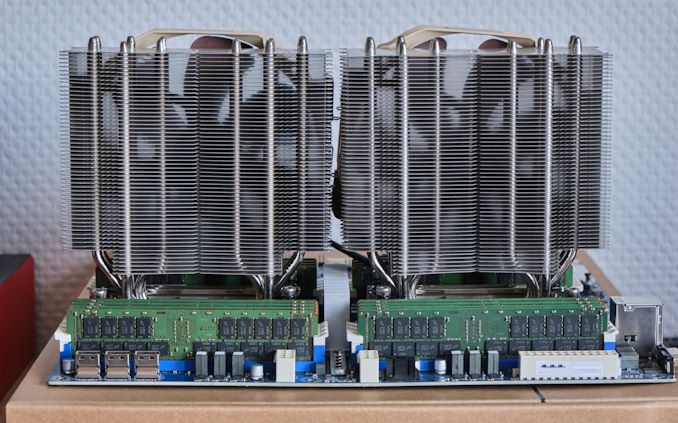

For today’s review and its new performance figures, we’re using GIGABYTE’s new MZ72-HB0 rev. 3.0 board as the primary test platform for the EPYC 7763, 75F3, 7443, 7343 and 72F3. The system is running under full default settings, meaning performance or power determinism as configured by AMD in their default SKU fuse settings.

| Component | Configuration |
|---|---|
| CPU | 2x AMD EPYC 7763 (2.45-3.500 GHz, 64c, 256 MB L3, 280W) / 2x AMD EPYC 75F3 (2.95-4.000 GHz, 32c, 256 MB L3, 280W) / 2x AMD EPYC 7443 (2.85-4.000 GHz, 24c, 128 MB L3, 200W) / 2x AMD EPYC 7343 (3.20-3.900 GHz, 16c, 128 MB L3, 190W) / 2x AMD EPYC 72F3 (3.70-4.100 GHz, 8c, 256 MB L3, 180W) |
| RAM | 512 GB (16x32 GB) Micron DDR4-3200 |
| Internal Disks | Crucial MX300 1TB |
| Motherboard | GIGABYTE MZ72-HB0 (rev. 3.0) |
| PSU | EVGA 1600 T2 (1600W) |

Software-wise, we ran Ubuntu 20.10 images with the latest release 5.11 Linux kernel. Performance settings on both the OS and the BIOS were left at their defaults, including a regular Schedutil-based frequency governor and the CPUs running in performance determinism mode at their respective default TDPs unless otherwise indicated.
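For readers who want to confirm the same OS default on their own machine, the following is a minimal sketch and not part of our test harness. It assumes the standard Linux cpufreq sysfs layout under `/sys/devices/system/cpu`; the helper name `governor_counts` is our own illustrative choice.

```python
from collections import Counter
from pathlib import Path


def governor_counts(sysfs_root="/sys/devices/system/cpu"):
    """Count logical CPUs per active cpufreq governor.

    Returns an empty Counter when the cpufreq files are not exposed
    (for example inside some virtual machines or containers).
    """
    counts = Counter()
    pattern = "cpu[0-9]*/cpufreq/scaling_governor"
    for gov_file in Path(sysfs_root).glob(pattern):
        counts[gov_file.read_text().strip()] += 1
    return counts


if __name__ == "__main__":
    # On a default Ubuntu 20.10 install we would expect every CPU to
    # report the same governor; the output depends on the machine.
    print(dict(governor_counts()))
```

A mixed result, with some CPUs on one governor and some on another, would suggest the system is no longer on default settings.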

AMD - Dual EPYC 7713 / 7662

Due to not having access to the 7713 for this review, we’re picking up the older test numbers of the chip on AMD’s Daytona platform. We also tested the Rome EPYC 7662; the latter didn’t exhibit any issues in terms of power behaviour.

| Component | Configuration |
|---|---|
| CPU | 2x AMD EPYC 7713 (2.00-3.675 GHz, 64c, 256 MB L3, 225W) / 2x AMD EPYC 7662 (2.00-3.300 GHz, 64c, 256 MB L3, 225W) |
| RAM | 512 GB (16x32 GB) Micron DDR4-3200 |
| Internal Disks | Varying |
| Motherboard | Daytona reference board: S5BQ |
| PSU | PWS-1200 |

AMD - Dual EPYC 7742

Our local AMD EPYC 7742 system, due to the aforementioned issues with the Daytona hardware, is running on a SuperMicro H11DSI Rev 2.0.

| Component | Configuration |
|---|---|
| CPU | 2x AMD EPYC 7742 (2.25-3.4 GHz, 64c, 256 MB L3, 225W) |
| RAM | 512 GB (16x32 GB) Micron DDR4-3200 |
| Internal Disks | Crucial MX300 1TB |
| Motherboard | SuperMicro H11DSI0 |
| PSU | EVGA 1600 T2 (1600W) |

As an operating system we’re using Ubuntu 20.10 with no further optimisations. In terms of BIOS settings we’re using complete defaults, including retaining the default 225W TDP of the EPYC 7742s, as well as leaving further CPU configurables on auto, except for NPS settings, where we explicitly state the configuration in the results.

The system has all relevant security mitigations activated against speculative store bypass and Spectre variants.

Intel - Dual Xeon Platinum 8380

For our new Ice Lake test system based on the Whitley platform, we’re using Intel’s SDP (Software Development Platform) 2SW3SIL4Q, featuring a 2-socket Intel server board (Coyote Pass).

The system is an airflow optimised 2U rack unit with otherwise little fanfare.

Our review setup solely includes the new Intel Xeon 8380 with 40 cores, 2.3GHz base clock, 3.0GHz all-core boost, and 3.4GHz peak single core boost. What’s unusual about this part, as noted in the intro, is that it’s running at a default 270W TDP, which is above what we’ve seen from previous generation non-specialised Intel SKUs.

| Component | Configuration |
|---|---|
| CPU | 2x Intel Xeon Platinum 8380 (2.3-3.4 GHz, 40c, 60 MB L3, 270W) |
| RAM | 512 GB (16x32 GB) SK Hynix DDR4-3200 |
| Internal Disks | Intel SSD P5510 7.68TB |
| Motherboard | Intel Coyote Pass (Server System S2W3SIL4Q) |
| PSU | 2x Platinum 2100W |

The system came with several SSDs including Optane SSD P5800X’s, however we ran our test suite on the P5510 – not that we’re I/O affected in our current benchmarks anyhow.

As per Intel guidance, we’re using the latest BIOS available with the 270 release microcode update.

Intel - Dual Xeon Platinum 8280

For the older Cascade Lake Intel system we’re also using a test-bench setup with the same SSD and OS image as on the EPYC 7742 system.

Because the Xeons only have 6-channel memory, their maximum capacity is limited to 384GB of the same Micron memory, running at a default 2933MHz to remain in-spec with the processor’s capabilities.

| Component | Configuration |
|---|---|
| CPU | 2x Intel Xeon Platinum 8280 (2.7-4.0 GHz, 28c, 38.5 MB L3, 205W) |
| RAM | 384 GB (12x32 GB) Micron DDR4-3200 (running at 2933MHz) |
| Internal Disks | Crucial MX300 1TB |
| Motherboard | ASRock EP2C621D12 WS |
| PSU | EVGA 1600 T2 (1600W) |

The Xeon system was similarly run on BIOS defaults on an ASRock EP2C621D12 WS with the latest firmware available.
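The 384GB versus 512GB difference follows directly from channel count. As a worked sketch, assuming one 32GB DIMM per channel (which matches the 12 and 16 DIMM counts in the tables) and the standard 64-bit DDR4 channel width, the capacity and theoretical peak bandwidth work out as below. The function names are illustrative, and the bandwidth figures are theoretical maxima, not measured results.

```python
def populated_capacity_gb(sockets, channels_per_socket, dimm_gb, dimms_per_channel=1):
    """Total installed memory in GB for a given DIMM population."""
    return sockets * channels_per_socket * dimms_per_channel * dimm_gb


def peak_bandwidth_gbs(sockets, channels_per_socket, mega_transfers_per_s, bus_bytes=8):
    """Theoretical peak DRAM bandwidth in GB/s (10^9 bytes per second).

    A DDR4 channel is 64 bits (8 bytes) wide, so each transfer moves
    8 bytes per channel.
    """
    return sockets * channels_per_socket * mega_transfers_per_s * bus_bytes / 1000


if __name__ == "__main__":
    # Dual Xeon 8280: 6 channels per socket at DDR4-2933
    print(populated_capacity_gb(2, 6, 32), peak_bandwidth_gbs(2, 6, 2933))
    # Dual EPYC: 8 channels per socket at DDR4-3200
    print(populated_capacity_gb(2, 8, 32), peak_bandwidth_gbs(2, 8, 3200))
```

On these assumptions the 6-channel platform gives up a quarter of the capacity and, at the lower 2933MHz data rate, a little more than that in theoretical bandwidth.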

Ampere "Mount Jade" - Dual Altra Q80-33

For the Ampere Altra system, we’re using the provided Mount Jade server as configured by Ampere. The system features 2 Altra Q80-33 processors within the Mount Jade DVT motherboard from Ampere.

In terms of memory, we’re using the bundled 16 DIMMs of 32GB of Samsung DDR4-3200 for a total of 512GB, 256GB per socket.

| Component | Configuration |
|---|---|
| CPU | 2x Ampere Altra Q80-33 (3.3 GHz, 80c, 32 MB L3, 250W) |
| RAM | 512 GB (16x32 GB) Samsung DDR4-3200 |
| Internal Disks | Samsung MZ-QLB960NE 960GB / Samsung MZ-1LB960NE 960GB |
| Motherboard | Mount Jade DVT Reference Motherboard |
| PSU | 2000W (94%) |

The system came preinstalled with CentOS 8 and we continued usage of that OS. It’s to be noted that the server is naturally Arm SBSA compatible and thus you can run any kind of Linux distribution on it.

The only other note to make of the system is that the OS is running with 64KB pages rather than the usual 4KB pages – this can be seen either as a testing discrepancy or as an advantage on the part of the Arm system, given that the next page size step for x86 systems is 2MB – which isn’t feasible for general use-case testing and is something deployments would have to decide to explicitly enable.
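To illustrate why the page size matters, here is a small sketch that reads the running system's page size and computes how much memory a TLB of a given size can map. The 1024-entry TLB is a hypothetical figure chosen for the arithmetic, not the actual TLB capacity of any CPU tested here, and `tlb_reach_bytes` is our own helper name.

```python
import os


def page_size_bytes():
    """Base page size of the running kernel, in bytes (Unix only)."""
    return os.sysconf("SC_PAGE_SIZE")


def tlb_reach_bytes(entries, page_bytes):
    """Memory covered by a TLB holding `entries` translations."""
    return entries * page_bytes


if __name__ == "__main__":
    KIB = 1024
    MIB = 1024 * KIB
    hypothetical_entries = 1024  # assumption for illustration only
    print("system page size:", page_size_bytes(), "bytes")
    for label, size in (("4KB", 4 * KIB), ("64KB", 64 * KIB), ("2MB", 2 * MIB)):
        reach_mib = tlb_reach_bytes(hypothetical_entries, size) // MIB
        print(label, "pages ->", reach_mib, "MiB of TLB reach")
```

For the same number of entries, 64KB pages cover 16 times the memory of 4KB pages, which is the source of the potential advantage described above.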

The system has all relevant security mitigations activated, including SSBS (Speculative Store Bypass Safe) against Spectre variants.

The system has all relevant security mitigations activated against the various vulnerabilities.
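The mitigation state of a Linux system can be inspected through the kernel's sysfs interface. The sketch below assumes the standard `/sys/devices/system/cpu/vulnerabilities` directory, where each file reports a status string such as `Not affected`, `Vulnerable` or `Mitigation: ...`. The helper names are illustrative and this is not the exact procedure we used.

```python
from pathlib import Path


def mitigation_status(root="/sys/devices/system/cpu/vulnerabilities"):
    """Map each reported vulnerability name to the kernel's status text.

    Returns an empty dict if the directory is not present.
    """
    base = Path(root)
    if not base.is_dir():
        return {}
    return {
        entry.name: entry.read_text().strip()
        for entry in sorted(base.iterdir())
        if entry.is_file()
    }


def unmitigated(status):
    """Names whose status text starts with 'Vulnerable'."""
    return [name for name, text in status.items() if text.startswith("Vulnerable")]


if __name__ == "__main__":
    status = mitigation_status()
    for name, text in status.items():
        print(name + ": " + text)
    print("unmitigated:", unmitigated(status))
```

An empty `unmitigated` list corresponds to what we mean by having all relevant mitigations activated, as far as the kernel reports them.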

Compiler Setup

For compiled tests, we’re using the release version of GCC 10.2. The toolchain was compiled from scratch on both the x86 systems as well as the Altra system. We’re using shared binaries with the system’s libc libraries.

58 Comments

Andrei Frumusanu - Friday, June 25, 2021

Those results don't contradict anything I'm saying. Given a normalised throughput performance of the socket, for example here where the 16- and 24-core Milan equals or beats the 28-core ICL-SP in many workloads, the Xeon still handily beats those Milan parts in transactional workloads. The 40-core Xeon has 77% of the jbb performance of the 64-core EPYC even though in the int suite it's only at 60%. Those particular STH results work out because the 7543P is $1000 cheaper than the 7543, but for the SKUs we had in today, Intel still is on equal footing in terms of DB performance value.

Cllaymenn - Friday, June 25, 2021

Whatever one says about some insignificant single anomaly in some DB test... The fact is that ANY company, from small to large, any corporation needing power, any data centre, hosting, cloud computing, research institutes, universities, will choose EPYC on ZEN3 over even the 8320, because it will allow them to compute faster, make more money per month, and less stress for administrators when there are higher network loads, clouds because AMD will "grind" / process faster the requests/needs of thousands of thousands of clients simultaneously using servers, because in addition to more compute power has more bandwidth AMD platform especially with 256 threads and 8 channel memory and fast Infinity Fabric and many of the ZEN3 optimizations... and is more flexible (harder to clog or jam Zen2/Zen3 from what I've noticed). These processors grind through anything you throw at them without any breathlessness.

schujj07 - Friday, June 25, 2021

While Spec is an "industry standard" benchmark, vendors spend hours optimizing for their servers to look better. Therefore as an administrator and designer of a high performance data-center I personally look at Spec results with a grain of salt. For example, Super Micro submitted data for 2 of their A+ AS-1124US-TNRP with dual 75F3 on April 26, 2021. One system has max-jOPS of 276,317 and critical-jOPS of 116,628. The other has a score of 211,179 max-jOPS & 191,813 critical-jOPS. They also have 2 X12DPG-QT6 with dual 8380's and one has scores of 272,500 for max-jOPS & 147,409 for critical-jOPS. The other has scores of 258,368 for max-jOPS & 201,334 for critical-jOPS. In these cases the 75F3 with fewer cores and threads ends up in a virtual tie with the 8380 in the transactional workload for one of the results, but the second result in the database is 22-30% lower based on comparison systems.

https://www.spec.org/jbb2015/results/res2021q2/

Depending on the results you want, the 75F3 is a much better value or of equal value to the 8380. I think now you can see why I take Spec with a grain of salt on their results. Globally saying that Milan has issues in transactional DBs based solely on Spec results isn't a good idea. While I know it is the benchmarks that you choose as they are "industry standard," I think it would be worthwhile to invest in creating an actual real world scenario DB benchmark that doesn't use Spec.

Andrei Frumusanu - Friday, June 25, 2021

> One system has max-jOPS of 276,317 and critical-jOPS of 116,628. The other has a score of 211,179 max-jOPS & 191,813 critical-jOPS.

Which generally makes submitted scores not very useful. We're using apples-to-apples runs here, and while you can argue they're not as optimised, they're comparable to each other.

And I also never said that Milan has *issues*, I'm simply saying that compared to other workloads where there's a massive performance lead for AMD, Intel is still competitive, a view that falls in line with many industry customers.
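As a worked check of the figures quoted earlier in this thread (the 40-core Xeon at 77% of the 64-core EPYC's jbb performance and 60% of its int suite performance), the sketch below normalises those ratios by core count. Dividing by core count alone is a deliberate simplification that ignores clocks, SMT and memory configuration, and `per_core_ratio` is an illustrative name.

```python
def per_core_ratio(throughput_ratio, cores_a, cores_b):
    """Per-core performance of system A relative to system B.

    `throughput_ratio` is A's socket throughput divided by B's.
    """
    return throughput_ratio / (cores_a / cores_b)


if __name__ == "__main__":
    xeon_cores, epyc_cores = 40, 64
    # 40/64 = 62.5% of the cores
    print("core ratio:", xeon_cores / epyc_cores)
    # jbb: 0.77 / 0.625, i.e. above parity per core
    print("jbb per-core:", round(per_core_ratio(0.77, xeon_cores, epyc_cores), 3))
    # int suite: 0.60 / 0.625, i.e. slightly below parity per core
    print("int per-core:", round(per_core_ratio(0.60, xeon_cores, epyc_cores), 3))
```

With 62.5% of the cores, a 77% throughput ratio puts the Xeon ahead per core in the transactional test, while 60% puts it slightly behind per core in the int suite.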

Cllaymenn - Friday, June 25, 2021

We know that Intel watches the Anandtech website, and that you are aware of this, they also send you expensive hardware for testing, and hope that the results will be more favourable to their new development (e.g. 8320) which they have been working on for a long time. I think it would be unpleasant and uncomfortable to criticise their new products harshly if I were writing a review, but I would rather gently point out which is good at what, which is leading and which still needs to catch up. Because of the awareness of the efforts of hundreds or even thousands of Intel engineers I would not have the heart to criticize their new product, or sharply, clearly say who wins everything and the rest can hide. I know that even the engineers, designers and CPU architects like to read about their new baby after work, and they go to sites like Anandtech with enthusiasm and quiet hope that they have made a better impression on the reviewer and readers, than their previous older products, that we have noticed a significant difference, jump in performance and that it has been appreciated and maybe there will be some nice, positive comments, feedback. It probably gives them a lot of happiness to see people out there enjoying the results of their hard work and another success for the company. Because the 8320 was a huge challenge for these people, it's a brand new fresh 10nm SuperFin technology and a mega monolithic 40 core big piece of silicon. And it works! It may not catch up with the 64 core competition but it's still a huge step forward for them, reaching a significant milestone. Once they mastered this SuperFin 10nm technology to create monolithic 40 core chips they now have a lot of experience and know how to do it even better, especially in a modular architecture where the silicon pieces will be smaller. 
Many of the threads stem from the creation of the Xeon 8320, so I understand the reviewer's attitude of appreciating the level of technology, sophistication, and performance of their new design. (sorry for some grammatical errors, I'm still improving)

bwhitty - Friday, June 25, 2021

Can't tell if you're very subtly implying Andrei is coloring the results in favor of Intel? Perhaps you're not, but anyways it doesn't seem he is. Other than that, I agree that

Small correction: Ice Lake is on 10nm+, not Super Fin. Tiger Lake is 10SF (10++), and Sapphire Rapids will be on 10 Enhanced Super Fin, so 10nm+++.

Tangent: I think that Ice Lake being on the non-SF process actually bodes extremely well for Sapphire Rapids because Ice Lake even in laptops is just not that good from a mfg perspective. It's basically Intel 10nm's first shippable and salvaged process. Super Fin appears far, far better in Tiger Lake versus Ice Lake, and so an improvement on top of that thusly should perhaps finally bring Intel's mfg in line with TSMC 7nm. That gives Sapphire Rapids a good place to be in the first half of 2022 until Genoa rolls out on TSMC 5nm in late 2022 / early 2023.

Cllaymenn - Friday, June 25, 2021

bwhitty. I did not mean favoring Intel products, but a more subdued way of speaking about their performance in relation to ZEN3, a way other than the popular Linus on YT, which is sharply pressing Intel with each premiere of new AMD products.

As for Super Fin, I read about it recently in one of the popular IT websites. I typed in google and found a quote:

"Intel Xeon Scalable Ice Lake-SP processors were announced some time ago, but we had to wait a while for their premiere. We finally got it - we got to know the technical details of the units, as well as their performance results. Intel Xeon Scalable units (Ice Lake-SP) use the new Sunny Cove microarchitecture, which is expected to translate into up to a 20% increase in IPC over the previous generation Skylake. The chipsets are manufactured using a new 10nm SuperFin process."

As I checked with a few other sources, I now know that this site was wrong about the 83xx series.

Ian Cutress - Friday, June 25, 2021

On 10nm naming, Intel has changed it twice. There are no + or ++ any more.

https://www.anandtech.com/show/16107/what-products...

bwhitty - Monday, June 28, 2021

Oh yes, Dr Cutress, I know all these Intel mfg node specifics purely from Anandtech’s breakdowns

outsideloop - Friday, June 25, 2021

Far, far better? Tiger Lake H still sucks power like an inebriated Cleopatra.