Intel Alder Lake DDR5 Memory Scaling Analysis With G.Skill Trident Z5

by Gavin Bonshor on December 23, 2021 9:00 AM EST

G.Skill Trident Z5 Memory (F5-6000U3636E16G)

2x16GB of DDR5-6000 CL36

For the purposes of this article and to investigate scaling performance on Alder Lake, G.Skill supplied us with a kit of its latest Trident Z5 DDR5-6000 CL36 memory. The G.Skill Trident Z series has been its flagship model for many years, focusing on performance while blending in a premium and clean-cut aesthetic. G.Skill offers two versions of its Trident Z5 memory: the Trident Z5 without RGB LEDs, which is the kit we are taking a look at today, and the Trident Z5 RGB, which includes an RGB LED light bar along the top of each memory stick.

Focusing on the non-RGB variants, the G.Skill Trident Z5 is available in various 32 GB (2x16) configurations starting at DDR5-5600 CL36 and ranging up to DDR5-6000 CL36. G.Skill has also unveiled a Trident Z5 RGB DDR5-7000 CL40 kit, which is extremely fast, and when it is released, it will likely be one of the most expensive DDR5 memory kits on the market, if not the most expensive.

Looking at the design, the G.Skill Trident Z5 DDR5 memory uses a 42 mm tall (at the highest point) heatsink, with G.Skill offering a two-tone contrasting matte black kit, as well as a black and metallic silver kit. The kit supplied to us by G.Skill uses two-tone matte black heatsinks. The heatsinks are constructed from aluminum, and G.Skill states that it uses a newer and more 'streamlined' design. They are quite pointy, and as with previous G.Skill memory kits, they can feel sharp when installing them.

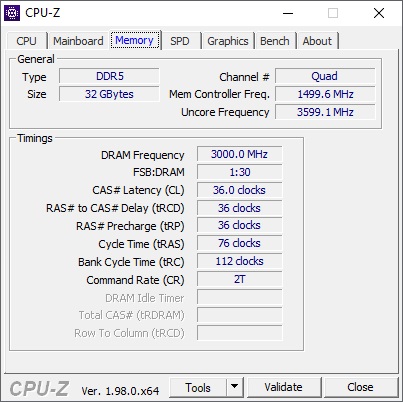

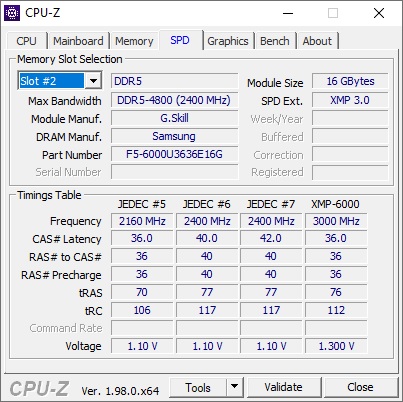

Looking at what CPU-Z is reporting, we can see that the XMP 3.0 profile matches up with the advertised specifications, with this particular kit running at DDR5-6000 with latency timings of 36-36-36-76. The operating voltage for the kit is 1.3 V, which is a 0.2 V bump over the JEDEC SPD rating of this kit, which is DDR5-4800 at 1.1 V.
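To put the CL36 figure in context, CAS latency in clock cycles can be converted into nanoseconds. The snippet below is a minimal sketch of that arithmetic, not something taken from G.Skill or CPU-Z; the function name `cas_latency_ns` is made up for illustration, and it assumes the usual DDR relationship where the memory clock runs at half the advertised data rate.

```python
def cas_latency_ns(data_rate_mts: int, cas_cycles: int) -> float:
    """Convert CAS latency from clock cycles to nanoseconds.

    DDR memory transfers data twice per clock, so one clock period
    is 2000 / data_rate_mts nanoseconds (assumption stated above).
    """
    return cas_cycles * 2000 / data_rate_mts


# Kits mentioned in this article: (label, data rate in MT/s, CAS latency)
kits = [
    ("DDR5-5600 CL36", 5600, 36),
    ("DDR5-6000 CL36", 6000, 36),
    ("DDR5-7000 CL40", 7000, 40),
]

for label, rate, cl in kits:
    print(f"{label}: {cas_latency_ns(rate, cl):.2f} ns")
```

By this measure, the DDR5-6000 CL36 kit on test works out to 12 ns, and a higher CL number at a higher data rate does not necessarily mean a longer absolute latency.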

Checking the more intricate details of the G.Skill Trident Z5 DDR5-6000 memory, CPU-Z reports that the kit is using Samsung ICs, with a 1Rx8 array of 16 Gb ICs employed on each module. While CPU-Z doesn't explicitly report the rank count, we reached out to G.Skill, which informed us that this kit uses a single-rank design.
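The 1Rx8 layout and the 16 Gb IC density can be cross-checked against the 16 GB module size. The following is a rough sketch of that calculation; `module_capacity_gb` is a hypothetical helper, and it assumes a non-ECC module with 64 data bits in total, so that x8 devices need eight chips per rank.

```python
def module_capacity_gb(ranks: int, device_width_bits: int,
                       device_density_gbit: int,
                       data_bus_bits: int = 64) -> float:
    """Estimate module capacity in GB from its rank/device organization.

    data_bus_bits=64 is an assumption for a non-ECC module.
    """
    chips_per_rank = data_bus_bits // device_width_bits
    total_gbit = ranks * chips_per_rank * device_density_gbit
    return total_gbit / 8  # 8 bits per byte


# 1Rx8 with 16 Gb ICs, as reported for this kit
print(module_capacity_gb(ranks=1, device_width_bits=8, device_density_gbit=16))
```

One rank of eight 16 Gb devices gives 128 Gb, or 16 GB per module, which lines up with the 2x16GB kit configuration.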

82 Comments

TheinsanegamerN - Tuesday, December 28, 2021

What is the hold up on video card reviews? I know there was that cali fire last year, but that was over a year ago now.

gagegfg - Thursday, December 30, 2021

The chip shortage is global and not limited to GPUs. A hardware tester like you should not have problems acquiring a GPU to do these tests; otherwise, you have a serious public relations problem.

Returning to the point of my criticism: those who understand why CPU scaling is tested in games know that it is not only about FPS, but also about being able to evaluate longevity when upgrading to future GPUs, or which CPU will become a bottleneck sooner than another. Today all CPUs can run games at 4K, and that's not news to anyone.

In short, if you cannot get a decent GPU to test a top-of-the-range CPU and its limitations with different hardware combinations, try to eliminate the GPU bottleneck with 720p / 1080p resolutions or by lowering the scene detail. That is the correct way to test the other components rather than the GPU itself.

This criticism is constructive, and is not intended to generate repudiation.

TheinsanegamerN - Monday, January 3, 2022

Erm, you do know that other reviewers have been able to get ahold of GPUs for testing, right? If you don't have the budget for the stuff you need to do your job, can't get the stuff you need to do your job, and find excuses to not do the reviews your viewerbase wants to see, that says to a lot of people that AnandTech is being mismanaged into the ground.

Come to think of it, these same excuses were used when the 3080 was never reviewed, alongside "well we have one guy who does it and he lives near the fires in cali". That was a year and a half ago.

Perhaps AnandTech presenting excuses instead of articles is why you can't get companies to send you hardware? Just a thought.

Azix - Monday, January 10, 2022

I can understand manufacturers being less likely to send out a GPU if they aren't guaranteed publicity. The key is that he said just for testing, not necessarily for a review. Most other reviewers are given cards for marketing purposes.

zheega - Thursday, December 23, 2021

I didn't even notice that at first, I just assumed that they would get rid of the GPU bottleneck. How amazingly weird.

thestryker - Thursday, December 23, 2021

The vast majority of people play at the highest playable resolution for the hardware they have, which means they're GPU bound no matter what their GPU is. The frame rates in the review are perfectly playable and indicate the amount of variation one could expect for a mostly GPU bound situation. None of the titles are esports/competitive where you'd need to be maxing out frame rate, so even if they were using a 3090 it'd be a pointless reflection for that.

So while the metrics aren't perfect from a scaling under ideal circumstances perspective, it's perfectly fine for practicality.

haukionkannel - Friday, December 24, 2021

True. I don't have the latest PC hardware and still play at 1440p at highest settings. So I can confirm that is the way to test to see what we see in real world situations...

Ooga Booga - Tuesday, December 28, 2021

Because they haven't done meaningful GPU stuff in years, it all goes to Tom's Hardware. Eventually the card they use will be 10 years old if this site is even still around.

TimeGoddess - Thursday, December 23, 2021

If you're gonna use a GTX 1080, at least try and do the gaming benchmarks in 720p so that there is actually a CPU bottleneck.

Ian Cutress - Thursday, December 23, 2021

You know how many people complain when I run our CPU reviews at 720p resolutions? 'You're only doing that to show a difference'.