Micron's GDDR7 Chip Smiles for the Camera as Micron Aims to Seize Larger Share of HBM Market

by Anton Shilov on June 7, 2024 7:00 AM EST - Posted in Memory, Micron, Trade Shows, GDDR7, HBM3E, Computex 2024

UPDATE 6/12: Micron notified us that it expects its HBM market share to rise to mid-20% in the middle of calendar 2025, not in the middle of fiscal 2025.

For Computex week, Micron was at the show in force to talk about its latest products across the memory spectrum. The biggest news for the memory company was that it has kicked off sampling of its next-gen GDDR7 memory, which was being demoed on the show floor and is expected to start showing up in finished products later this year. Meanwhile, the company is also eyeing a much larger piece of the other pillar of the high-performance memory market, High Bandwidth Memory, with aims of capturing around 25% of the premium HBM market.

GDDR7 to Hit the Market Later This Year

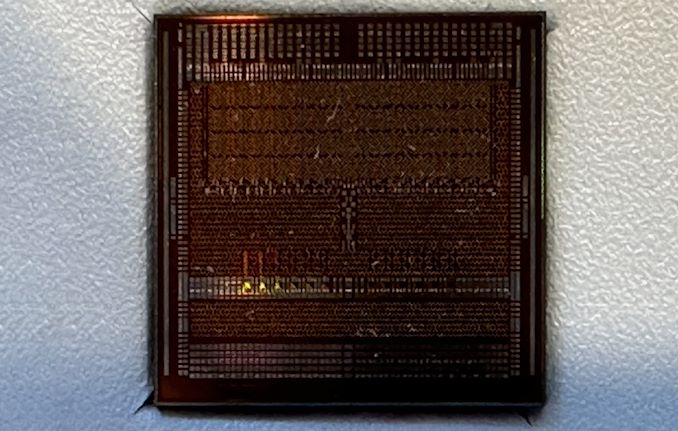

Micron's first GDDR7 chip is a 16 Gbit memory device with a 32 GT/sec (32 Gbps/pin) transfer rate, which is significantly faster than contemporary GDDR6/GDDR6X. As outlined with JEDEC's announcement of GDDR7 earlier this year, the latest iteration of the high-performance memory technology is slated to improve on both memory bandwidth and capacity, with bandwidths starting at 32 GT/sec and potentially climbing another 50% higher to 48 GT/sec by the time the technology reaches its apex. And while the first chips are starting off at the same 2 GByte (16 Gbit) capacity as today's GDDR6(X) chips, the standard itself defines capacities as high as 64 Gbit.
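To put those per-pin rates in perspective, here is a back-of-the-envelope sketch that converts them into peak bandwidth. It assumes the standard 32-bit-wide GDDR chip interface; the 256-bit card bus is a purely hypothetical configuration chosen for illustration, and the function and constant names are ours, not Micron's or JEDEC's.

```python
def peak_bandwidth_gbytes(rate_gbps_per_pin: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: per-pin rate (Gbit/s) times data pins, divided by 8 bits per byte."""
    return rate_gbps_per_pin * bus_width_bits / 8


CHIP_WIDTH_BITS = 32         # one GDDR chip exposes a 32-bit data interface
ASSUMED_CARD_BUS_BITS = 256  # hypothetical card: eight 32-bit chips side by side

for rate in (32, 48):  # launch speed and the projected ceiling, in GT/sec
    chip = peak_bandwidth_gbytes(rate, CHIP_WIDTH_BITS)
    card = peak_bandwidth_gbytes(rate, ASSUMED_CARD_BUS_BITS)
    print(f"{rate} GT/sec: {chip:.0f} GB/s per chip, "
          f"{card:.0f} GB/s on a {ASSUMED_CARD_BUS_BITS}-bit bus")
```

Under these assumptions a single 32 GT/sec chip peaks at 128 GB/s, and the same chip at 48 GT/sec would reach 192 GB/s. These are theoretical peaks that ignore protocol overhead.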

Of particular note, GDDR7 brings with it the switch to PAM3 (3-state) signal encoding, moving from the industry's long-held NRZ (2-state) signaling. As Micron was responsible for the bespoke GDDR6X technology, which was the first major DRAM spec to use PAM signaling (in its case, 4-state PAM4), Micron reckons they have a leg-up with GDDR7 development, as they're already familiar with working with PAM.
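A rough sketch of why the signaling change matters: more voltage levels per symbol means fewer symbols per second for the same data rate. The figures below assume PAM3 carries 3 bits over 2 symbols (1.5 bits per symbol), which is how GDDR7's encoding is commonly described. The lookup table at the end is a toy mapping for illustration only, not the mapping defined by the JEDEC standard.

```python
import math
from itertools import product

# (voltage levels, bits carried per symbol in practice)
SCHEMES = {
    "NRZ": (2, 1.0),   # GDDR6
    "PAM3": (3, 1.5),  # GDDR7, assuming 3 bits packed into 2 symbols
    "PAM4": (4, 2.0),  # GDDR6X
}


def symbol_rate_gbaud(data_rate_gbps: float, bits_per_symbol: float) -> float:
    """Symbols per second (in GBaud) needed on one pin to sustain the given data rate."""
    return data_rate_gbps / bits_per_symbol


for name, (levels, bits) in SCHEMES.items():
    ceiling = math.log2(levels)  # information-theoretic maximum bits per symbol
    rate = symbol_rate_gbaud(32, bits)
    print(f"{name}: {levels} levels, {bits} bits/symbol "
          f"(ceiling {ceiling:.2f}), {rate:.2f} GBaud for 32 Gbps/pin")

# Two ternary symbols give 3 * 3 = 9 combinations, enough for the 8 values of 3 bits.
symbol_pairs = list(product((-1, 0, 1), repeat=2))
# Toy mapping (NOT the JEDEC table): pair each 3-bit value with a distinct symbol pair.
toy_table = dict(zip(product((0, 1), repeat=3), symbol_pairs))
```

The trade-off is that more levels leave less voltage margin between adjacent levels, which is one reason PAM3 sits between NRZ and PAM4 rather than simply replacing both.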

The GDDR7 transition also brings with it a change in how chips are organized, with the standard 32-bit wide chip now split up into four 8-bit sub-channels. And, like most other contemporary memory standards, GDDR7 is adding on-die ECC support to hold the line on chip reliability (though as always, we should note that on-die ECC isn't meant to be a replacement for full, multi-chip ECC). The standard also implements some other RAS features such as error checking and scrubbing, which, although not germane to gaming, will be a big deal for compute/AI use cases.

The added complexity of GDDR7 means that the pin count is once again increasing as well, with the new standard adding a further 86 pins to accommodate the data transfer and power delivery changes, bringing it to a total of 266 pins. With that said, the actual package size is remaining unchanged from GDDR5/GDDR6, maintaining that familiar 14mm x 12mm package. Memory manufacturers are instead using smaller diameter balls, as well as decreasing the pitch between the individual solder balls – going from GDDR6's 0.75mm x 0.75mm pitch to a slightly shorter 0.75mm x 0.73mm pitch. This allows the same package to fit in another 5 rows of contacts.

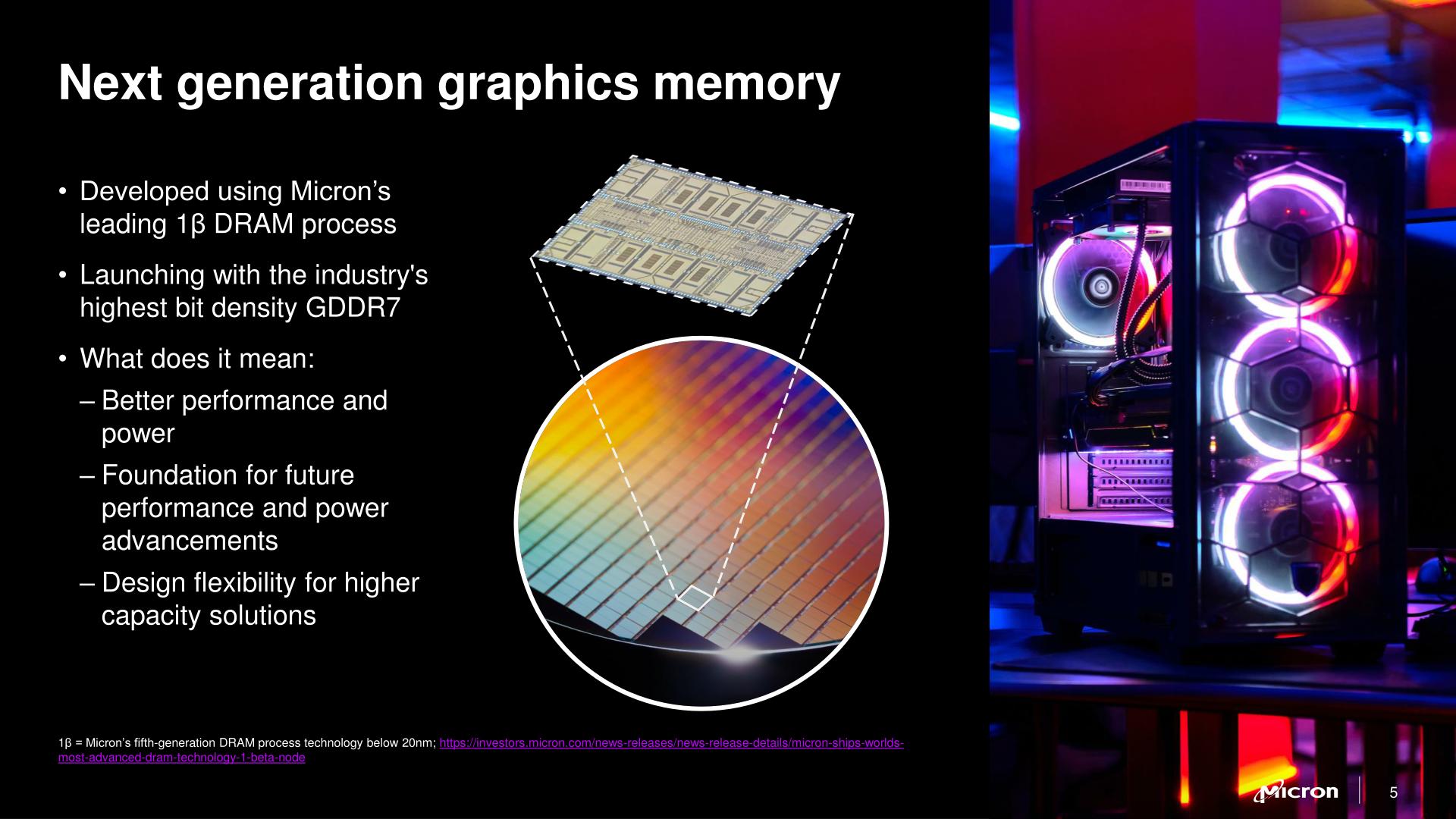

As for Micron's own production plans, the company is using its latest 1-beta (1β) fabrication process. While the major memory manufacturers don't readily publish the physical parameters of their processes these days, Micron believes that it has the edge on density with 1β, and consequently will be producing the densest GDDR7 at launch. And, while more nebulous, the company believes that 1β will give it an edge in power efficiency as well.

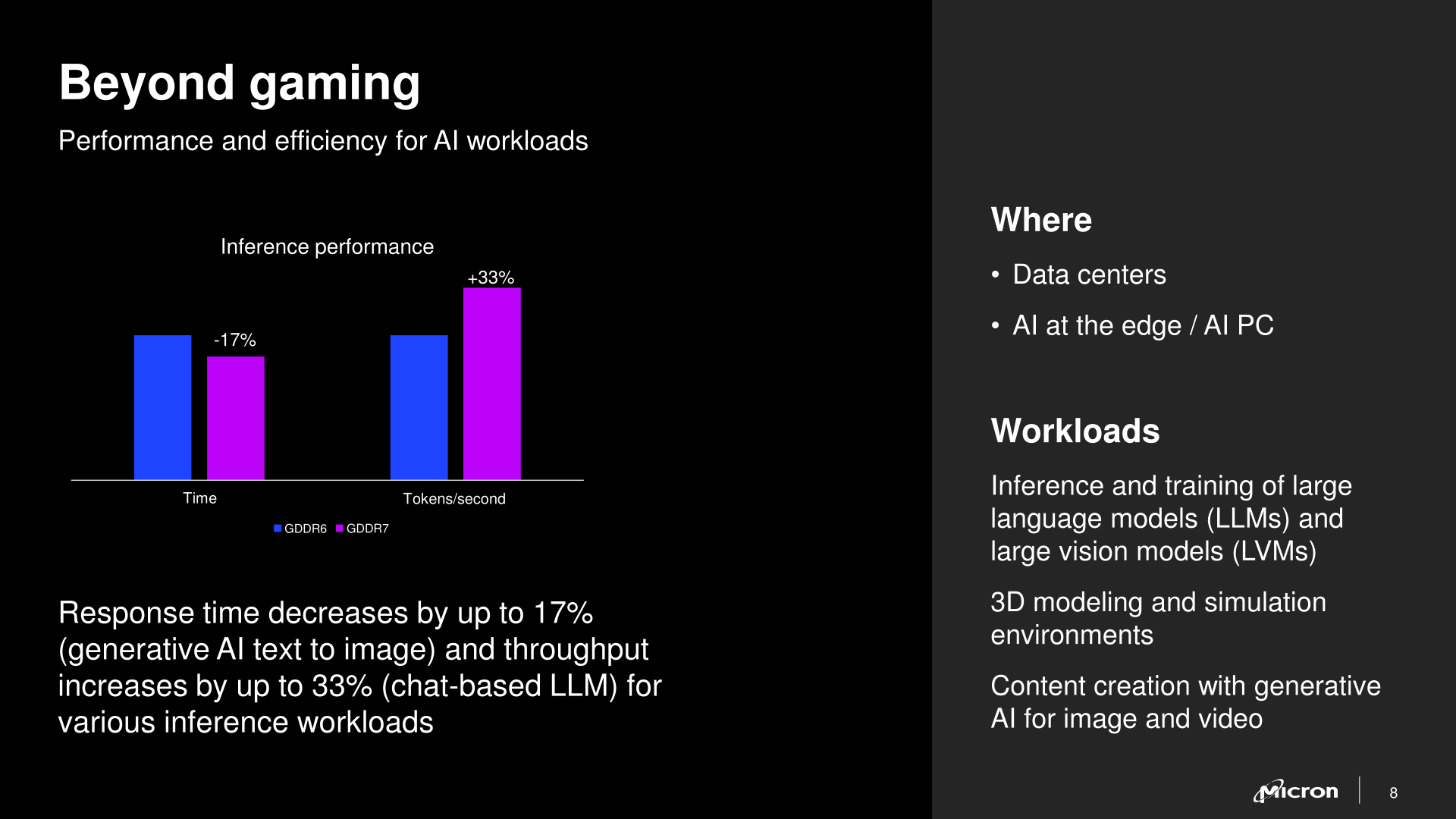

Micron says that the first devices incorporating GDDR7 will be available this year. And while video card vendors remain a major consumer of GDDR memory, in 2024 the AI accelerator market should not be overlooked. With AI accelerators still bottlenecked by memory capacity and bandwidth, GDDR7 is expected to pair very well with inference accelerators, which need a more cost-effective option than HBM.

Micron Hopes to Get to Mid-20% HBM Market Share with HBM3E

Speaking of HBM, Micron was the first company to formally announce its HBM3E memory last year, and it was among the first to start volume shipments earlier this year. For now, Micron commands a 'mid-single digit' share of this lucrative market, but the company has said that it plans to rapidly gain share. If all goes well, by the middle of calendar 2025 the company hopes to capture a mid-20% share of the HBM market.

"As we go into fiscal year 2025, we expect our share of HBM to be very similar to our overall share on general DRAM market," said Praveen Vaidyanathan, vice president and general manager of the Compute Products Group at Micron. "So, I would say mid-20%. […] We believe we have a very strong product as [we see] a lot of interest from various GPU and ASIC vendors, and continuing to engage with customers […] for the next, say 12 to 15 months."

When asked whether Micron can accelerate output of HBM3E at such a rapid pace in terms of manufacturing capacity, Vaidyanathan responded that the company has a roadmap for capacity expansion and that the company would meet the demand for its HBM3E products.

Source: Micron

15 Comments


CiccioB - Tuesday, June 11, 2024

It's not clueless. If you want a fast GPU you need fast RAM, or your GPU will starve for data whatever the size of the cache you are going to put on it.

RAM speed depends on the technology used.

PAM encoding is a step beyond the now insufficient NRZ coding mechanism. If you want fast transfer with decent current usage you need PAM.

Yes, you can obtain the same performance using NRZ encoding, but you'll need a higher frequency and so higher signal levels with higher power consumption.

So the technology used for RAM determines its features, such as speed and efficiency, and a really fast GPU needs them. Or they would not be so fast.

Papaspud - Saturday, June 8, 2024

Basically, they will be shipping new faster products and they expect to make significant inroads into the HBM market. I live near where they have their HQ in Boise, Idaho; they have been building a $15 billion expansion to the complex. I would assume if they are/were already investing that much money, they are pretty confident about the products coming down the pipeline. It looks like a pretty decent upgrade, but everything is all about AI... I personally at this point don't care about AI.

charlesg - Sunday, June 9, 2024

AI is the latest hype to funnel gazillions of dollars into. IMHO it should be called FAI - fake artificial intelligence. So far it's not panning out to be very intelligent.

Regurgitating data with no concept of reality is not intelligence.

If I were a betting man, I'd say this FAI will become the new search engine.

Better, but still a search engine.

FunBunny2 - Monday, June 10, 2024

"If I were a betting man, I'd say this FAI will become the new search engine."

Another vote for higher order thinking - something 'college' used to instill in students, until the evangelical radical right wingnuts insisted on religious purity and vocational training as the only vectors that should exist.

AI, as currently implemented, is just a massive correlation matrix, and as such, benefits from massive amounts of memory. QED.

haukionkannel - Monday, June 10, 2024

Let's see how much GDDR7 increases GPU prices!