Intel Confirms Working DX11 on Ivy Bridge

by Anand Lal Shimpi on January 10, 2012 10:51 AM EST - Posted in Trade Shows, CPUs, Intel, DX11, CES, Ivy Bridge, CES 2012

Yesterday we reported Intel ran a video of a DX11 title instead of running the actual game itself on a live Ivy Bridge notebook during Mooly Eden's press conference. After the press conference Intel let me know that the demo was a late addition to the presentation and they didn't have time to set it up live but insisted that it worked. I believed Intel (I spent a lot of time with Ivy Bridge at the end of last year and saw no reason to believe that DX11 functionality was broken) but I needed definitive proof that there wasn't some issue that Intel was trying to deliberately hide. Intel promptly invited me to check out the demo live on an Ivy Bridge notebook which I just finished doing.

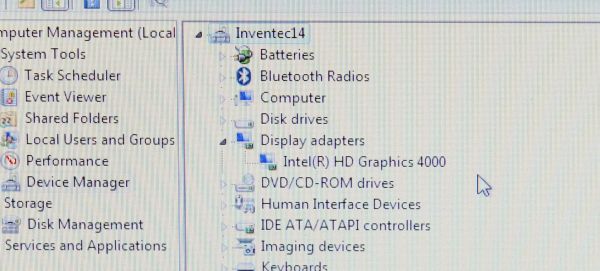

The notebook isn't the exact same one used yesterday (Mooly apparently has that one in some meetings today) but I confirmed that it was running with a single GPU that reported itself as Intel's HD Graphics 4000 (Ivy Bridge graphics):

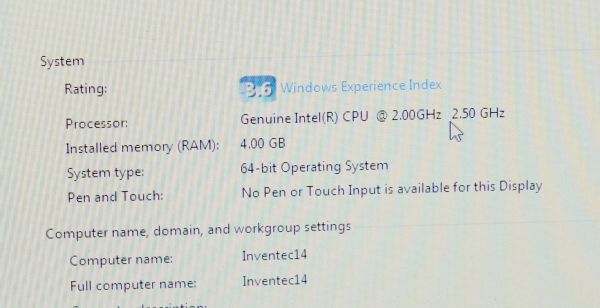

The system used an IVB engineering sample running at 2.5GHz:

And below is a video of F1 2011 running live on the system in DirectX 11 mode:

Case closed.

50 Comments


Donnie Darko - Tuesday, January 10, 2012 - link

Re: Re 1: No. Turbo is a function that works when you have TDP overhead. When running a game, the CPU cores are being hit continuously as well as the GPU, so neither of them is in a low enough power state to allow the other to turbo.

From Jarred and Anand's own review:

"The sole victory for Intel comes in the lightly-threaded StarCraft II where Intel can really flex its Turbo Boost muscles."

So for any modern game (i.e. more than 2 threads [so DX10+ render path]) turbo cannot kick in for any APU solution. If turbo is working in the demo, then it just means they are running a DX9 code path with a sampling of simple DX11 features, which means they are lying anyways.

Re: Re 2&3: Correct. Nor is there a law that they cannot lie, cheat or change their mind/product specs whenever they choose (see the new Atom losing DX10.1 capability at launch). All companies overpromise and underdeliver (look at Bulldozer). However, my problem isn't with Intel. Anand has an obligation to accurately report the capabilities of the products he's testing. He doesn't have to (and frequently doesn't), but that violates the implicit agreement he has with his viewers. Anand, who certainly knows these things have a large impact on capability, is covering for Intel at the expense of his readers.

To your comment, I'll take 'much' as 'must' and we'll go from there. I have no doubt that their production silicon (which is heavily binned) will be able to hit 2.5 GHz at 35W with the GPU running at X specs. The CPU will probably be able to turbo to over 3.0 GHz too, but the issue is that giving the CPU/GPU more thermal headroom allows you to take both parts up to higher performance. So even with final tweaking you'll never get the performance Anand reported on a 17W Ultrabook IVB. Physics doesn't care about your personal preference.

So go ahead and enjoy some revisionist history if you want, but the facts remain: Intel promised DX11 gaming on their Ultrabooks using a 17W IVB chip and will not deliver. Justify your personal preference all you want, but I'm not willing to buy into Atom 2.0 from Intel. My GF's mom's netbook is living up to the book part (sitting on the shelf) since it cannot do what it was promised to do. That was only a $300 mistake; the IVB Ultrabook will be a $1000 mistake for the poor people that listen to you and Anand.

extide - Wednesday, January 11, 2012 - link

Re #1: The screenshot with 2.5GHz was in Windows, not the game. Basically 100% guaranteed to be turbo. And since it's only 2.0 -> 2.5 it matches the profile of a ULV part. If it was a high end chip it would turbo up much higher (>3.0GHz).

extide - Wednesday, January 11, 2012 - link

Also, they promised DX11 support, THAT'S IT.

And anyone who buys ANY ultrabook expecting high performance gaming capability is going to be severely disappointed, as NOBODY is advertising that capability, and NOBODY is claiming it.

Get back to reality dude.

extide - Wednesday, January 11, 2012 - link

Check this out, http://www.anandtech.com/show/5192/ivy-bridge-mobi...

It is probably an i7-3667U

Base clock 2.0GHz, with turbo @ 2.9-3.1GHz depending on # of cores active. This is a 17W part.

therealnickdanger - Tuesday, January 10, 2012 - link

I wish we could all own 7970s, but we can't. My GTX 470 struggles with several games whether DX10 or DX11. It also lacks some features newer and more powerful GPUs feature. It doesn't mean it's not good or not worth owning. It also doesn't mean it's not a DX11 part. I have to adjust settings to match.

Alexvrb - Friday, January 13, 2012 - link

Yeah, and your GTX 470 is several times more powerful than this. So if you're struggling, what does that say for the Intel GPU?

MrSpadge - Tuesday, January 10, 2012 - link

Not if it's just a random hardware failure, which can happen to any product any time. You'd just need to get another one and it would work again.

chizow - Tuesday, January 10, 2012 - link

of lip syncing the half time show during the Super Bowl?

Seems like a lot of wasted ink over something so trivial, but thanks for clearing up the non-controversy for good with the video, Anand. :)

If Intel was making false claims about graphics performance it would've been exposed in a few months when IVB launched anyways.

Olbi - Monday, January 16, 2012 - link

in OpenGL too. In Mesa 8.0 they added incomplete OpenGL 3.0 support and they think all is good. This shitty graphics from Intel sucks. They can't even make good drivers for Linux, and they want DX11 on HD 4000? No more Intel IGP, only NVIDIA.

artk2219 - Monday, January 16, 2012 - link

Nvidia isn't really an option as an IGP since they do not make chipsets for AMD or Intel anymore. So your only Nvidia option is a discrete card, which adds to the system power draw and potentially lowers battery life. They do have hybrid graphics, but I'm still not sure if that fits into the "ultrabook" big picture. Regardless, I'm not really sure if they have a graphics core that fits into the same power envelope as something from Intel or AMD.