BenQ XL2720T Gaming Monitor Reviewed

by Chris Heinonen on June 17, 2013 4:35 PM EST

With TN panels you are, of course, subject to poor viewing angles compared to other monitor technologies. The problem is accentuated on larger displays like the XL2720T, where the screen covers a wider angle of your vision, so the edges start to show color shifts even when you are sitting directly centered on the screen. Even with regular content on the screen, I notice shifts in contrast and color at the edges in general use, which I find distracting. I noticed it less vertically, but the vertical angle isn't nearly as large as the horizontal one.

I also found the overall look of the XL2720T to be a bit worse than that of other displays I have had in recently. Perhaps it's the anti-glare coating, but everything looks slightly fuzzy, even the simple black text in this document that I'm typing right now. With the lower resolution I'd expect to see sharper edges and more visible pixels, but instead everything looks a bit too smoothed out. For general use, I can't imagine living without an IPS monitor at this point.

The BenQ has a lot of preset modes available, but the best data was obtained with the sRGB mode. Standard was a bit better for grayscale, but color accuracy was better in sRGB, making it the best overall choice. Surprisingly, Photo mode was far and away the least accurate preset on the BenQ, even worse than the FPS modes. Perhaps they meant to call it "Galaxy S3 Photo Mode", but I'd usually expect a mode for viewing photos to be accurate. So just don't use it, unless you want a really vivid Instagram filter applied to all the photos on your PC.

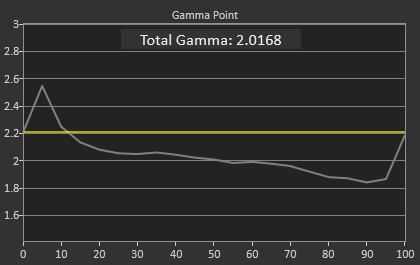

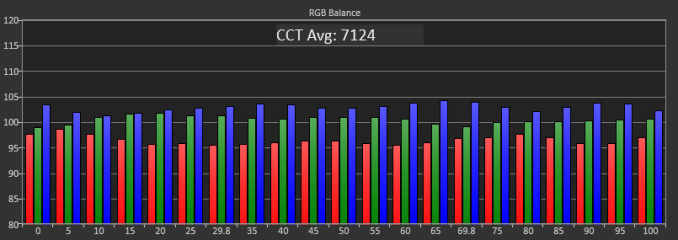

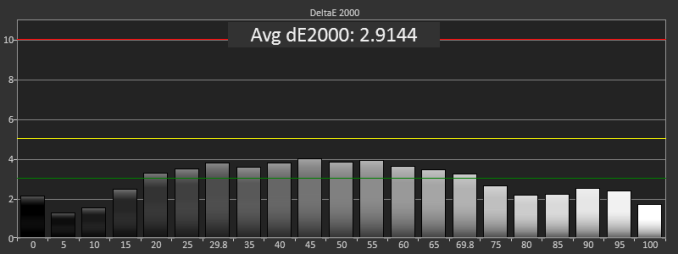

The uncalibrated grayscale performance of the BenQ is decent, though not outstanding. The average dE2000 is just below 3.0, which is the threshold of visibility. Looking at the RGB balance, you can see a distinct blue-green tint to the grayscale, which is easy to see on screen as well. The gamma comes out just above 2.0, well off our 2.2 target, and it isn't consistent across the range at all, which will lead to images that are a little dark in the shadows and too washed out in the highlights. The contrast ratio of 706:1 is on the low side, but it is the gamma issue that really makes the image look a bit washed out.
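To see why a gamma near 2.0 reads as washed out against a 2.2 target, here is a minimal sketch that assumes an idealized display following a single power law, Y = V^γ, where V is the normalized gray level. The function names are hypothetical, and the model deliberately ignores the BenQ's uneven curve (the cause of the darker shadows mentioned above); it only captures the average 2.0 versus 2.2 difference.

```python
from typing import List, Tuple


def relative_luminance(signal: float, gamma: float) -> float:
    """Luminance (0..1) of an idealized power-law display: Y = V ** gamma."""
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be normalized to the 0..1 range")
    return signal ** gamma


def compare_gammas(measured: float = 2.0, target: float = 2.2,
                   steps: int = 10) -> List[Tuple[int, float, float]]:
    """Return (gray level %, measured luminance %, target luminance %) per step."""
    rows = []
    for i in range(1, steps + 1):
        v = i / steps  # normalized gray level: 10%, 20%, ... 100% when steps=10
        rows.append((round(v * 100),
                     relative_luminance(v, measured) * 100,
                     relative_luminance(v, target) * 100))
    return rows


if __name__ == "__main__":
    for pct, y_measured, y_target in compare_gammas():
        print(f"{pct:3d}% gray: {y_measured:6.2f}% at gamma 2.0, "
              f"{y_target:6.2f}% at gamma 2.2")
```

At a 50% gray step, gamma 2.0 gives 25% of peak luminance versus roughly 21.8% at gamma 2.2. Every step below white comes out brighter than intended, which flattens the image.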

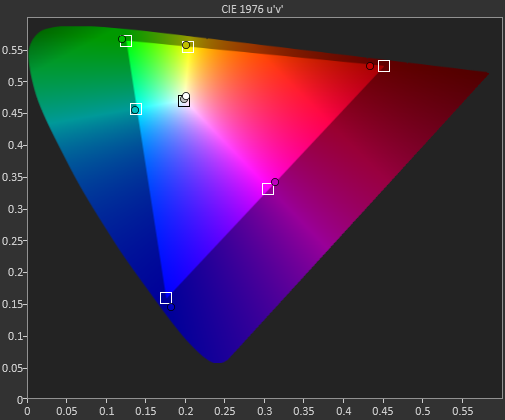

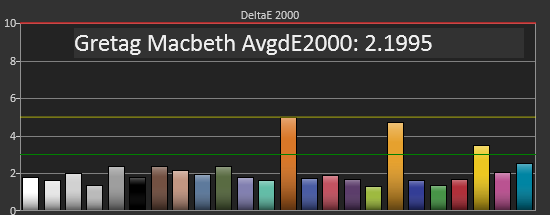

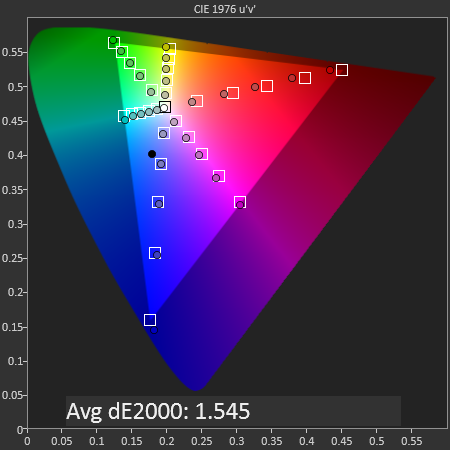

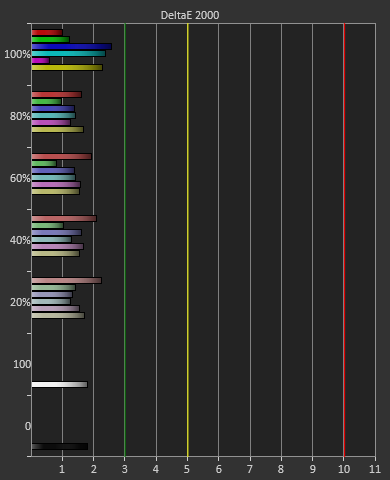

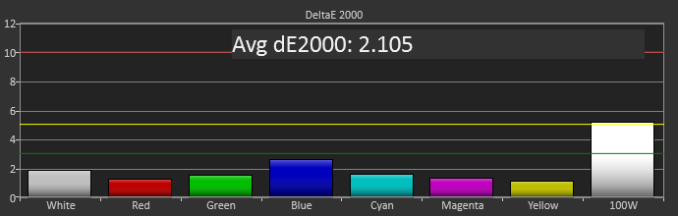

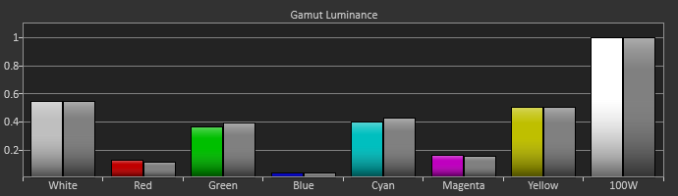

The color points are much better, with an average dE2000 of 2.1. Most luminances are close to correct, and every color has a dE2000 below 3. The worst result is the 100% saturation, 100% luminance white, which throws the average off a bit and will be noticeable, since that is the background white in most applications. Overall, these are good numbers for a preset mode.
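The dE2000 figures above are averages of per-patch CIEDE2000 color differences. The measurement software's exact implementation isn't stated here, so the following is a minimal sketch of the standard CIEDE2000 formula (with the weighting factors kL, kC and kH all set to 1). It also includes a hypothetical `average_delta_e` helper that averages (measured, reference) L*a*b* pairs the way a color sweep is summarized. The review's raw patch values aren't published, so any inputs are your own.

```python
import math
from typing import Iterable, Tuple

Lab = Tuple[float, float, float]  # (L*, a*, b*)


def delta_e_2000(lab1: Lab, lab2: Lab) -> float:
    """CIEDE2000 color difference with kL = kC = kH = 1."""
    L1, a1, b1 = lab1
    L2, a2, b2 = lab2

    # Rescale a* to correct hue behavior for low-chroma (near-neutral) colors.
    c_bar = (math.hypot(a1, b1) + math.hypot(a2, b2)) / 2
    g = 0.5 * (1 - math.sqrt(c_bar**7 / (c_bar**7 + 25**7)))
    a1p, a2p = (1 + g) * a1, (1 + g) * a2
    c1p, c2p = math.hypot(a1p, b1), math.hypot(a2p, b2)
    h1p = math.degrees(math.atan2(b1, a1p)) % 360 if c1p else 0.0
    h2p = math.degrees(math.atan2(b2, a2p)) % 360 if c2p else 0.0

    # Lightness, chroma and hue differences (hue wrapped into -180..180).
    dLp = L2 - L1
    dCp = c2p - c1p
    if c1p * c2p == 0:
        dhp = 0.0
    else:
        dhp = h2p - h1p
        if dhp > 180:
            dhp -= 360
        elif dhp < -180:
            dhp += 360
    dHp = 2 * math.sqrt(c1p * c2p) * math.sin(math.radians(dhp) / 2)

    # Mean lightness, chroma and hue of the pair.
    Lbp = (L1 + L2) / 2
    Cbp = (c1p + c2p) / 2
    if c1p * c2p == 0:
        hbp = h1p + h2p
    elif abs(h1p - h2p) <= 180:
        hbp = (h1p + h2p) / 2
    elif h1p + h2p < 360:
        hbp = (h1p + h2p + 360) / 2
    else:
        hbp = (h1p + h2p - 360) / 2

    # Weighting functions plus the rotation term that matters for blues.
    t = (1 - 0.17 * math.cos(math.radians(hbp - 30))
         + 0.24 * math.cos(math.radians(2 * hbp))
         + 0.32 * math.cos(math.radians(3 * hbp + 6))
         - 0.20 * math.cos(math.radians(4 * hbp - 63)))
    d_theta = 30 * math.exp(-(((hbp - 275) / 25) ** 2))
    r_c = 2 * math.sqrt(Cbp**7 / (Cbp**7 + 25**7))
    s_l = 1 + 0.015 * (Lbp - 50) ** 2 / math.sqrt(20 + (Lbp - 50) ** 2)
    s_c = 1 + 0.045 * Cbp
    s_h = 1 + 0.015 * Cbp * t
    r_t = -math.sin(math.radians(2 * d_theta)) * r_c

    return math.sqrt((dLp / s_l) ** 2 + (dCp / s_c) ** 2 + (dHp / s_h) ** 2
                     + r_t * (dCp / s_c) * (dHp / s_h))


def average_delta_e(pairs: Iterable[Tuple[Lab, Lab]]) -> float:
    """Mean CIEDE2000 over (measured, reference) pairs, e.g. one color sweep."""
    errors = [delta_e_2000(measured, reference) for measured, reference in pairs]
    if not errors:
        raise ValueError("need at least one patch")
    return sum(errors) / len(errors)
```

Because the result is an average, a single bad patch such as the full white described above can pull the mean up even when every other color scores well.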

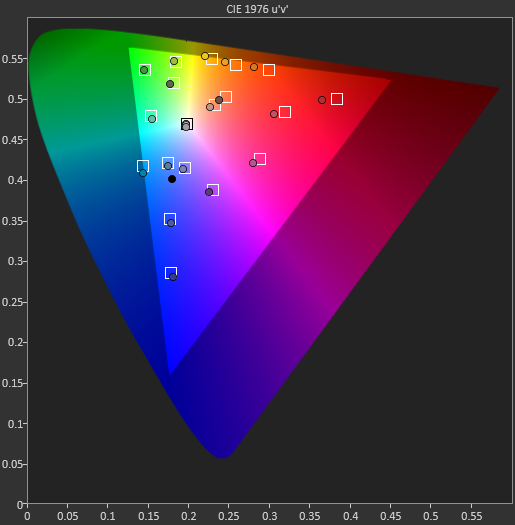

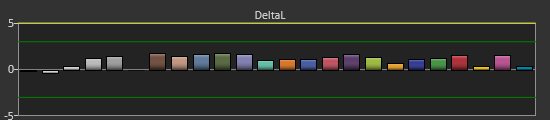

The ColorChecker tests look good overall, with the exception of two orange shades that are well off their targets. Every other color has an error that is practically invisible, as long as it isn't right next to a correct sample. The biggest issue is that luminance levels are too high across the board, and luminance errors are the most noticeable kind of color error.

Our saturation data is also very good, showing very uniform errors across the spectrum and no large spikes to be concerned about. Overall, for an uncalibrated preset, the sRGB mode does a good job on the BenQ XL2720T.

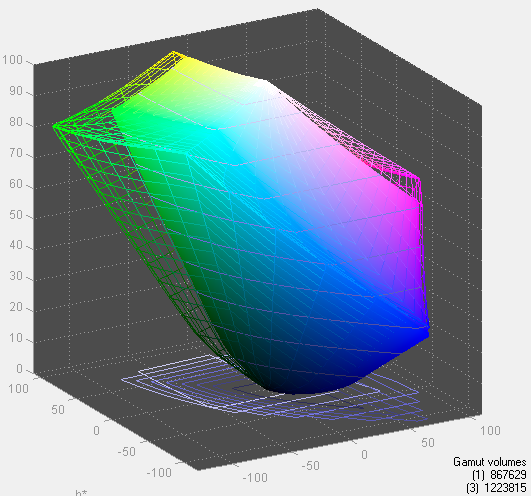

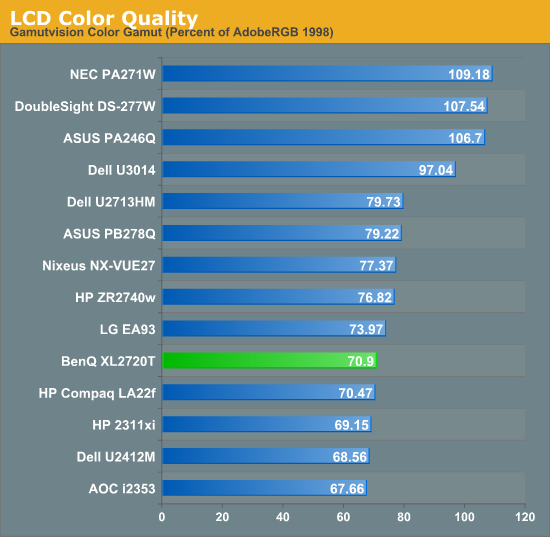

Looking at the gamut, we see just below 71% of the AdobeRGB gamut, which is what sRGB should measure out at.

79 Comments


chizow - Tuesday, June 18, 2013

Glad to see you are revisiting the review of this monitor with a fresh outlook. Honestly, after you have your new results with LightBoost, you may consider just re-writing the entire review. It's a completely different market for this type of monitor that gets away from the muddy mosaics of finely pixeled high-res monitors.

qiplayer - Saturday, November 9, 2013

Today's problem is communication. Companies want to throw stuff on the market and have us buy it. They actually have no idea what our needs and expectations are. They lack the ability to observe. ...Today I saw the graphs of the GTX 780, GTX 780 Ti and GTX Titan clock behaviour during heavy load/gameplay. The clock is high, and every 45 seconds it drops by 40%, so every 40 seconds you get FPS drops. Is it possible that they sell a card for $1000 and haven't found a better solution? YES, they (Nvidia) probably didn't even notice it. It would have been enough to set the max clock 3-5% lower to avoid the need to downclock. So yes, they produce and hope to sell; they found the solution that many gamers want in a monitor by chance, while looking for better 3D.

And if we look at cell phones, I've been looking for years for something that isn't an iPhone, but I always felt I couldn't type half as fast on Samsung, HTC and so on, because the keys lagged in lighting up when pressed. For years this aspect wasn't covered in reviews; then one day, "tadaaahhh", a revelation: a test of touchscreen response time. The iPhone was far better than the best Samsung. So is it possible that none of the thousands of reviewers worldwide noticed it? Not one engineer?

cheinonen - Wednesday, June 19, 2013

I've added charts and data on Lightboost to the review, as well as commentary on its image quality. They can all be found on the gaming use and lag page.

chizow - Wednesday, June 19, 2013

"One thing this does do it lock out all the picture controls except color and brightness."

It only locks you out once you enable LightBoost; prior to that you can make any adjustments to presets, color, temperature, sharpness, etc. At least that is how it worked on my 2 previous 3D Vision monitors.

Also, you shouldn't lose any brightness; if anything, the image is usually too bright for those who are not accustomed to it, as brightness is maxed out, locked, and strobing at 2x brightness.

Anyone interested in the truly important vitals of this monitor should follow mdrejhon's links as they give a much more accurate picture of this monitor's strengths.

cheinonen - Wednesday, June 19, 2013

You're going to lose brightness because it's working with black frame insertion, basically to mimic the performance of a CRT in that way. The lack of object permanence on-screen causes the eye to see motion as being more fluid when tracking something. This same behavior also means that instead of the backlight being on all the time, it's shut off every so often, and that causes a measurable drop in light output. With the monitor at factory settings, other than Lightboost, and contrast set to the maximum level before clipping, I got under 130 cd/m². Going higher requires clipping.

As far as the user controls being locked out, at least on the BenQ, when it went into Lightboost mode my previously set user color temperature settings were removed, leading to the blue image. Different monitors could easily have different settings, but on the BenQ they were all locked out.

I've read all of mdrejhon's links, and he's providing his perspective. He, and many others, are after the least amount of blur no matter what, and that's fine. To me, the trade-offs in brightness, color quality, and noticeable flicker are not worth it. But that's my opinion. However, I think comments claiming that color quality is unimportant compared to the 120Hz mode give BenQ a free pass. They could easily provide both, but choose not to due to cost or another reason. If color accuracy isn't important to you at all, that's fine, but it shouldn't be dismissed outright.

sweenish - Monday, June 17, 2013

180-220 is a 10% range, is it not?

brucek2 - Monday, June 17, 2013

Thanks Chris. I really appreciate direct, straightforward conclusions like yours. The most important part of most reviews to me is the part that says this product is either the winner for some specific use case or, if not, here are the likely better options to consider. Far too many reviewers are afraid to be so blunt, so I always appreciate it when I see it.

mdrejhon - Tuesday, June 18, 2013

To be fair, SkyViper (a former CRT user) thought a 120Hz monitor was disappointing until he enabled LightBoost: http://hardforum.com/showthread.php?p=1039591537#p...

"I felt like I may have wasted $300 bucks on a monitor that is full of compromises. The next thing I tried of course was using the Lightboost hack."

[...Enables LightBoost....]

“SWEET MOTHER OF GOD!

Am I seeing this correctly? The last time I gamed on a CRT monitor was back in 2006 before I got my first LCD and this ASUS monitor is EXACTLY like how I remembered gaming on a CRT monitor. I was absolutely shocked and amazed at how clear everything was when moving around. After seeing Lightboost in action, I would have gladly paid twice the amount for something that can reproduce the feeling I got when playing on a CRT. Now I really can’t see myself going back to my 30″ 2560×1600 IPS monitor when gaming. Everything looks so much clearer on the ASUS with Lightboost turned on.”

Panzerknacker - Monday, June 17, 2013

Agree with your conclusion Chris; TN monitors are just completely worthless for anything, and looking at them makes me puke. Many years ago I used to be a hardcore gamer, but when my CRT died and I was forced to purchase an LCD monitor, that completely spoiled the fun I had in gaming. Everything suddenly looked like complete crap, especially the dark atmospheric games I used to enjoy like Doom 3 and Splinter Cell. Displays like this are maybe only good for one thing, and that is professional gaming tournaments where nothing matters but response. Even while practicing at home, though, I would prefer the better looks of an IPS over the faster response of the TN.

offtopic:

How do modern IPS screens do in terms of all-round gaming vs CRTs? I still tend to think all LCDs just suck. I just bought a phone with the best display on the planet, and everybody and every review says it has the most awesome black levels ever in an LCD, but imo it still sucks compared to a CRT.

Insomniator - Monday, June 17, 2013

TN panels ruined gaming for you? Give me a break.