AMD Launches Mobile Kaveri APUs

by Jarred Walton on June 4, 2014 12:01 AM EST

AMD Kaveri FX-7600P GPU Performance Preview

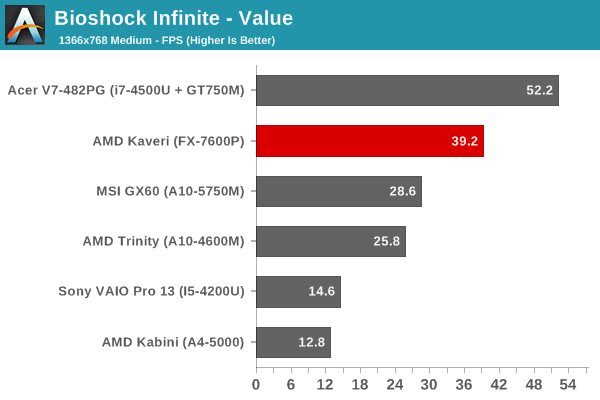

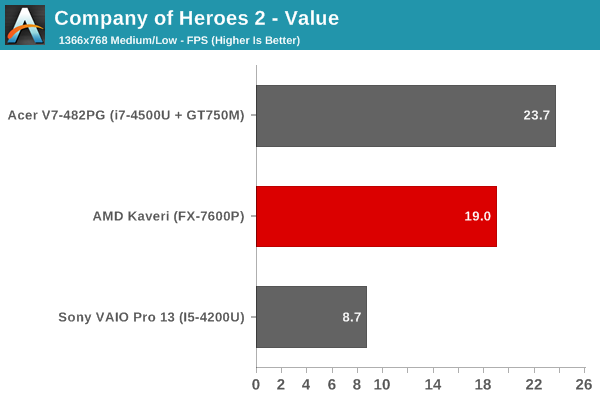

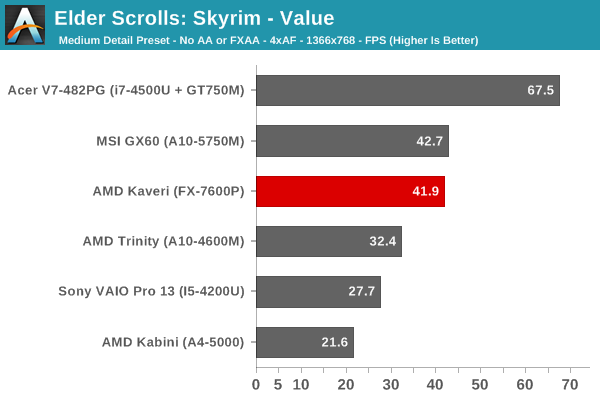

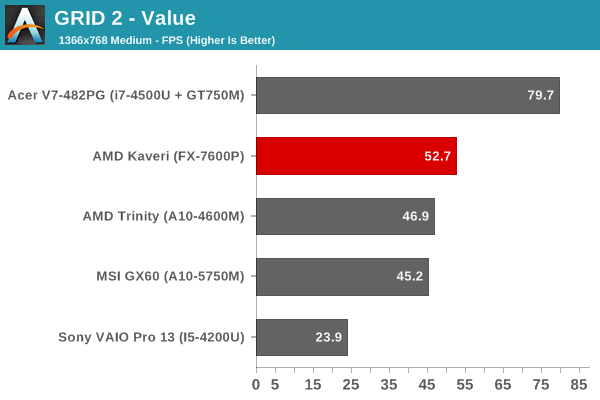

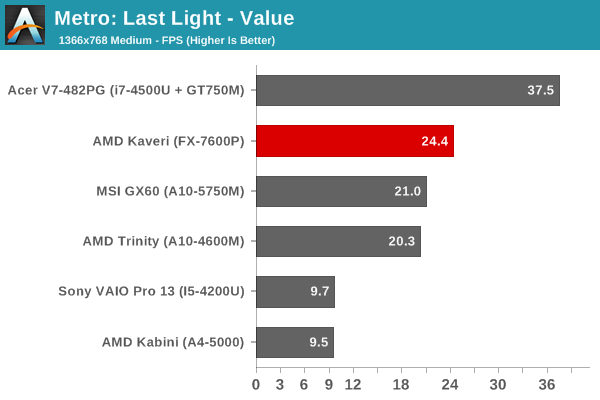

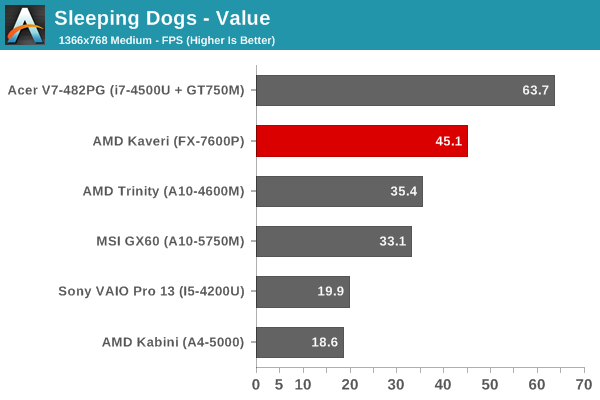

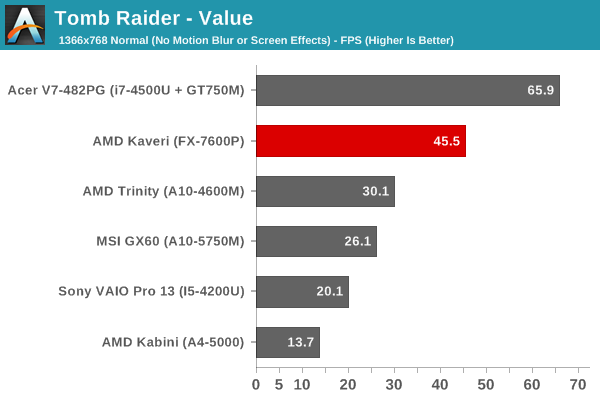

Given the 3DMark results we just showed as well as the increase in CPU performance, I was very interested to see what Kaveri could do in terms of gaming performance. Here I have to temper my comments somewhat by simply noting that the graphics drivers on the prototype laptops did not appear to be fully optimized. One game in particular that I tested (Batman: Arkham Origins) seemed to struggle more than I expected, and there are other games (Metro: Last Light and Company of Heroes 2) that will bring anything short of a mainstream dGPU to its knees. I've posted the Kaveri Mainstream and Enthusiast scores in Mobile Bench, but they're not particularly useful as most of the scores are below 30 FPS. Here, I'll focus on our "Value" settings, which are actually still quite nice looking (Medium detail in most games).

As expected, in most of the tests the Kaveri APU surpasses the gaming performance of every other iGPU, and in some cases it even comes moderately close to a mainstream dGPU. There's a sizeable gap between the Trinity/Richland APUs and Kaveri in most of the games I tested, which is great news for those looking for a laptop that won't break the bank but can still run most modern games.

Getting into the particulars, Skyrim seems to be hitting some bottleneck (possibly CPU, though even then I'd expect Kaveri to be faster than Richland), but the vast majority of games should run at more than 30 FPS. There was one system with a pre-release Mantle driver installed that was running Battlefield 4 reasonably well at low/medium details, and with shipping laptops and drivers (and perhaps DDR3-2133 RAM) I suspect even Metro might get close to 30 FPS. Of course, we're only looking at the top performance FX-7600P here, so we'll have to see what the various 19W APUs are able to manage in similar tests.

125 Comments

xenol - Wednesday, June 4, 2014 - link

TDP doesn't equal power consumption. It equals how much heat a cooling unit must dissipate for safe thermal levels of operation. While there is some correlation, as more TDP generally means higher power consumption, it's not a direct one.

Galatian - Wednesday, June 4, 2014 - link

I think it actually pretty much does equal top power draw, since energy in pretty much equals heat out. But do correct me if I don't understand the physics correctly. To me it simply seems like no work being done.

JarredWalton - Wednesday, June 4, 2014 - link

TDP means the maximum power that needs to be dissipated, but most CPUs/APUs are not going to be pushing max TDP all the time. My experience is that in CPU loads, Intel tends to be close to max TDP while AMD APUs often come in a bit lower, as the GPU has a lot of latent performance/power not being used. However, with AMD apparently focusing more on hitting higher Turbo Core clocks, that may no longer be the case -- at least on the 19W parts. Overall, for most users there won't be a sizable difference between a 15W Intel ULV and a 19W AMD ULV APU, particularly when we're discussing battery life. Neither part is likely to be anywhere near max TDP when unplugged (unless you're specifically trying to drain the battery as fast as possible -- or just running a 3D game I suppose).

Galatian - Wednesday, June 4, 2014 - link

Yes, which is why I said it equals top power draw ;-)

JarredWalton - Wednesday, June 4, 2014 - link

Yeah, my response was to this thread in general, not you specifically. :-)

nevertell - Wednesday, June 4, 2014 - link

It's amazing that we live in a world where information is accessible on a whim to most people living in the western world, yet even on a website that caters to more educated people (or so I would think), people have problems understanding even the simplest concepts that enable them to expose themselves to this medium. Energy is never lost, it's just used up in different ways. Essentially, if we had access to a superconductive material to replace lines and a really efficient transistor, we would have a SoC whose TDP is zero watts. Say a chip does not move a thing: there is no mechanical energy involved, so all of the energy is wasted as heat. Why? Electricity at its core is the flow of charged particles through a medium. If this medium is copper and the particles are electrons, the only thing standing in the way of the electrons flowing are the copper atoms. The electrons will occasionally bump into the atoms, exchanging kinetic energy, making the atom in question move. As the atoms move faster (i.e. their kinetic energy increases), collisions become more likely to occur, and so they do. In other words, the conductor's resistance increases. What scale do we use to measure the movement of atoms? Temperature! Temperature is literally a measure of the average kinetic energy of the atoms in an object. Thereby all of the energy that is used to power electronics just goes to waste. Kind of.

ol1bit - Wednesday, June 4, 2014 - link

Unless you live in a cold climate, then you get to use part of the energy as heat! :-)

Galatian - Thursday, June 5, 2014 - link

I'm not sure who you are responding to. Nobody said energy is lost. The discussion was first about AMD TDP not being the same as Intel TDP and then switched over to a discussion of TDP not actually meaning power draw, which by itself is true, but there obviously is a correlation, which several posters (yourself included, with a more physical explanation) talked about.

johnny_boy - Saturday, June 7, 2014 - link

Compare performance per watt in gaming and Intel stops looking impressive. If you're buying a notebook with the FX chip then that should be what you care about.

bji - Wednesday, June 4, 2014 - link

The comparison is for CPUs in the same price range, not CPUs in the same TDP range, obviously. So the performance is decent for the price, as gdansk correctly pointed out. It is not decent for the TDP, at least not compared to Intel's chips, which is what you are focusing on, and that is not the metric most people use when comparing processors.