Advatronix Cirrus 1200: a Storage Server Under Your Desk

by Johan De Gelas on June 6, 2014 5:00 AM EST

Low latency database transactions test

Before we can start comparing the Cirrus 1200 to an alternative configuration, we must first determine how to configure our system. There are two cache levels, the RAM cache of the controller and the SSD cache, and both can be set to cache reads and/or writes. That gives us eight different configurations, though of course not all of them make sense.

There is a quirk in the Adaptec software. We first enabled Maxcache on a single SSD. The software asked us whether we were sure (as SSDs can fail too), and when we answered "yes", all the software (BIOS and Maxview) proudly reported that SSD caching was enabled. It turned out that this was not the case at all: only when we configured the SSDs as a RAID-1 array was Maxcache (SSD caching) properly enabled.

The results are somewhat surprising: once Maxcache works properly, the RAID controller cache seems to just add latency. The RAID controller cache does help when the SSD cache does not accelerate writes, but with Maxcache active on the SSDs, enabling the RAID controller cache slows things down a bit.

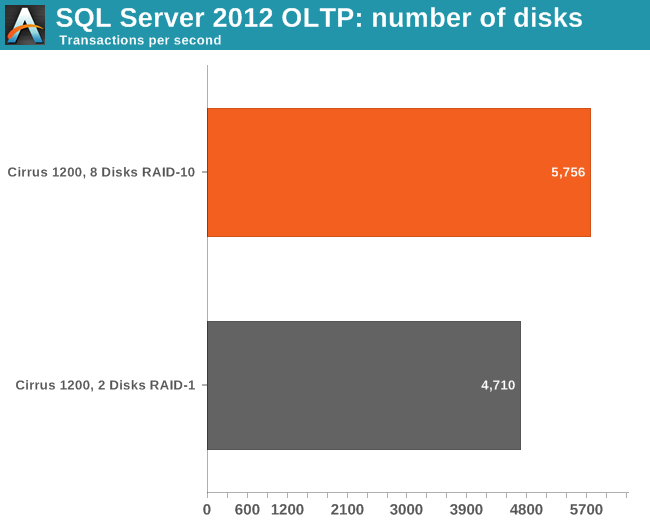

The next question we asked ourselves was whether it still matters to have lots of spinning disks behind the SSD cache. After all, the SSD cache seems to be doing all the hard work. So we replaced the eight Seagate 4TB drives in RAID-10 with a two-disk RAID-1 setup.

The performance gain of using eight spindles instead of two is fairly small, but still measurable: we measured a 22% increase in the total number of transactions. In most small businesses, this performance increase will not be enough to justify dedicating this many disks to a database. The RAID-1 setup is probably the better choice, as the remaining disks can then be used for serving files and documents. This way, the storage capacity of the file server can be a lot bigger, which is a huge advantage. In most enterprises, file server capacity will be a much higher priority than a few percent of extra database performance.
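
To put rough numbers on the capacity argument, here is a minimal sketch of the usable-capacity arithmetic for the two layouts. It assumes 4TB per drive as in the test setup, ignores hot spares and filesystem overhead, and assumes (our choice, not something the review specifies) that the six drives freed up by the two-disk RAID-1 database volume would be configured as either RAID-10 or RAID-6 for file serving. The function names are purely illustrative.

```python
# Hypothetical capacity sketch for the two layouts discussed above.
# Assumptions (ours, not from the review): 4TB per drive, capacities in
# decimal TB, no hot spares, filesystem overhead ignored.

DRIVE_TB = 4        # Seagate 4TB drives, as in the test setup
TOTAL_DRIVES = 8    # drive count used in the RAID-10 configuration


def mirrored_usable_tb(drives: int, size_tb: int) -> int:
    """RAID-1 / RAID-10: every block is stored twice, so half the raw space is usable."""
    if drives < 2 or drives % 2:
        raise ValueError("mirroring needs an even number of drives (at least 2)")
    return drives * size_tb // 2


def raid6_usable_tb(drives: int, size_tb: int) -> int:
    """RAID-6: two drives' worth of capacity goes to parity."""
    if drives < 4:
        raise ValueError("RAID-6 needs at least 4 drives")
    return (drives - 2) * size_tb


# Layout A: all eight drives in one RAID-10 array for the database.
all_in_raid10 = mirrored_usable_tb(TOTAL_DRIVES, DRIVE_TB)

# Layout B: two-drive RAID-1 for the database; the other six drives are freed
# up for file serving (the RAID level of the file array is our assumption).
db_raid1 = mirrored_usable_tb(2, DRIVE_TB)
files_raid10 = mirrored_usable_tb(TOTAL_DRIVES - 2, DRIVE_TB)
files_raid6 = raid6_usable_tb(TOTAL_DRIVES - 2, DRIVE_TB)

if __name__ == "__main__":
    print(f"Layout A: {all_in_raid10} TB RAID-10 for the database")
    print(f"Layout B: {db_raid1} TB RAID-1 for the database, "
          f"{files_raid10} TB (RAID-10) or {files_raid6} TB (RAID-6) for files")
```

Under these assumptions, a RAID-6 array on the six freed-up drives would by itself offer the same 16 TB as the eight-drive RAID-10 volume, while the database still gets its own mirrored pair behind the SSD cache.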

39 Comments

thomas-hrb - Friday, June 6, 2014

If you're looking at storage servers under the desk, why not consider something like the DELL VRTX? That at least has a significant advantage in the scalability department. You can start small and re-dimension to many different use cases as you grow.

JohanAnandtech - Friday, June 6, 2014

Good suggestion, although the DELL VRTX is a bit higher in the (pricing) food chain than the servers I described in this article.

DanNeely - Friday, June 6, 2014

With room for 4 blades in the enclosure, the VRTX is also significantly higher in terms of overall capability. Were you unable to find a server from someone else that was a close match in specifications to the Cirrus 1200? Even if it cost significantly more, I think at least one of the comparison systems should've been picked for equivalent capability instead of equivalent pricing.

jjeff1 - Friday, June 6, 2014

I'm not sure who would want this server. If you have a large SQL database, you definitely need more memory and better reliability. Same thing if you have a large amount of business data.

Dell, HP or IBM could all provide a better box with much better support options. This HP server supports 18 disk slots, two 12-core CPUs, and 768GB of memory.

http://www8.hp.com/us/en/products/proliant-servers...

It'll cost more, no doubt. But if you have a business that's generating TBs of data, you can afford it.

Jeff7181 - Sunday, June 8, 2014

If you have a large SQL database, or any SQL database, you wouldn't run it on this box. This is a storage server, not a compute server.

Gonemad - Friday, June 6, 2014

I've seen U server racks on wheels, with dark glass and a key lock, but that was just an empty "wardrobe" where you would put your servers. It was small enough to be pushed around, but had enough real estate to hide a keyboard and monitor in there, like a KVM solution. On the plus side, if you ever decided to upgrade, you could just plop your gear into a real rack. It felt less cumbersome than that huge metal box you showed there. Then again, it does require a server that conforms to a rack form factor.

Kevin G - Friday, June 6, 2014

Actually, I have such a Gator case. It is sold as a portable case for AV hardware but conforms to standard 19" rack mount widths and mounting holes. There is one main gotcha with my unit: it doesn't provide as much depth as a full rack, so I have to use shorter server cases, and those tend to be a bit taller. That works out, as the cooling systems of taller rack cases tend to be quieter, which is an advantage when bringing them to other locations. More of a personal preference thing, but I don't use sliding rails in a portable case, as I don't see that as wise for a unit that's going to be frequently moved around and traveling.

martixy - Friday, June 6, 2014

Someone explain something to me, please.

So this is specifically low-power: 500W on spec. Let's say it weren't low-power (e.g. twice that, 1kW). I'm gonna assume we're treading into CRAC territory at that point. So why exactly? Why would a high-powered gaming rig be able to easily handle that load, even with air cooling, while a server with the same power draw requires special cooling equipment with fancy acronyms like CRAC?

alaricljs - Friday, June 6, 2014

A gaming rig isn't going to be pushing that much wattage 24x7. A server is considered a constant load, and proper AC calculations even go so far as to account for the number of people expected to be in a room consistently, so a high-wattage computer is definitely part of the equation.

DanNeely - Friday, June 6, 2014

I suspect it's mostly marketing BS. One box, even a high-power one running at a constant 100% load, doesn't need special cooling. A CRAC is needed when you've got a data center packed full of servers, because they collectively put out enough heat to overwhelm general-purpose AC units. (With the rise of virtualization, the capacity of many older data centers has become limited by cooling rather than by the number of racks there's room for.)

At the margin, they may be saying it was designed with enough cooling to keep temperatures reasonable in air on the warm side of room temperature, instead of only when it's being blasted with chilled air. OTOH, a number of companies that have experimented with running their data centers 10 or 20°F hotter than traditional have found that the cooling cost savings came without any major impact on longevity, so...