AMD A10-7800 Review: Testing the A10 65W Kaveri

by Ian Cutress on July 31, 2014 8:00 AM EST

Gaming and Synthetics on Processor Graphics

The faster processor graphics become, the more of the low-end graphics market they consume: if the integrated graphics are better than a $50 discrete GPU, there ends up being no reason to buy that discrete GPU. This might seem a little odd for AMD, who also have a discrete GPU business. The counter argument is that integrated graphics are only comparable to low-end GPUs, which are historically low margin parts, and that capable integrated graphics might encourage users to invest in larger GPUs, especially as demands in resolution and graphical eye-candy increase. The compute side is also important, and the homogenisation of discrete and integrated graphics architectures helps software optimised for one also be accelerated on the other.

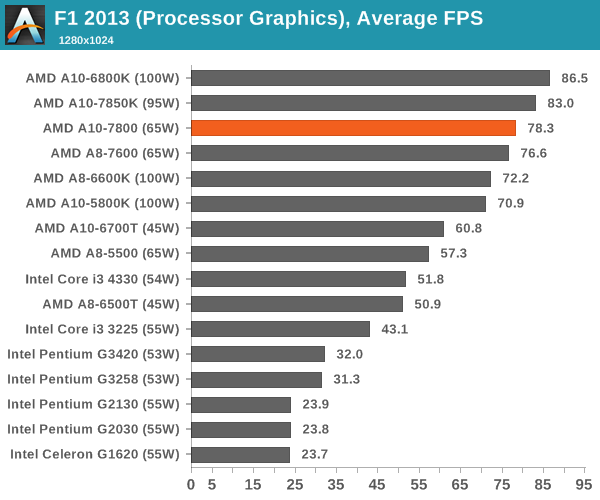

F1 2013

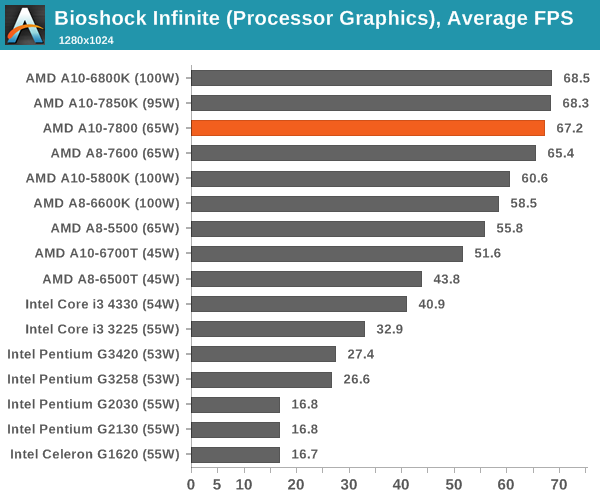

Bioshock Infinite

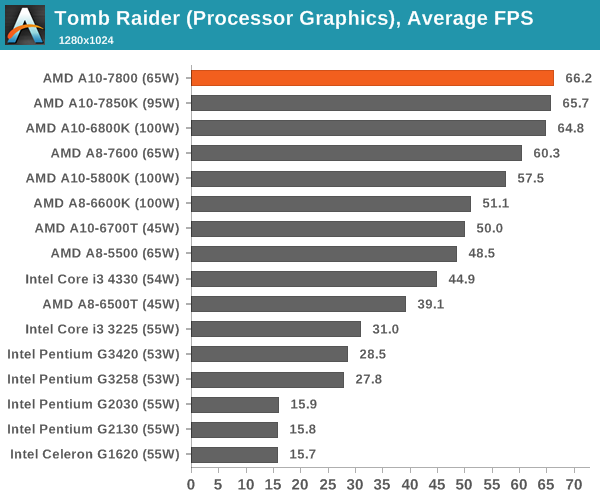

Tomb Raider

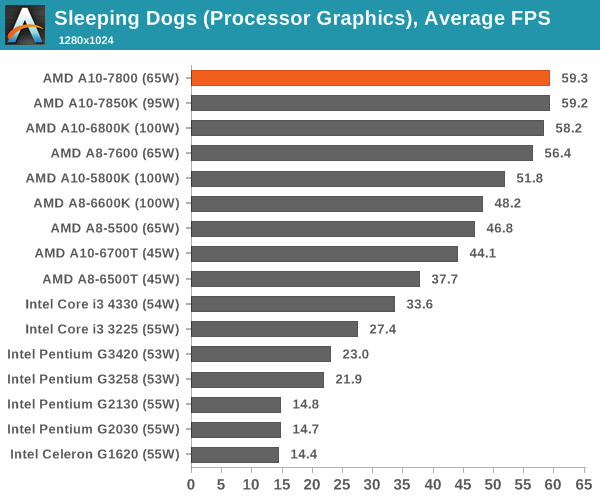

Sleeping Dogs

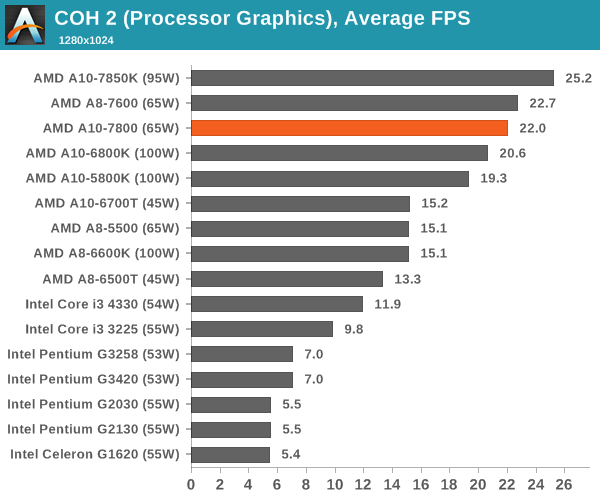

Company of Heroes 2

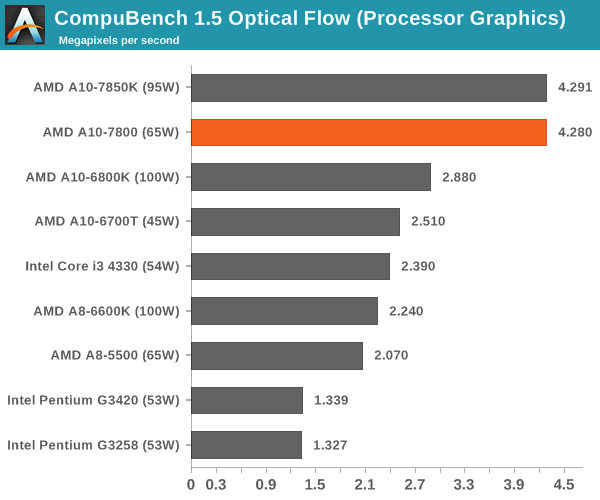

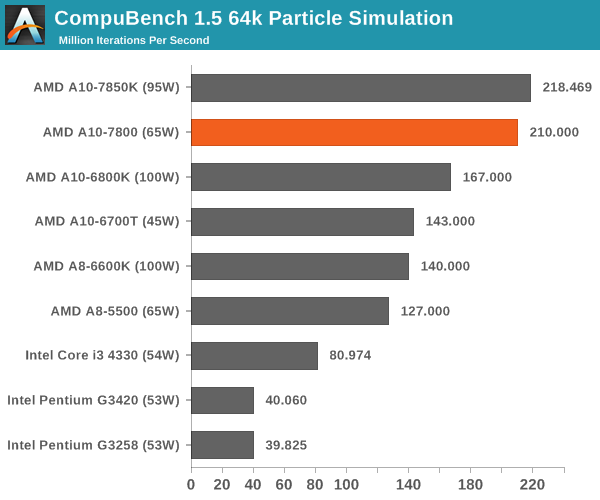

CompuBench 1.5

CompuBench is a new addition to our CPU benchmark suite, and as such we have only tested it on the following processors. The software uses OpenCL commands to process parallel information for a range of tests, and we use the flow management and particle simulation benchmarks here.

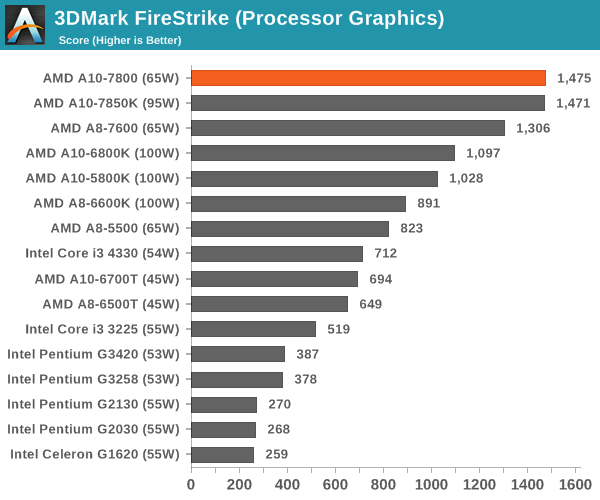

3DMark Fire Strike

The simple answer is this: for anything related to processor graphics, AMD's Kaveri wins hands down and by a large margin in the same power envelope for cheaper.

147 Comments


edlee - Thursday, July 31, 2014 - link

Nice review, but if I had a budget gaming machine, I would buy an inexpensive i5 with a better gpu

Rais93 - Thursday, July 31, 2014 - link

You do not understand what budget or inexpensive mean. A complete i5 platform costs a little more than an A10 system, but for many people a few dollars matter.

nathanddrews - Thursday, July 31, 2014 - link

AMD A10-7850K - $170

Decent FM2+ A88X mobo - $70 ($40-100)

Total base cost $240

Intel i5-4590S - $170

Decent 1150 H87 mobo - $70 ($30-400)

Total base cost $240

Add any identical dGPU, RAM, HDD/SSD, case, PSU, OS and the Intel system will dominate in every scenario for the same price.

http://www.anandtech.com/bench/product/1200?vs=119...

If no dGPU is allowed, then the AMD wins (in games and OCL/HSA only)... but would you seriously NEVER add a dGPU, even a year or two later? The moment that you do, you've essentially wasted your money on AMD. That's why the budget argument never makes much sense to me. You'll spend more over time buying ineffective budget rigs than you would if you spent more less often.

Flunk - Thursday, July 31, 2014 - link

If you're comparing Intel and AMD, as soon as you add the dGPU there is no reason to buy AMD. The CPU portion of the A10 is more comparable to an i3 than an i5 (with much worse single-thread performance), so you're paying for the iGPU more than anything.

AMD's A-series processors make the most sense in cheap laptops that would never get a dGPU. Half-decent GPU and CPU performance make sense there. But if you're talking desktop, the second you add a GPU the value proposition flies out the window.

silverblue - Thursday, July 31, 2014 - link

There IS the option of Crossfire with an R7 but you can't rely on it to always boost performance. Still, it's a better proposition than in the past.

Samus - Thursday, July 31, 2014 - link

AMD CPU power efficiency is also a 2.4:1 ratio to Intel.

For example, AMD's FX-4350 is the most competitive CPU they have (in price) to an i3-4330. The i3 completely destroys it in most benchmarks, yet it uses 54W and the FX uses 125W.

That's anywhere from $8-$15/year in power usage depending on the workload. Amortize that over 4-5 years and you may have just spent $50 more in electricity on the AMD CPU. This doesn't account for additional HVAC costs (air conditioning needs to run more to cool a room with AMD CPUs, increasing energy usage even more).

It's the same fact over and over. AMD is irrelevant because of their dinosaur manufacturing capabilities. They're always 2 generations behind Intel.

Gigaplex - Thursday, July 31, 2014 - link

Your electricity cost calculations assume 100% CPU load 100% of the time. Completely unrealistic.

Samus - Friday, August 1, 2014 - link

Did you really not even read my comment? I clearly stated a range for a user's workload. I didn't just give a universal figure.

$8-$15 is the range from average workload to 100% workload. And that's assuming your rate is $0.08/kWh. Parts of the country, such as SoCal, are upward of $0.24/kWh. This means AMD CPUs would cost about $1.00/day if run at full load 24/7, an extreme scenario, but the point was they use around 2.4x more power.

Unfortunately for AMD, that figure isn't a consistent scale in their favor; AMD CPUs idle at nearly quadruple the power of Intel's because they have no high-K dielectric to completely power gate unutilized sections of a core. All they can do is shut cores off, meaning one full core will always be on.

I was focusing on 100% workload simply because that's the least embarrassing presentation for AMD.
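
The arithmetic in this exchange can be checked with a few lines of Python. This is only a rough sketch, assuming the quoted 125W and 54W figures are treated as sustained power draw (they are TDP ratings, not measurements) and that `duty_cycle`, a hypothetical parameter introduced here, is the fraction of the period spent at that draw. The $0.08/kWh and $0.24/kWh rates are the ones quoted above.

```python
HOURS_PER_YEAR = 24 * 365

# Figures quoted in the comment thread; treated here as sustained draw,
# which is an assumption (TDP is a thermal rating, not a measurement).
FX_4350_W = 125
I3_4330_W = 54


def energy_cost_usd(watts, rate_per_kwh, duty_cycle=1.0, hours=HOURS_PER_YEAR):
    """Cost in USD of drawing `watts` for `duty_cycle` of `hours` hours."""
    kwh = watts / 1000 * hours * duty_cycle
    return kwh * rate_per_kwh


def annual_cost_gap_usd(rate_per_kwh, duty_cycle=1.0):
    """Extra yearly cost of the 125W part over the 54W part."""
    return energy_cost_usd(FX_4350_W - I3_4330_W, rate_per_kwh, duty_cycle)


if __name__ == "__main__":
    for rate in (0.08, 0.24):
        for duty in (0.16, 0.30, 1.0):
            gap = annual_cost_gap_usd(rate, duty)
            print(f"rate ${rate:.2f}/kWh, duty {duty:.0%}: ${gap:.2f}/year extra")
```

Under these assumptions the 71W gap costs roughly $50 per year at $0.08/kWh if both chips ran flat out around the clock, so the $8-$15 range quoted above would correspond to a duty cycle of roughly 16% to 30%. Idle power is not modelled.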

Audiolectric - Friday, August 1, 2014 - link

Well, different benchmarks taken by computerbase.de show that the 7800 actually idles at lower power consumption than the i3.

From my own experience I can verify that, but only for mobile CPUs/APUs. I did own an MSI GX60 and a GT60, and the GX60 was giving me about 30% more runtime from the battery.

And except for compiling the Linux kernel, I couldn't tell any difference in everyday performance. (Of course, in some games the A10-5750M was limiting performance when the i7-3632QM did not.)

silverblue - Friday, August 1, 2014 - link

Well, one module would still be on, meaning two integer cores still being active. Still, there's nothing stopping a user from undervolting them and making significant power savings.