Hidden Secrets: Investigation Shows That NVIDIA GPUs Implement Tile Based Rasterization for Greater Efficiency

by Ryan Smith on August 1, 2016 5:00 AM EST

As someone who analyzes GPUs for a living, one of the more vexing things in my life has been NVIDIA’s Maxwell architecture. The company’s 28nm refresh offered a huge performance-per-watt increase for only a modest die size increase, essentially allowing NVIDIA to offer a full generation’s performance improvement without a corresponding manufacturing improvement. We’ve had architectural updates on the same node before, but never anything quite like Maxwell.

The vexing aspect to me has been that while NVIDIA shared some details about how they improved Maxwell’s efficiency over Kepler, they have never disclosed all of the major improvements under the hood. We know, for example, that Maxwell implemented a significantly altered SM structure that was easier to reach peak utilization on, and thanks to its partitioning wasted much less power on interconnects. We also know that NVIDIA significantly increased the L2 cache size and did a number of low-level (transistor level) optimizations to the design. But NVIDIA has also held back information – the technical advantages that are their secret sauce – so I’ve never had a complete picture of how Maxwell compares to Kepler.

For a while now, a number of people have suspected that one of the ingredients of that secret sauce was that NVIDIA had applied some mobile power efficiency technologies to Maxwell. It was, after all, their original mobile-first GPU architecture, and now we have some data to back that up. Friend of AnandTech and all around tech guru David Kanter of Real World Tech has gone digging through Maxwell/Pascal, and in an article & video published this morning, he outlines how he has uncovered very convincing evidence that NVIDIA implemented a tile based rendering system with Maxwell.

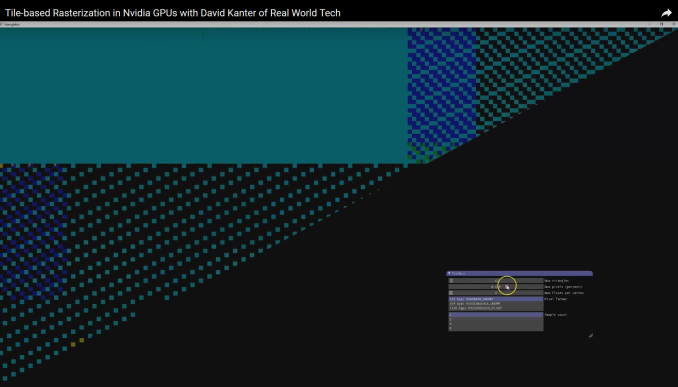

In short, by playing around with some DirectX code specifically designed to look at triangle rasterization, he has come up with some solid evidence that NVIDIA’s handling of triangles has significantly changed since Kepler, and that their current method of triangle handling is consistent with a tile based renderer.

NVIDIA Maxwell Architecture Rasterization Tiling Pattern (Image Courtesy: Real World Tech)

Tile based rendering is something we’ve seen for some time in the mobile space, with both Imagination PowerVR and ARM Mali implementing it. The significance of tiling is that by splitting a scene up into tiles, tiles can be rasterized piece by piece by the GPU almost entirely on die, as opposed to the more memory (and power) intensive process of rasterizing the entire frame at once via immediate mode rendering. The trade-off with tiling, and why it’s a bit surprising to see it here, is that the PC legacy is immediate mode rendering, and this is still how most applications expect PC GPUs to work. So to implement tile based rasterization on Maxwell means that NVIDIA has found a practical means to overcome the drawbacks of the method and the potential compatibility issues.
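To make the tiling idea above a little more concrete, here is a minimal Python sketch of the general technique, contrasted with immediate mode rendering. It is an illustration under stated assumptions, not NVIDIA's design, which the company has not disclosed. The 16x16-pixel tile size, the bounding-box binning, the edge-function coverage test, and the `writes` counter (a hypothetical stand-in for off-chip framebuffer traffic) are all assumptions made for illustration, as are the function names.

```python
# Minimal sketch of tile-based (binned) rasterization vs. immediate mode.
# Assumptions (illustrative only, NOT NVIDIA's undisclosed design):
# 16x16-pixel tiles, bounding-box binning, and a plain edge-function test.
# "writes" is a hypothetical proxy for off-chip framebuffer traffic.
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Triangle = Tuple[Point, Point, Point]
Frame = List[List[int]]

TILE = 16  # hypothetical tile size in pixels


def edge(a: Point, b: Point, p: Point) -> float:
    """Signed area: which side of the edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def covers(tri: Triangle, px: float, py: float) -> bool:
    """True if (px, py) lies on the same side of all three edges (either winding)."""
    a, b, c = tri
    p = (px, py)
    w0, w1, w2 = edge(b, c, p), edge(c, a, p), edge(a, b, p)
    return (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0)


def bin_triangles(tris: List[Triangle], width: int, height: int) -> Dict[Tuple[int, int], List[int]]:
    """Pass 1: for each tile, list the triangles whose bounding box touches it."""
    bins: Dict[Tuple[int, int], List[int]] = {}
    for i, tri in enumerate(tris):
        xs = [v[0] for v in tri]
        ys = [v[1] for v in tri]
        tx0 = max(0, int(min(xs)) // TILE)
        tx1 = min((width - 1) // TILE, int(max(xs)) // TILE)
        ty0 = max(0, int(min(ys)) // TILE)
        ty1 = min((height - 1) // TILE, int(max(ys)) // TILE)
        for ty in range(ty0, ty1 + 1):
            for tx in range(tx0, tx1 + 1):
                bins.setdefault((tx, ty), []).append(i)  # keeps submission order
    return bins


def render_tiled(tris: List[Triangle], width: int, height: int) -> Tuple[Frame, int]:
    """Pass 2: rasterize one tile at a time into a small local buffer
    (standing in for on-die storage), then write each tile out once."""
    framebuffer = [[0] * width for _ in range(height)]
    writes = 0
    for (tx, ty), ids in bin_triangles(tris, width, height).items():
        x0, y0 = tx * TILE, ty * TILE
        x1, y1 = min(x0 + TILE, width), min(y0 + TILE, height)
        local = [[0] * (x1 - x0) for _ in range(y1 - y0)]
        for i in ids:
            for y in range(y0, y1):
                for x in range(x0, x1):
                    if covers(tris[i], x + 0.5, y + 0.5):
                        local[y - y0][x - x0] = i + 1  # "color" = triangle index + 1
        for y in range(y0, y1):  # single write-back per pixel of the tile
            for x in range(x0, x1):
                framebuffer[y][x] = local[y - y0][x - x0]
                writes += 1
    return framebuffer, writes


def render_immediate(tris: List[Triangle], width: int, height: int) -> Tuple[Frame, int]:
    """Immediate mode: every covered pixel of every triangle goes straight to memory."""
    framebuffer = [[0] * width for _ in range(height)]
    writes = 0
    for i, tri in enumerate(tris):
        for y in range(height):
            for x in range(width):
                if covers(tri, x + 0.5, y + 0.5):
                    framebuffer[y][x] = i + 1
                    writes += 1
    return framebuffer, writes
```

For example, with a full-screen quad (two triangles) submitted three times on a 64x64 target, both functions produce the same image. The immediate mode path counts one external write per covered pixel per triangle, so overdraw multiplies its traffic, while the tiled path writes each pixel of a touched tile once. The sketch deliberately ignores the memory needed to store the per-tile triangle lists, which is one of the costs a real tiler has to manage.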

In any case, Real World Tech’s article goes into greater detail about what’s going on, so I won’t spoil it further. But with this information in hand, we now have a more complete picture of how Maxwell (and Pascal) work, and consequently how NVIDIA was able to improve over Kepler by so much. Finally, at this point in time Real World Tech believes that NVIDIA is the only PC GPU manufacturer to use tile based rasterization, which also helps to explain some of NVIDIA’s current advantages over Intel’s and AMD’s GPU architectures, and gives us an idea of what we may see them do in the future.

Source: Real World Tech

191 Comments


Scali - Monday, August 1, 2016

Must be something in that '480' name... The RX480 was also a disaster, breaking PCI-e power limits.

Alexvrb - Monday, August 1, 2016

People break the PCI-E limit all the time. It was never something people freaked out about. Anyway they released the fix in like a week. If it wasn't something they could fix, then they'd have been in trouble and would have had to offer a recall. With that said they needed to get their eyes drawn to the issue so they could correct it, but it was hardly a disaster. More like a footnote. These days I get aftermarket OC models and sometimes OC them some more. Probably breaking spec in the process.

StrangerGuy - Tuesday, August 2, 2016

Nobody could care less if specs are broken by OCing, but it's always funny when somebody can claim anything like "breaking PCI-E limit all the time" as gospel when no card at stock, custom or not, has so far been proven to do that except the unfixed reference RX480.

Scali - Tuesday, August 2, 2016

"With that said they needed to get their eyes drawn to the issue so they could correct it"

Wait, you're saying that a company such as AMD doesn't actually test the power draw on its parts in-house, before putting them on the market, and they need to rely on reviewers to do it for them, to point out they're going out-of-spec on the PCI-e slot, because there's no way AMD could have tested this themselves and found that out?

Heh. What I think happened is this: AMD tested their cards, as any manufacturer does... They pushed the card to the power limits, and slightly beyond, to eke as much performance out of it as possible, because they need everything they can get in this race with NVidia. They then said: "Nobody will notice", and put it on the market like that.

Problem is, people did notice.

silverblue - Tuesday, August 2, 2016

Sadly, much like the GTX 970's memory clocks.

Alexvrb - Wednesday, August 3, 2016

I didn't say they weren't aware. I said they needed their eyes drawn to the issue = needed their attention focused on it. Kind of like Nvidia and the 3.5GB debacle. They KNEW all along. The difference is one was fixed in a week with a driver (thanks to all the negative attention) and one is permanent. Yet the same people slamming AMD for their screwup here would gladly defend Nvidia's slip.

The reason I brought up OCing is that I'm trying to point out that it is done all the time and it's not as big of a deal as the frothing anti-AMD boys would have you believe. Oh and there HAVE been custom models that broke PCI-E limits as configured by the manufacturer. Nobody batted an eye. Many users broke the limit even further by bumping clocks further.

Ro_Ja - Wednesday, August 10, 2016

With the GTX 750 Ti also doing it, I'm not sure why you're blabbing about this RX480 power limit disaster. Almost any GPU will do this nowadays.

fanofanand - Tuesday, August 2, 2016

As someone who went from a 512MB 8800 GTS to an HD5850, I concur. ATI won that round and that's why I switched.

KillBoY_UK - Monday, August 1, 2016

More like 4. Since Kepler they have had better PPW, just with Maxwell the gap got a lot wider.

TessellatedGuy - Monday, August 1, 2016

Oh shit I've offended fanboys by saying the truth. Or maybe saying good things about nvidia isn't allowed because of their bad practices. C'mon, are you gonna be swayed to not buy an objectively better product just because the company is bad?