GIGABYTE Updates 4-GPU 2U G242 Server with Rome and PCIe4 for Ampere

by Dr. Ian Cutress on August 6, 2020 10:00 AM EST - Posted in

- Enterprise

- AMD

- Gigabyte

- A100

- NVIDIA

- Servers

- GIGABYTE Server

- Rome

- Ampere

As we wait for the big server juggernaut to support PCIe 4.0, a number of OEMs are busy building AMD EPYC versions of their systems to meet the demand for high-speed connectivity. To date there have been two main drivers for PCIe 4.0: high-bandwidth flash storage servers, and high-performance CPU-to-GPU acceleration, as seen with the DGX A100. As the new Ampere GPUs roll out in PCIe form, OEMs are set to update their portfolios with new PCIe 4.0-enabled GPU compute servers. GIGABYTE is among them, with the new G242-Z11.

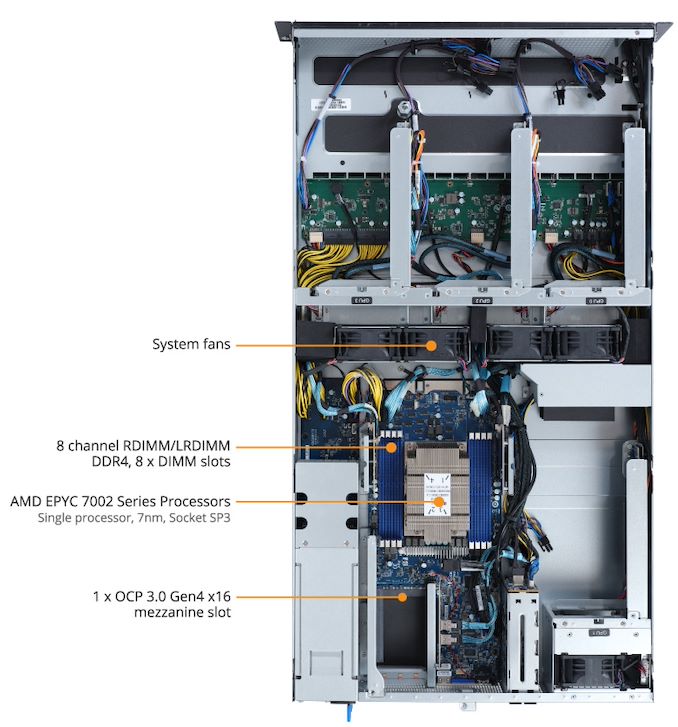

One of the key elements of a GPU server is airflow through the chassis: it has to provide sufficient cooling for as many 300W accelerators as can fit, not to mention the CPU side of the equation and any additional networking that is required. GIGABYTE has had some good success with its multi-GPU server offerings, so updating its PCIe 3.0 platform to Rome with PCIe 4.0 was the natural next step.

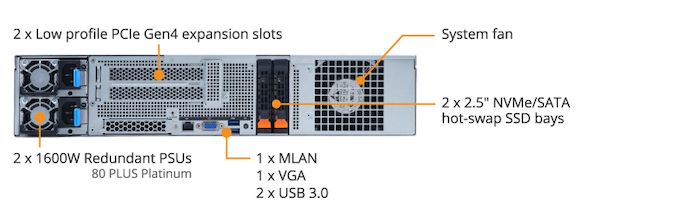

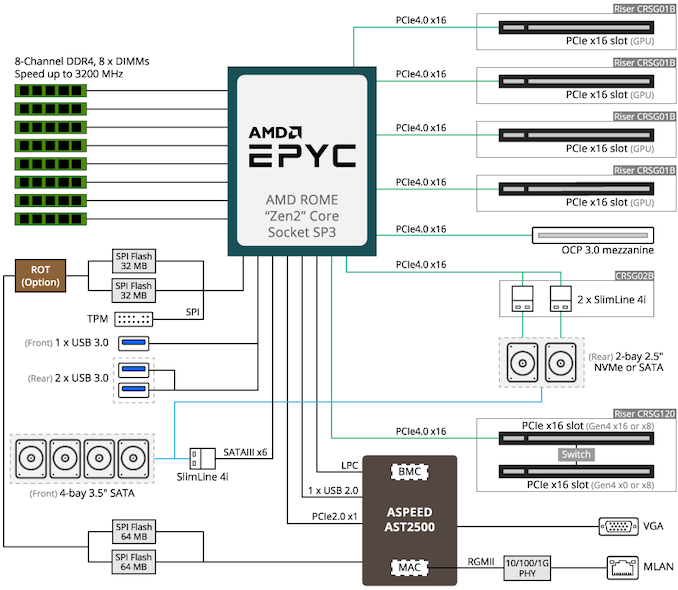

The G242-Z11 is a 2U rackmount server that supports four PCIe 4.0 cards with a full x16 link to each. That includes the new A100 Ampere GPUs, as well as AMD MI50 GPUs and networking cards. The system supports any AMD Rome EPYC processor, even the 280W 7H12 built for high-performance tasks, and also has support for up to 2 TB of DDR4-3200 across eight channels with the MZ12-HD3 motherboard.
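To put the link and memory figures above in perspective, the short sketch below works out the theoretical peak bandwidths they imply. It assumes the standard PCIe 4.0 signalling rate of 16 GT/s per lane with 128b/130b encoding and 64-bit DDR4 channels. The function and variable names are purely illustrative, and real-world throughput will be lower once protocol overhead is taken into account.

```python
# Back-of-the-envelope peak bandwidth for the configuration described above.
# Theoretical maxima only; protocol overhead is not modelled.

PCIE4_GT_PER_S = 16.0        # PCIe 4.0 raw rate per lane, in GT/s
PCIE_ENCODING = 128 / 130    # 128b/130b line encoding (PCIe 3.0 and later)
LANES_PER_CARD = 16          # full x16 link per accelerator
NUM_CARDS = 4


def pcie_gbytes_per_s(gt_per_s, lanes, encoding=PCIE_ENCODING):
    """Theoretical one-direction PCIe bandwidth in GB/s."""
    return gt_per_s * encoding * lanes / 8  # 8 bits per byte


def ddr_gbytes_per_s(mt_per_s, channels, bus_bytes=8):
    """Theoretical peak DRAM bandwidth in GB/s for 64-bit (8-byte) channels."""
    return mt_per_s * bus_bytes * channels / 1000


per_card = pcie_gbytes_per_s(PCIE4_GT_PER_S, LANES_PER_CARD)
all_cards = per_card * NUM_CARDS
memory = ddr_gbytes_per_s(3200, 8)

print(f"PCIe 4.0 x16, per card, one direction: {per_card:.1f} GB/s")
print(f"Four cards combined, one direction:    {all_cards:.1f} GB/s")
print(f"DDR4-3200, eight channels:             {memory:.1f} GB/s")
```

On these assumptions each card gets roughly 31.5 GB/s in each direction, about double what a PCIe 3.0 x16 slot offers, while the eight memory channels peak at 204.8 GB/s.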

With these sorts of builds, it is often the periphery that eases integration into existing infrastructure. On top of the four PCIe 4.0 accelerators, the G242-Z11 supports two low-profile half-length cards and one OCP 3.0 mezzanine card for other features, such as networking. The G242-Z11 also has four 3.5-inch SATA bays at the front and two NVMe/SATA SSD bays in the rear. Power comes from dual 1600W 80 PLUS Platinum power supplies. Remote management is handled through the AMI MegaRAC SP-X solution, which includes GIGABYTE's proprietary server remote management software platform.
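As a rough sanity check on the power figures, the sketch below adds up the headline loads mentioned in this article: four accelerators at 300W each and a 280W EPYC 7H12. These are assumptions for illustration only. Actual cards may draw less, memory, drives, fans and add-in cards are not counted, and GIGABYTE's announcement as quoted here does not say whether the two supplies run redundantly or share the load.

```python
# Rough power budget using only figures quoted in the article.
# Memory, storage, fans and networking are NOT included.

ACCELERATOR_W = 300     # per-card figure used in the article; real cards vary
NUM_ACCELERATORS = 4
CPU_W = 280             # EPYC 7H12 TDP as cited in the article
PSU_W = 1600            # rating of each of the two power supplies

core_load = ACCELERATOR_W * NUM_ACCELERATORS + CPU_W

# Headroom if a single supply has to carry the system (redundant operation,
# assumed here for illustration) versus both supplies sharing the load.
headroom_single = PSU_W - core_load
headroom_dual = 2 * PSU_W - core_load

print(f"Accelerators + CPU:          {core_load} W")
print(f"Headroom on one 1600W PSU:   {headroom_single} W")
print(f"Headroom on both PSUs:       {headroom_dual} W")
```

With these numbers the accelerators and CPU alone come to 1480W, which leaves little margin on a single 1600W supply once the rest of the system is counted.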

The new G242-Z11 will be available from September. Interested parties should contact their local GIGABYTE rep for pricing information.

Source: GIGABYTE

Related Reading

- GIGABYTE’s 4U 10x NVIDIA A100 New G492 Servers Announced

- GIGABYTE Launches R161-Series Overclocking Servers: 1U, Core X, Liquid Cooling

- GIGABYTE Launches Two 4U NVIDIA Tesla GPU Servers: High Density for Deep Learning

- GIGABYTE's G190-G30: a 1U Server with Four Volta and NVLink

- GIGABYTE Server Shows Two-Phase Immersion Liquid Cooling on a 2U GPU G250-S88 using 3M Novec

13 Comments

PeachNCream - Thursday, August 6, 2020

No, those fans will generate significant airflow, but that's quite an exaggeration.

Spunjji - Friday, August 7, 2020

I was thinking that, too. I doubt it will have a substantial impact for the most part. If it's really an issue for the end-user, though, then they could always individually test the GPUs and place the one with the best voltage characteristics in that location.

Ktracho - Friday, August 7, 2020

There are similar concerns with server boards that have two GPUs per board, where one GPU receives the hot air from the other GPU. The system has to be designed and tested to ensure both GPUs are within spec given the air flow the system provides. Sometimes air temperature depends on the position within a rack. Though not ideal, this type of situation is not uncommon.