Intel Alder Lake DDR5 Memory Scaling Analysis With G.Skill Trident Z5

by Gavin Bonshor on December 23, 2021 9:00 AM EST

Scaled Gaming Performance: Low Resolution

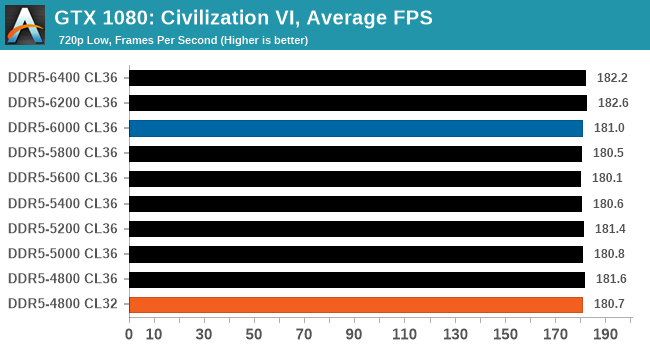

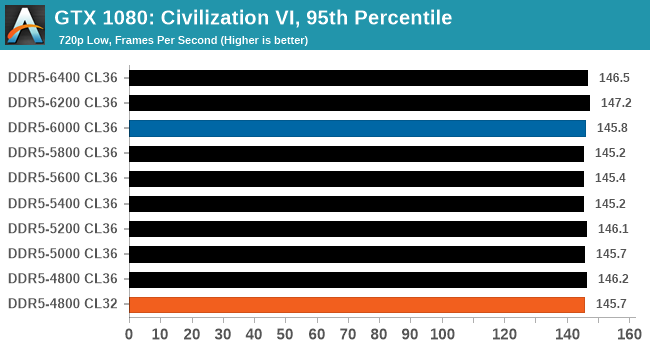

Civilization 6

Originally penned by Sid Meier and his team, the Civilization series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer underflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood, something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Blue is XMP; Orange is JEDEC at Low CL

Performance in Civ VI shows there is some benefit to be had by going from DDR5-4800 to DDR5-6400. The results also show that Civ VI can benefit from lower latencies, with DDR5-4800 at CL32 performing similarly to DDR5-5400 at CL36.
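One way to see why those two configurations land so close together is to convert CAS latency from clock cycles into nanoseconds. The sketch below uses the usual first-word latency approximation (CL cycles divided by the memory clock, which is half the data rate). The function name `cas_latency_ns` is our own, and the calculation ignores every other timing, so treat it as a rough illustration rather than a model of the measured results.

```python
def cas_latency_ns(data_rate_mts: int, cl: int) -> float:
    """Approximate CAS latency in nanoseconds.

    DDR memory transfers twice per clock, so the memory clock in MHz is
    data_rate / 2 and a single cycle lasts 2000 / data_rate nanoseconds.
    """
    return cl * 2000 / data_rate_mts


# Configurations mentioned in the text (data rate in MT/s, CAS latency).
configs = [(4800, 36), (4800, 32), (5400, 36), (6400, 36)]

for rate, cl in configs:
    print(f"DDR5-{rate} CL{cl}: {cas_latency_ns(rate, cl):.2f} ns")
```

Under this approximation, DDR5-4800 CL32 and DDR5-5400 CL36 both work out to roughly 13.3 ns, against 15 ns for DDR5-4800 CL36, which is consistent with the two settings performing alike in a latency-sensitive title.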

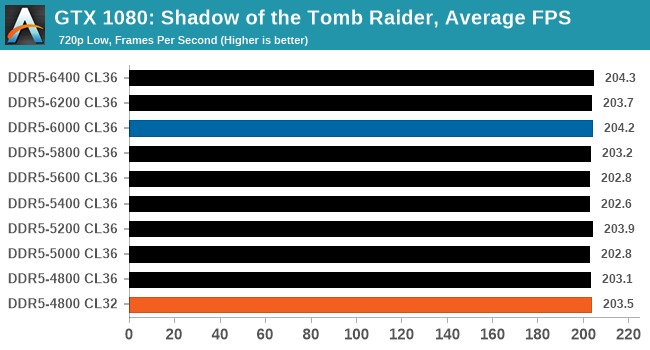

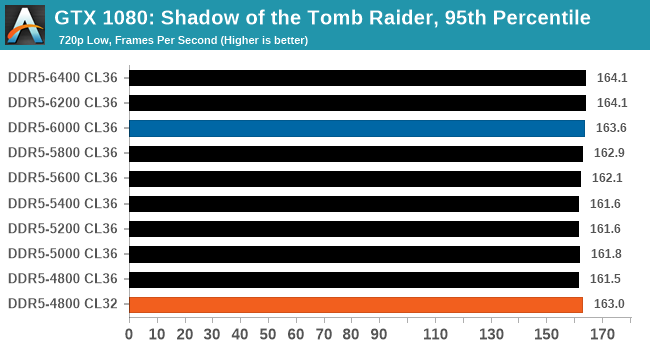

Shadow of the Tomb Raider (DX12)

The latest installment of the Tomb Raider franchise does less rising and lurks more in the shadows with Shadow of the Tomb Raider. As expected, this action-adventure follows Lara Croft, the main protagonist of the franchise, as she muscles through the Mesoamerican and South American regions looking to stop a Mayan apocalypse she herself unleashed. Shadow of the Tomb Raider is the direct sequel to Rise of the Tomb Raider; it was developed by Eidos Montreal and Crystal Dynamics, published by Square Enix, and hit shelves across multiple platforms in September 2018. This title effectively closes the Lara Croft Origins story and received critical acclaim upon its release.

The integrated Shadow of the Tomb Raider benchmark is similar to that of the previous game Rise of the Tomb Raider, which we have used in our previous benchmarking suite. The newer Shadow of the Tomb Raider uses DirectX 11 and 12, with this particular title being touted as having one of the best implementations of DirectX 12 of any game released so far.

Blue is XMP; Orange is JEDEC at Low CL

In Shadow of the Tomb Raider, we saw a consistent bump in both average and 95th percentile frame rates as we stepped up through each frequency. Testing at DDR5-4800 CL32, we saw decent gains in performance over DDR5-4800 CL36, with the lower latencies outperforming DDR5-5800 CL36 in both average and 95th percentile.
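For readers unfamiliar with the two metrics, the sketch below shows one common way of deriving them from a list of per-frame render times. This is an assumption on our part: the text above does not describe how the 95th percentile figure is calculated, the helper names are hypothetical, and the frame-time trace is made up purely for illustration.

```python
import math


def average_fps(frame_times_ms):
    """Average frame rate: frames rendered divided by total time."""
    return 1000 * len(frame_times_ms) / sum(frame_times_ms)


def percentile_fps(frame_times_ms, pct=95):
    """Frame rate at the given frame-time percentile (nearest-rank method).

    With pct=95, 95% of frames were rendered at least this fast.
    """
    ordered = sorted(frame_times_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based nearest rank
    return 1000 / ordered[max(rank, 1) - 1]


# Hypothetical trace: 90 smooth frames at 10 ms, 10 slow frames at 25 ms.
trace = [10.0] * 90 + [25.0] * 10

print(f"average: {average_fps(trace):.1f} FPS")
print(f"95th percentile: {percentile_fps(trace):.1f} FPS")
```

In this made-up trace the average stays near 87 FPS while the 95th percentile figure drops to 40 FPS, which is why the percentile result tends to be the more sensitive of the two to stalls such as waiting on memory.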

Strange Brigade (DX12)

Strange Brigade is set in Egypt in 1903 and follows a story very similar to that of the Mummy film franchise. This third-person shooter is developed by Rebellion Developments, which is more widely known for games such as the Sniper Elite and Alien vs Predator series. The game follows the hunt for Seteki the Witch Queen, who has arisen once again, and the only ‘troop’ who can ultimately stop her. Gameplay is cooperative-centric, with a wide variety of levels and many puzzles that need solving by the British colonial Secret Service agents sent to put an end to her reign of barbarism and brutality.

The game supports both the DirectX 12 and Vulkan APIs and houses its own built-in benchmark, which offers various options for customization including textures, anti-aliasing, reflections, and draw distance, and even allows users to enable or disable motion blur, ambient occlusion, and tessellation, among others. AMD has boasted previously that Strange Brigade is part of its Vulkan API implementation offering scalability for AMD multi-graphics card configurations. For our testing, we use the DirectX 12 benchmark.

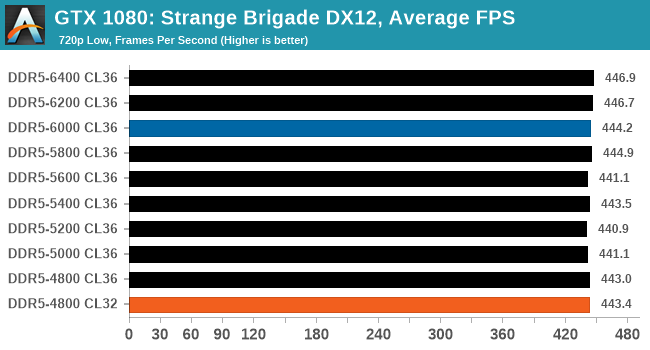

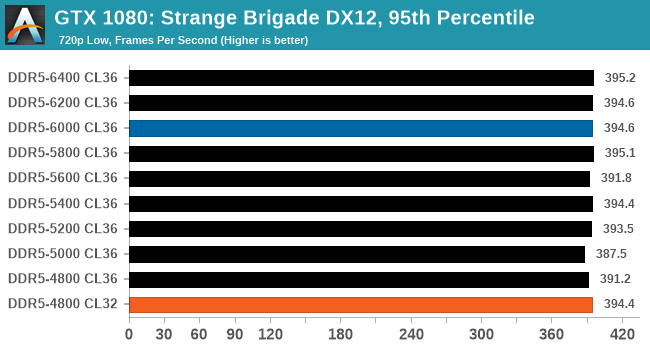

Blue is XMP; Orange is JEDEC at Low CL

Performance in Strange Brigade shows there is some benefit to higher frequencies, with DDR5-6400 CL36 consistently outperforming every CL36 setting from DDR5-4800 to DDR5-6200. The biggest benefit came in 95th percentile performance, with DDR5-4800 at the lower latency of CL32 coming close to DDR5-5800 performance.
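To put the frequency steps in context, the theoretical peak bandwidth of each setting can be estimated from the data rate and the bus width. The sketch below assumes a dual-channel configuration with a combined 128-bit data bus (two 64-bit DIMMs); that bus width and the function name `peak_bandwidth_gbs` are our assumptions for illustration, and real-world throughput will be lower than these ceilings.

```python
def peak_bandwidth_gbs(data_rate_mts: int, bus_width_bits: int = 128) -> float:
    """Theoretical peak bandwidth in GB/s (10^9 bytes per second).

    bus_width_bits defaults to 128, an assumed dual-channel setup.
    """
    bytes_per_transfer = bus_width_bits // 8
    return data_rate_mts * 1_000_000 * bytes_per_transfer / 1e9


baseline = peak_bandwidth_gbs(4800)

for rate in (4800, 5800, 6400):
    bw = peak_bandwidth_gbs(rate)
    print(f"DDR5-{rate}: {bw:.1f} GB/s ({bw / baseline - 1:+.0%} vs DDR5-4800)")
```

On paper, DDR5-6400 offers about a third more bandwidth than DDR5-4800 (102.4 GB/s against 76.8 GB/s under these assumptions), so a much smaller gain in frame rates suggests these games are not primarily limited by raw memory bandwidth at these settings.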

82 Comments

Targon - Thursday, December 23, 2021

Looking at these numbers, and how DDR5-5800 and DDR5-5000 both seem to have a performance penalty, but the other numbers aren't all that different implies something in the Alder Lake design or BIOS or SOMETHING isn't making very good use of the memory.

Some may just chalk it up to the RAM, but I suspect it has more to do with Alder Lake itself supporting both DDR4 and DDR5 memory. At that point, I suspect the memory controller on the CPU and how it links to the CPU cores is at fault. If the memory controller were actually making better use of the changes in DDR5 compared to 4 (dual 32 bit per clock eliminating certain wait states as one example), then we SHOULD see a definite improvement with better memory and not this trivial difference.

It will be interesting to see if AMD Zen4 Ryzen shows better scaling between memory speeds, because if there is, that will show very clearly how poorly Intel implemented DDR5 memory support. Just getting it working isn't the same as taking advantage of the benefits.

mikk - Thursday, December 23, 2021

They didn't test the RAM, it's a GPU test. It's called GPU limit.

haukionkannel - Friday, December 24, 2021

AMD use most likely more cache, so the difference will be smaller.

felixbrault - Thursday, December 23, 2021

Why is Anandtech still using Windows 10 for testing?!?

Ryan Smith - Thursday, December 23, 2021

Windows 11 has been very, er, "quirky" to put it politely. We're keeping an eye on it and running it internally, but thus far we've found it to be rather inconsistent on performance benchmarks.

In the interim, even when it behaves itself and doesn't halve our results for no good reason, we just end up with results similar to Windows 10. So there's no net benefit to using it right now.

Oxford Guy - Friday, December 24, 2021

Probably because Windows 11 is worse than 10.

Samus - Thursday, December 23, 2021

So basically the same story as ever, good timings mean almost as much as frequency, and paying ultra premiums for frequency still doesn't net reasonable returns on the investment.

As always, just stick with reliable, quality memory at JEDEC speeds and invest the savings elsewhere.

Oxford Guy - Friday, December 24, 2021

‘quality memory at JEDEC speeds’

Lol, no. Certainly not with a CPU like Zen 1. There was a huge difference between JEDEC and 3200-speed DDR-4, without a huge cost increase. Zen 3 is optimal at 3600. I would think you’re aware of the fabric speed being tied to the RAM speed, hence there being a price-performance sweet spot. That spot hasn’t been JEDEC for a long time.

Your point may apply to early adopter RAM at most. Once the DDR-5 market is more mature... In this situation it looks like getting Alder with DDR-4 capable of low latencies is most optimal. Too bad there’s no data on that here.

TheinsanegamerN - Monday, January 3, 2022

JEDEC DDR3 was 1066, and later updated to 1333 when everyone was running 1866 or 2133.

JEDEC DDR4 is 2133 MHz, up to 2666, when 3200-3600 is the performance sweet spot.

haukionkannel - Friday, December 24, 2021

Well it seems that we are not memory speed bottlenecked at the current state...

And timings seem to affect more than pure bandwidth.