Intel Announces RealSense LiDAR Depth Camera for Indoor Applications

by Anton Shilov on December 11, 2019 11:00 AM EST

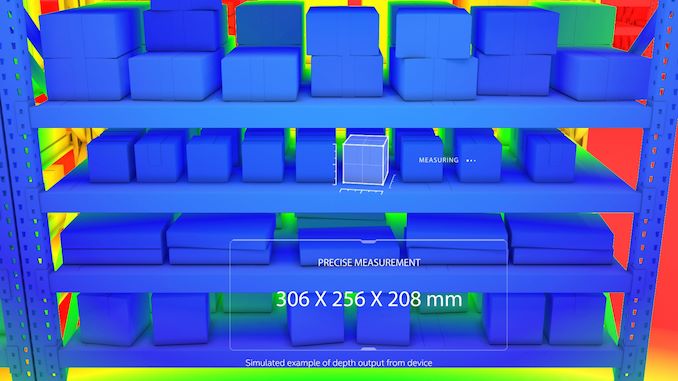

Intel has introduced the first member of its RealSense LiDAR family of depth cameras, the RealSense LiDAR L515. The sensor is primarily designed for indoor use, with Intel optimizing it to capture typical indoor ranges in a small, high-accuracy device.

Intel’s RealSense LiDAR L515 comes equipped with a 1920×1080@30Hz RGB sensor, a 1024×768@30Hz depth sensor, and the Bosch BMI085 inertial measurement unit (i.e., accelerometer and gyro). When it comes to physical capabilities, the RealSense LiDAR L515 has a range of 0.25 meters to 9 meters, a 70° ±3° × 43° ±2° RGB field-of-view, and a 70° ±2° × 55° ±2° depth field-of-view. The LiDAR can scan a scene with up to 23 million points of depth data per second. Furthermore, thanks to an exposure time of less than 100 ns, the camera can capture fast-paced scenes with minimal motion blur, while the built-in vision processor offloads appropriate processing from the host, saving battery life and improving performance.
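As a quick sanity check on those figures, the 23 million points per second follows directly from the depth resolution and frame rate, and the depth field-of-view translates into a coverage area at a given distance. The snippet below is a back-of-the-envelope sketch, not anything from Intel's SDK: the function names are made up for illustration, the nominal field-of-view is used without its ±2° tolerance, and the target is assumed to be a flat wall directly facing the camera.

```python
import math

# Figures quoted in the article for the L515 depth stream.
DEPTH_WIDTH, DEPTH_HEIGHT, DEPTH_FPS = 1024, 768, 30
DEPTH_FOV_H_DEG, DEPTH_FOV_V_DEG = 70.0, 55.0   # nominal values, tolerance ignored
MIN_RANGE_M, MAX_RANGE_M = 0.25, 9.0


def depth_points_per_second(width, height, fps):
    """Depth samples produced per second at full resolution and frame rate."""
    return width * height * fps


def footprint_m(distance_m, fov_h_deg, fov_v_deg):
    """Width and height (in meters) covered on a flat wall facing the camera.

    Hypothetical helper: simple pinhole geometry, 2 * d * tan(fov / 2).
    """
    width = 2 * distance_m * math.tan(math.radians(fov_h_deg) / 2)
    height = 2 * distance_m * math.tan(math.radians(fov_v_deg) / 2)
    return width, height


if __name__ == "__main__":
    points = depth_points_per_second(DEPTH_WIDTH, DEPTH_HEIGHT, DEPTH_FPS)
    print(f"Depth points per second: {points:,}")
    for distance in (MIN_RANGE_M, MAX_RANGE_M):
        w, h = footprint_m(distance, DEPTH_FOV_H_DEG, DEPTH_FOV_V_DEG)
        print(f"Coverage at {distance} m: {w:.2f} m x {h:.2f} m")
```

At full resolution this works out to roughly 23.6 million depth points per second, in line with Intel's quoted number.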

The LiDAR L515 uses Intel’s proprietary MEMS mirror scanning technology, which promises better power efficiency than other time-of-flight technologies. In fact, Intel goes as far as claiming that at 3.5 W, its RealSense L515 is the most power-efficient high-resolution LiDAR in the industry.
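To put the 3.5 W figure in context, it can be divided by the depth throughput to get a rough energy cost per depth point. This is only an illustrative estimate: it assumes the full 3.5 W is drawn while streaming depth at 1024×768@30Hz, which Intel's claim does not spell out, and the function name is hypothetical.

```python
# Figures quoted in the article; the pairing of the two is an assumption.
POWER_W = 3.5
POINTS_PER_SECOND = 1024 * 768 * 30   # full-resolution depth stream at 30 Hz


def energy_per_point_nj(power_w, points_per_second):
    """Energy spent per depth point, in nanojoules (watts = joules per second)."""
    return power_w / points_per_second * 1e9


if __name__ == "__main__":
    nj = energy_per_point_nj(POWER_W, POINTS_PER_SECOND)
    print(f"Approximate energy per depth point: {nj:.0f} nJ")
```

Under those assumptions the result is on the order of 150 nJ per depth point. The same calculation can be applied to another sensor's published power and throughput figures for a like-for-like comparison.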

Intel’s RealSense LiDAR L515 measures 61 mm by 26 mm and weighs around 100 grams. The small dimensions and low weight make it possible to install the RealSense L515 into most devices that need to support navigation or gesture recognition. Furthermore, the LiDAR uses Intel’s open-source Intel RealSense SDK 2.0 and connects to its host via a USB 3.1 Type-C interface. The sensor is reportedly compatible with Android, Windows, macOS, and Linux, which covers virtually all compute platforms available today.

Intel’s first RealSense LiDAR is currently available for pre-order directly from the company for $349.

Related Reading:

- Arm Unveils Arm Safety Ready Initiative, Cortex-A76AE Processor

- Imagination Launches PowerVR Automotive Initiative, 8XT-A GPU IP

- Investigating NVIDIA's Jetson AGX: A Look at Xavier and Its Carmel Cores

- Arm Announces Cortex-A65AE for Automotive: First SMT CPU Core

- Intel's Standalone 6DoF RealSense Tracking Camera T265 with Movidius Inside

Source: Intel

33 Comments


PeachNCream - Wednesday, December 11, 2019 - link

This seems like something Google would love to sell you in order to measure your bust size and then use it as the basis for spamming you with enhancement or reduction adverts after using it to violate your privacy on an on-going basis while suckering you into thinking you're getting some actual benefit from using it.

Eliadbu - Wednesday, December 11, 2019 - link

In other words: mining data about you and your apartment, then using it for advertising or, even worse, selling it to a 3rd party.

Amandtec - Wednesday, December 11, 2019 - link

Google don't sell your data. You are thinking of Facebook.

GreenReaper - Wednesday, December 11, 2019 - link

No, they sell access to match against it. So they effectively *rent* your data - to the highest bidder.

dullard - Wednesday, December 11, 2019 - link

You aren't that far off: https://www.intelrealsense.com/naked-home-body-sca...

mode_13h - Thursday, December 12, 2019 - link

That's surprising. I didn't want to click your link, so I searched it out and it appears to be legit.

mode_13h - Thursday, December 12, 2019 - link

It's funny you say that, because Google actually had a phone platform that included a depth sensor, which they killed about 1.5 years ago.

So, no. Google clearly does not care about accurate depth maps. If they want to estimate your bust size, they'll just have their deep learning algorithms look you over with your RGB camera.

Ikefu - Wednesday, December 11, 2019 - link

I wonder how this would do as a replacement for Xbox Kinect in hobby and academic projects now that the consumer grade Kinect is defunct.

mode_13h - Thursday, December 12, 2019 - link

Not defunct: https://www.microsoft.com/en-us/p/azure-kinect-dk/...

The sample images I've seen from it look very impressive. Plus, it supports sensor teaming for volumetric captures.

imaheadcase - Wednesday, December 11, 2019 - link

For a home user, what would this be practical for?